Fake Door Testing: How to Validate Feature Demand Before You Build

Developing new product features is often seen as the lifeblood of innovation, yet many teams pour resources into features that ultimately fall flat. Some frequently cited estimates put the share of new product ideas that fail to meet user expectations or deliver the expected business impact as high as 90%. Whatever the exact figure, it highlights a harsh reality for product teams: not all ideas are worth pursuing.

But what if you could test the waters before you fully commit? What if you could know whether a feature would resonate with your audience without the risk of wasted development time and resources? This is where fake door testing comes in: a powerful, low-cost approach that lets you validate demand before investing heavily in development.

In this guide, we’ll break down how fake door tests work, how to design them, and what to watch out for so you don’t turn your users against you. The goal is smarter decisions, less risk, and features that are truly aligned with your customers’ needs.

What is fake door testing?

Fake door testing (also known as painted door testing) is a lean technique for testing the viability of new ideas before committing resources to their development. With minimal investment, you present users with a feature that doesn’t exist yet and track who engages with it.

Most users will say yes when you ask if they want a feature. Far fewer will actually click on it. That gap is where most product decisions go wrong.

How is fake door testing different from A/B testing and smoke tests?

Don’t get confused between these three methods. They answer different questions at different stages.

- Fake door testing is for before you build. You’re checking whether anyone actually wants the product/feature yet. You can test it with a UI element and click rate to measure interest.

- A/B testing is for after you build. You have two versions of something that exists, and you need to know which one performs better. It requires data from users and enough volume to be statistically meaningful.

- Smoke tests are neither. They’re internal release checks that verify your build isn’t broken before it goes live. They have nothing to do with user demand.

The simple rule of thumb: if you’re still deciding whether to build something, run a fake door test. If you’ve built it and need to optimize, run an A/B test. If you’re shipping code, run a smoke test.

How does a fake door test work (and why)?

In a fake door test, the customer gets an invitation to use a product or a feature. The catch is that the product doesn’t exist yet!

That’s why, when they enter the fake door (be it a CTA button, an in-app notification, or a fake advertisement), they are taken to another page that reveals the truth: the product is not there yet, but could be coming in the future.

If you have a product idea but are not sure how much traction it can get, the data collected from the fake door test can help you make the call. By looking at metrics like click-through rate (CTR), the share of users who saw the fake door and clicked it, you can gauge user interest and decide which feature or product ideas should go into the product development process.
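To make the metric concrete, here is a minimal sketch of how CTR could be computed and used to rank ideas. The feature names and counts are made up for illustration, and `click_through_rate` is a hypothetical helper, not part of any analytics tool.

```python
def click_through_rate(impressions: int, clicks: int) -> float:
    """Return clicks divided by impressions, or 0.0 when nobody saw the fake door."""
    if impressions < 0 or clicks < 0:
        raise ValueError("counts must be non-negative")
    if clicks > impressions:
        raise ValueError("clicks cannot exceed impressions")
    if impressions == 0:
        return 0.0
    return clicks / impressions


# Hypothetical numbers for two candidate features shown to similar audiences.
candidates = {
    "bulk_export": click_through_rate(impressions=2000, clicks=240),
    "dark_mode": click_through_rate(impressions=2000, clicks=60),
}

# Rank the ideas by measured interest, highest first.
ranked = sorted(candidates.items(), key=lambda item: item[1], reverse=True)
for name, ctr in ranked:
    print(f"{name}: {ctr:.1%}")
```

Comparing rates only makes sense when the fake doors were shown to comparable audiences in comparable placements, so treat a ranking like this as a starting point rather than a verdict.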

Apart from giving you the data, a well-executed painted-door test can help you attract new customers, spark their curiosity, and increase the loyalty and commitment of your existing user base.

When should you use fake door testing?

There are several situations when you might want to consider running such a test before fully committing to development. Let’s look at the most important ones.

Validating a new product idea

There have been too many products that failed because the MVP turned out not to be that viable after all. Conducting painted-door tests allows you to validate demand for product concepts before spending any money on R&D.

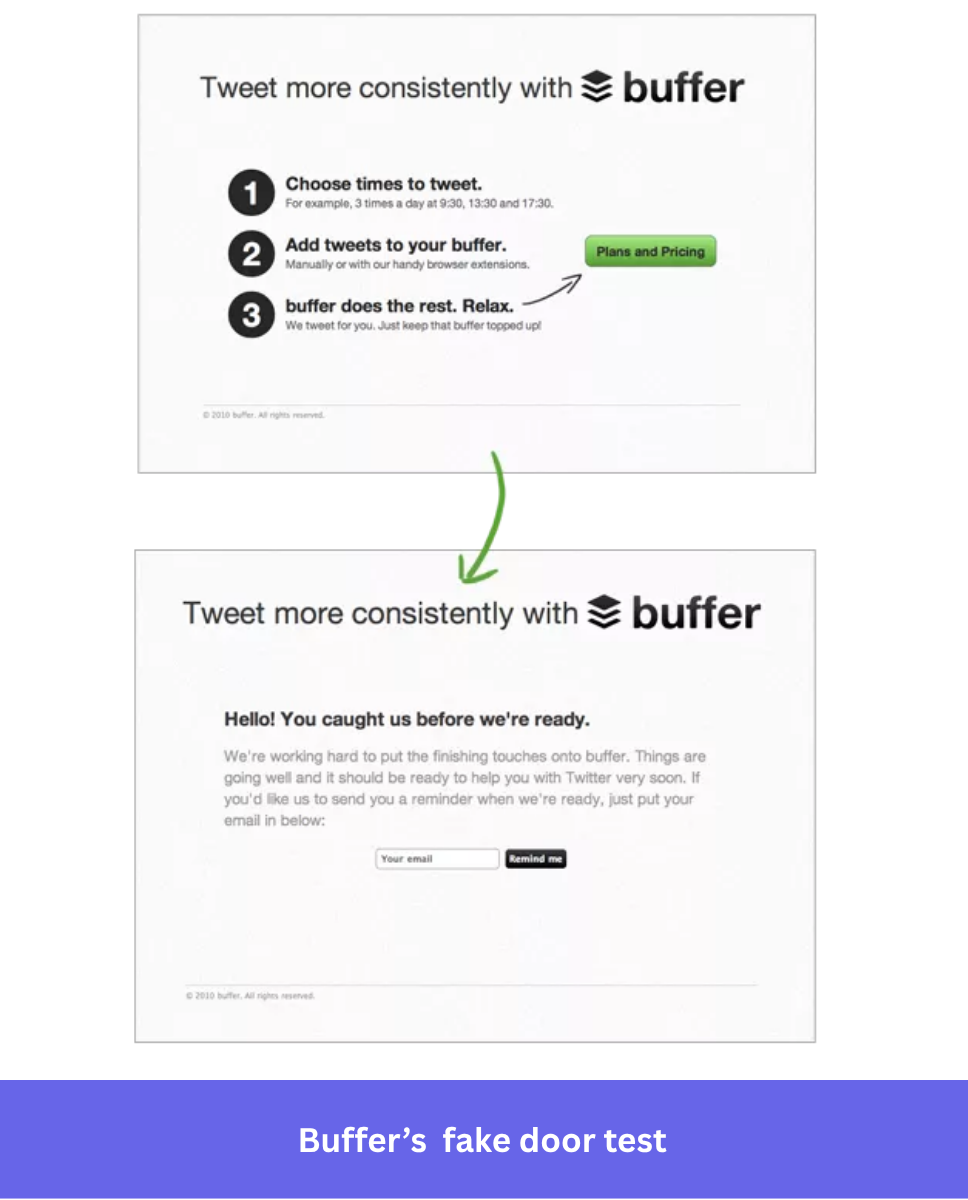

If you would like to see how it works in practice, have a look at the example of Buffer, a social media management tool.

Buffer created a landing page with the details of the product and a ‘Plans and Pricing’ button. This was to assess the initial interest of potential users.

Clicking the button, however, took the users to another page that informed them the product was, in fact, still in development.

There was also an option to sign up for email updates on the page. Doing so would indicate interest in the product.

After introducing this two-step test, the product team was able to validate the product idea and initially only had to invest in those two pages.

Finding your pricing sweet spot

If enough users are ready to commit, fake door tests can also determine what price they are willing to pay, and whether there’s real market demand at that price point.

Buffer did exactly that.

As a second step to the original test, they offered users the option of choosing the pricing plan that was right for them.

Testing a new feature before you build it

Use fake door testing for upcoming features to test your assumptions and avoid building something that’s not worth developing.

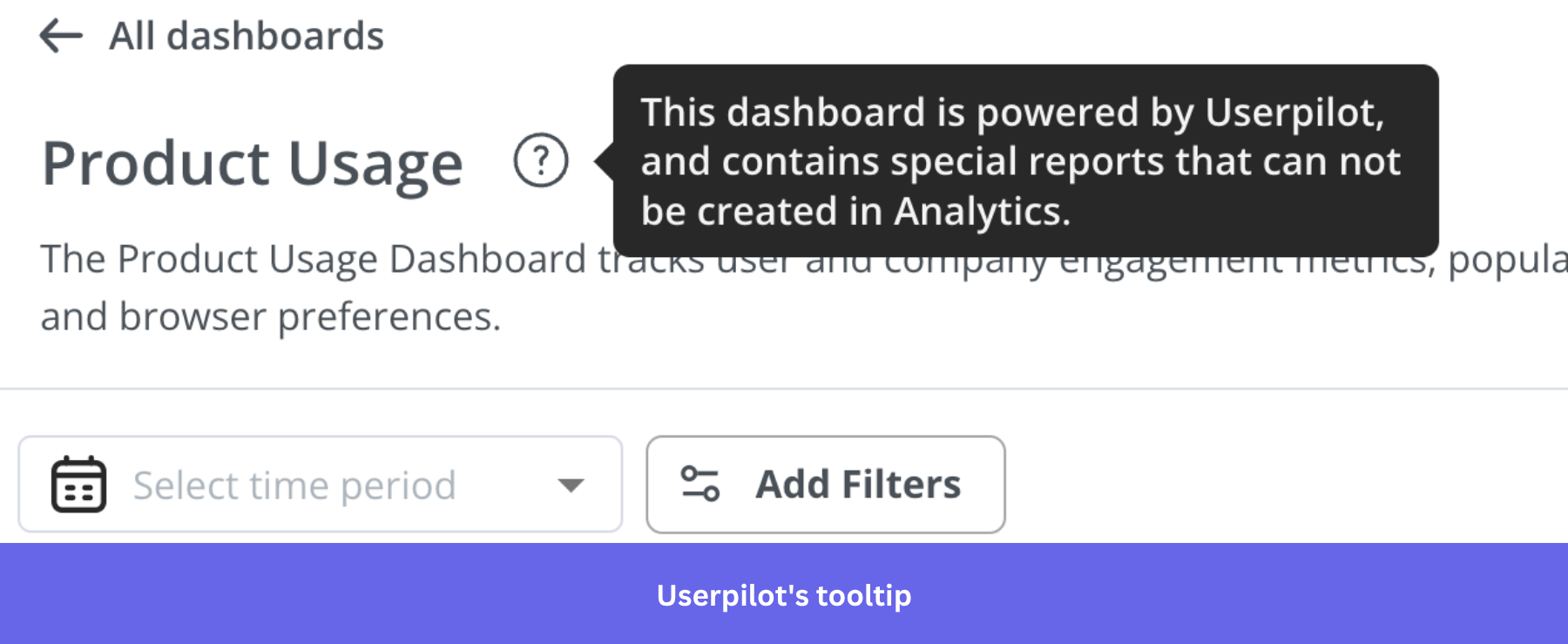

One way of doing it is with an in-app experience, for example, a small tooltip just like the one below.

You would track how many users click on the button to estimate the demand for the potential new feature and assess whether it is worth building.

Building a beta tester list

Fake door tests are also a low-cost way to identify early adopters and offer them early access once the feature is ready.

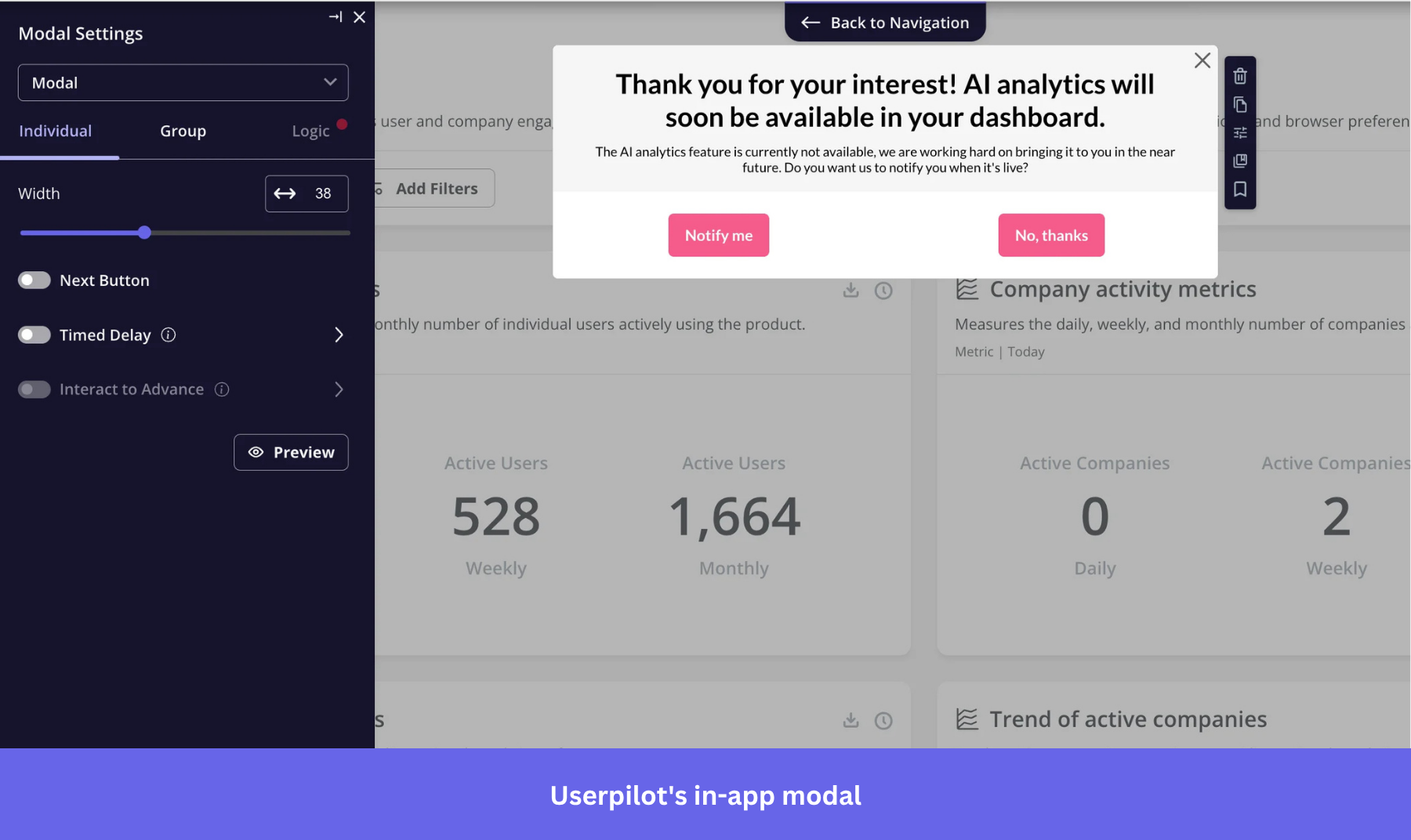

When a user clicks on a fake door, that click is your signal. Instead of just showing them a “coming soon” message, use an in-app modal to invite them to join your beta testing list. They’ve already shown interest. Make use of it.

Once users sign up, keep them updated. If the test fails and you decide not to build the feature, follow up and tell them. It’s a small thing that protects your credibility.

When should you NOT use fake door testing?

Fake door testing is not a universal tool. There are situations where it can backfire badly.

- Skip it if you’re in a safety-critical industry like healthcare, finance, or security. If users form a false impression that a capability exists, the consequences go beyond a bad review. You’re looking at real harm and regulatory risk.

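- Don’t use it for live checkout or upgrade flows inside your product. Implying that a paid offer exists when it doesn’t is misleading. Regulators take that seriously, and so will your users. If you test pricing at all, do it on a clearly pre-launch page that tells users right away that nothing can be bought yet.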

- Avoid it for complex, specific features that require multi-step workflows. A single call to action can’t represent a workflow that takes ten steps to complete. Users who interact won’t have a real picture of what they’re signing up for, so the data you collect on user behavior won’t mean what you think it means.

- Be careful in the early stages of a product where trust is everything. One poorly handled fake door test can damage the relationship before it’s built. You don’t want to risk it if transparency is your core asset.

- And watch out for curiosity clicks. If your test isn’t tightly targeted, you’ll get noise. People click things out of habit. Pair your click data with a short post-click survey or focus groups to learn why they clicked. That’s how you measure interest accurately and turn clicks into insight.
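One rough way to combine click data with a post-click survey is to discount the raw click rate by the share of surveyed clickers who report real intent. The sketch below assumes a one-question survey with hypothetical answer labels (`need_it`, `curious`, `accident`) and assumes the people who answered are representative of everyone who clicked, which may not hold in practice.

```python
from collections import Counter


def intent_adjusted_rate(impressions, clicks, survey_answers, intent_label="need_it"):
    """Scale the raw click rate by the share of surveyed clickers who reported real intent.

    Returns None when there is not enough data to estimate anything.
    """
    if impressions <= 0 or not survey_answers:
        return None
    counts = Counter(survey_answers)
    intent_share = counts[intent_label] / len(survey_answers)
    return (clicks / impressions) * intent_share


# Hypothetical test: 1,000 users saw the fake door, 100 clicked, 20 answered the survey.
answers = ["need_it"] * 12 + ["curious"] * 6 + ["accident"] * 2
adjusted = intent_adjusted_rate(impressions=1000, clicks=100, survey_answers=answers)
print(f"raw click rate: {100 / 1000:.1%}, intent-adjusted: {adjusted:.1%}")
```

With a small number of survey responses the adjustment itself is noisy, so it is better read as a sanity check on the raw click rate than as a precise estimate.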

If you can’t make the experience unambiguously honest at the moment of interaction, use usability testing, a beta waitlist, or a concept test instead.

What are the risks of fake door testing (and how to avoid them)?

As discussed above, running painted-door tests carries some risks. The word ‘fake’ is the clue here. Customers could feel cheated and form negative associations with your product, damaging your brand reputation.

To minimize risks and avoid falling short of user expectations, consider the following:

- Always apologize for potential disappointment and distress.

- Reassure them that you are really working on the feature (or seriously considering it).

- Thank them for their time.

- Explain the reasons for your actions.

- Use the moment to ask for their feedback.

- Invite them to take part in the beta testing once the product or feature is ready.

- Stress the value that the new development could add for the users.

- Carefully choose the size of the testing cohort to limit the potential damage (while still getting enough data).
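For the last point, a standard back-of-the-envelope formula for estimating a proportion gives a feel for how many users need to see the fake door. The sketch below uses the normal approximation n = z² · p(1 − p) / e², where p is the click rate you expect and e is the margin of error you can tolerate. The function name and default values are assumptions for illustration, and the formula assumes the cohort is a simple random sample of your target audience.

```python
import math
from statistics import NormalDist


def cohort_size(expected_rate=0.5, margin_of_error=0.05, confidence=0.95):
    """Users to expose so the measured click rate lands within +/- margin_of_error.

    Uses the normal approximation for a proportion. expected_rate=0.5 is the
    most conservative guess because it maximizes p * (1 - p).
    """
    if not 0 < expected_rate < 1:
        raise ValueError("expected_rate must be between 0 and 1")
    if not 0 < margin_of_error < 1:
        raise ValueError("margin_of_error must be between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    # Two-sided critical value, roughly 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n = (z ** 2) * expected_rate * (1 - expected_rate) / margin_of_error ** 2
    return math.ceil(n)


print(cohort_size())                                         # conservative default
print(cohort_size(expected_rate=0.1, margin_of_error=0.02))  # tighter estimate of a low rate
```

The result is the number of users who see the fake door, not the number who click. If that number is larger than the audience you are willing to expose, widening the margin of error is usually a safer trade than showing the test to everyone.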

How do you make a fake door test?

Combined with paid ads, fake door tests let you not only validate your product idea but also pitch it to potential customers and expand your user base.

The process consists of 4 key steps:

- Create a landing page to introduce the feature or product.

- Use paid advertisements to drive traffic to the landing page.

- Create another page explaining to users that the feature is still in development.

- Track the user behavior and collect data to see how many people visited your website and how many followed the call-to-action.

How to run an in-app fake door test?

We’ve looked at the four steps for running fake door tests with landing pages, as Buffer did.

If you’re looking to run fake door tests in-app, you need to follow these five steps:

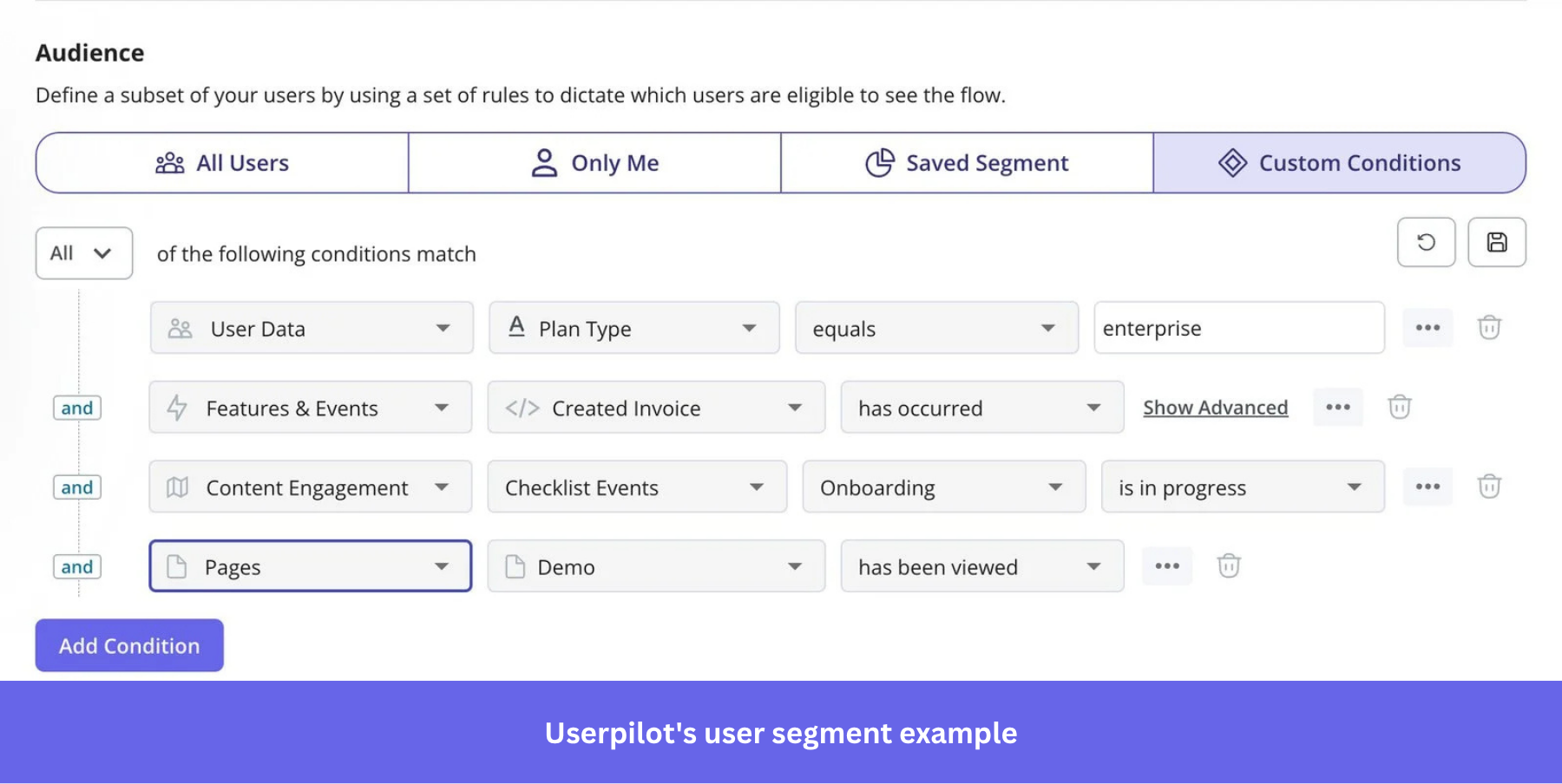

- Segment your target audience using product usage analytics.

- Decide on which in-app page your test should trigger.

- Create the test content (using a modal, a tooltip, or another UI pattern).

- Build the follow-up message and experience.

- Measure the success of your painted-door tests.

1. Segment your target audience using product usage analytics

If you want to test a potential new feature, make the test as relevant as possible. This means selecting the right test audience.

Let’s look at an example.

Say your product is a backlink-building tool for building partnerships and tracking link-building activities. You want to launch an advanced feature that will allow users to build new partnerships by connecting with other partners in the network, not just by adding their own contacts.

In this situation, you’d want to see if the current users who are already using the partnership feature would be interested in this additional product functionality.
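In code, that kind of segmentation boils down to a filter over usage data. The sketch below is only an illustration: the `User` fields, the 30-day window, and the threshold of three actions are all assumptions, and a real product analytics tool would expose its own way of defining segments.

```python
from dataclasses import dataclass


@dataclass
class User:
    user_id: str
    partnership_actions_last_30d: int  # hypothetical usage counter
    plan: str


def select_test_segment(users, min_actions=3):
    """Keep users who actively used the partnership feature recently."""
    return [u for u in users if u.partnership_actions_last_30d >= min_actions]


# Hypothetical user base.
users = [
    User("u1", partnership_actions_last_30d=12, plan="pro"),
    User("u2", partnership_actions_last_30d=0, plan="pro"),
    User("u3", partnership_actions_last_30d=4, plan="free"),
    User("u4", partnership_actions_last_30d=1, plan="free"),
]

segment = select_test_segment(users)
print([u.user_id for u in segment])
```

Users who never touch the partnership feature are left out on purpose: their clicks would tell you little about demand for an extension of it.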

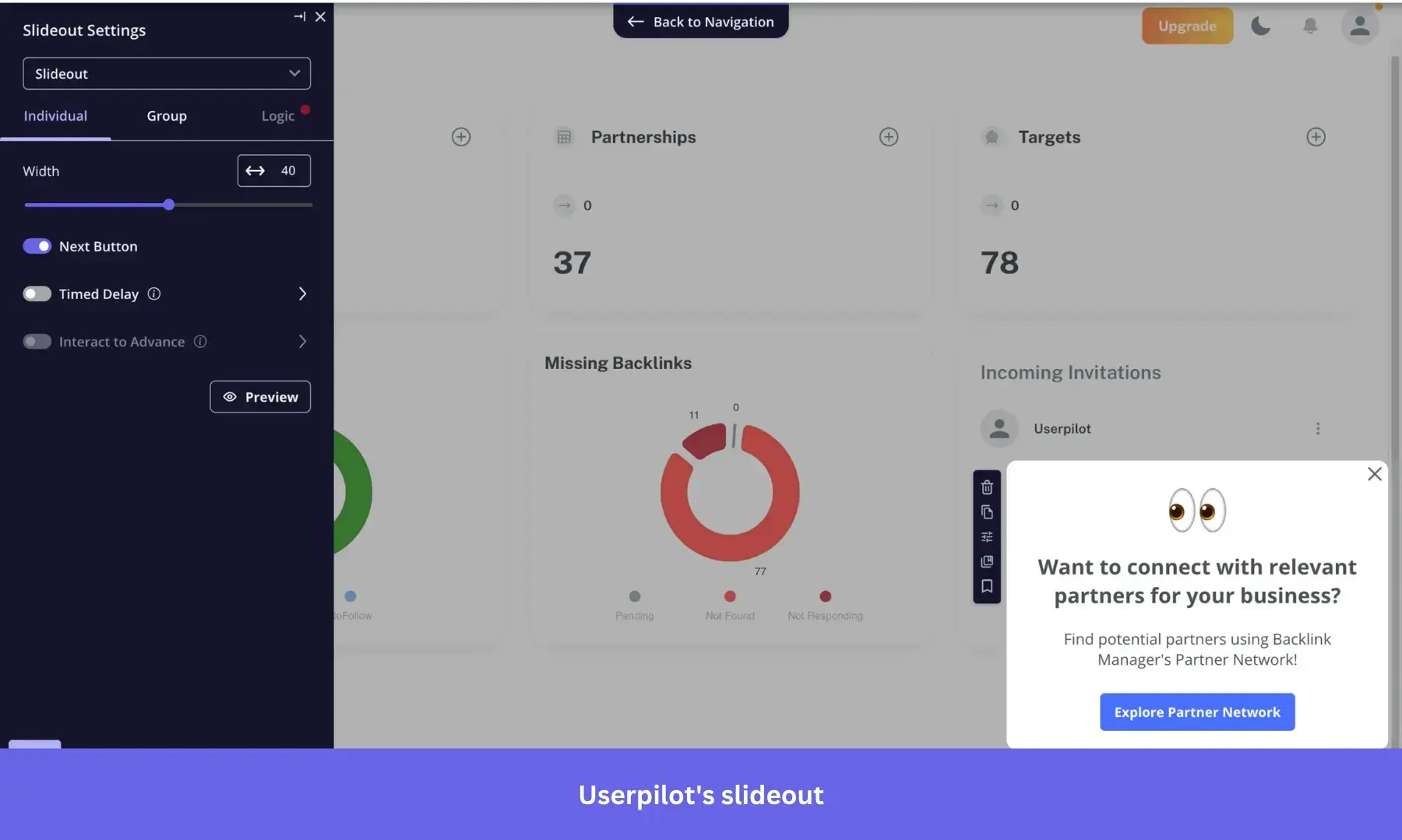

2. Decide on which in-app page your fake door test should trigger

Next, you need to figure out where in your app to trigger the test and when.

You could use a tooltip like in the example above, or perhaps consider adding a slideout when the user navigates to the Partnership page. The key is to be contextual and show your message where and when it’s relevant for the user.

This will look something like this.
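Expressed as logic, a contextual trigger is a small rule: show the fake door only on the relevant page, only to users in the test segment, and only once per user. The sketch below assumes a hypothetical `FakeDoorTrigger` class and page name; in-app messaging tools typically let you configure the same conditions without writing code.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDoorTrigger:
    page: str                      # page where the fake door is relevant
    segment_ids: set               # users selected for the test
    already_shown: set = field(default_factory=set)

    def should_show(self, user_id: str, current_page: str) -> bool:
        """True only for segment members on the target page who haven't seen it yet."""
        return (
            current_page == self.page
            and user_id in self.segment_ids
            and user_id not in self.already_shown
        )

    def mark_shown(self, user_id: str) -> None:
        """Record the exposure so the same user isn't prompted again."""
        self.already_shown.add(user_id)


trigger = FakeDoorTrigger(page="partnerships", segment_ids={"u1", "u3"})

if trigger.should_show("u1", current_page="partnerships"):
    trigger.mark_shown("u1")  # the app would render the tooltip or slideout here

print(trigger.should_show("u1", "partnerships"))  # already shown once
print(trigger.should_show("u3", "dashboard"))     # wrong page
```

Showing the message once per user keeps exposure counts clean for the click-rate calculation and limits how many times anyone runs into a feature that isn’t there.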

3. Create the fake door test content

This bit could make or break the test.

First, you need to convince the user to engage with the message. To do that, you could indicate what pain points it solves for the user and how it adds value.

Adding screenshots, wireframes, or a product demo video can drive more engagement.

Once the users engage and realize that the product is not yet ready, you must always explain why this is happening and how you are working on it.

This will help you avoid user frustration and disappointment, and perhaps even create a sense of anticipation.

At this stage, you can present a possible roadmap, let them register for updates, or take part in beta testing.

4. Build the follow-up

Following up with the users who have engaged with the feature, signed up for the updates, or volunteered for beta testing is essential.

Keep them in the loop about how the work is going and, most importantly, deliver on your promises.

Otherwise, your credibility will suffer badly.

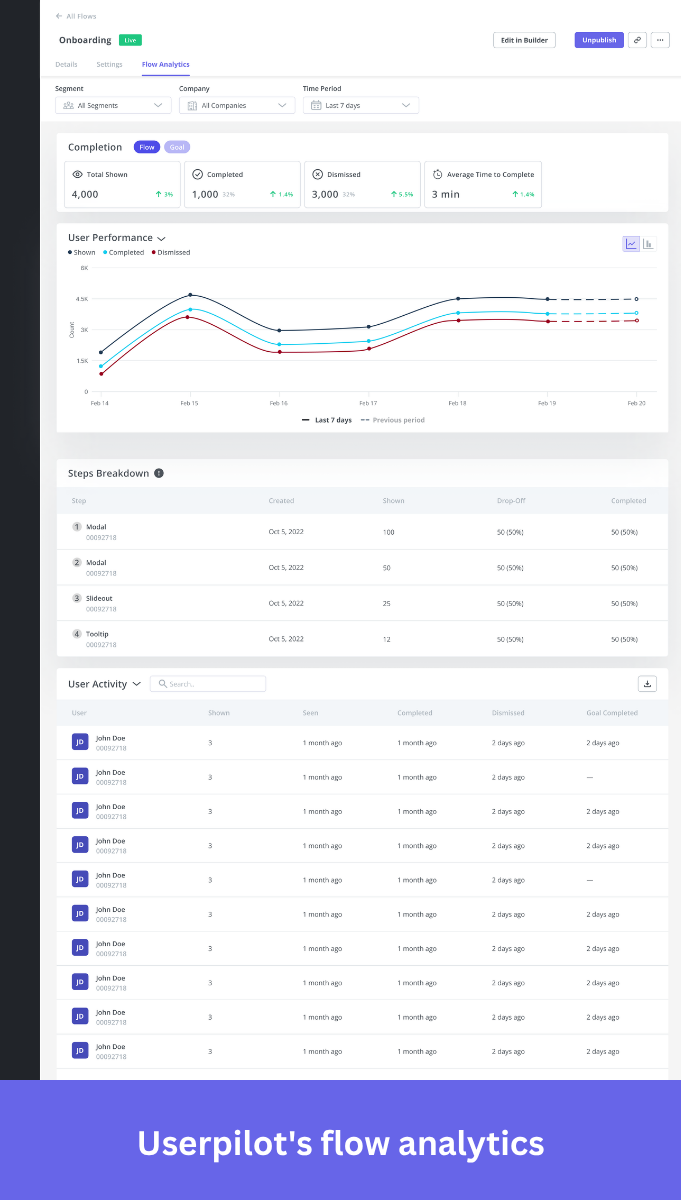

5. Measure the success of your fake door testing

The final step is essential because it enables you to make an informed decision on whether the feature is worth building.

Define what you are looking for before starting the experiment, so that you can easily determine whether the test was a success.

Things to track include how many users clicked on your tooltip or modal, and how many of those signed up for a beta test.
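A minimal sketch of that measurement, assuming your analytics export is a list of `(user_id, event)` pairs with the hypothetical event names `exposed`, `clicked`, and `signed_up`. The thresholds are placeholders for whatever success criteria you set before the test; they are not recommended benchmarks.

```python
def funnel_counts(events):
    """Count unique users at each stage so repeat clicks aren't double-counted."""
    stages = {"exposed": set(), "clicked": set(), "signed_up": set()}
    for user_id, event in events:
        if event in stages:
            stages[event].add(user_id)
    return {stage: len(user_ids) for stage, user_ids in stages.items()}


def evaluate(counts, min_click_rate, min_signup_rate):
    """Compare measured rates with thresholds chosen before the test started."""
    exposed, clicked = counts["exposed"], counts["clicked"]
    click_rate = clicked / exposed if exposed else 0.0
    signup_rate = counts["signed_up"] / clicked if clicked else 0.0
    return {
        "click_rate": click_rate,
        "signup_rate": signup_rate,
        "passed": click_rate >= min_click_rate and signup_rate >= min_signup_rate,
    }


# Hypothetical event log; u1 clicked twice but counts once.
events = [
    ("u1", "exposed"), ("u2", "exposed"), ("u3", "exposed"),
    ("u4", "exposed"), ("u5", "exposed"),
    ("u1", "clicked"), ("u1", "clicked"), ("u2", "clicked"),
    ("u1", "signed_up"),
]

counts = funnel_counts(events)
result = evaluate(counts, min_click_rate=0.10, min_signup_rate=0.25)
print(counts)
print(result)
```

Keeping the thresholds as explicit arguments makes it harder to move the goalposts after the results come in.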

Also, look at any feedback you may receive, both positive and negative (but prioritize the data on actual user behavior).