Product strategy in 2026: building for humans today and AI agents tomorrow

How we create a product strategy in 2026 is changing rapidly. I don’t want to sound clichéd (and this post definitely isn’t, promise!), but AI has knocked over the table stakes, and two factors are now rapidly reshaping how we should think about product strategy altogether.

The first factor is on the shipping side. AI is writing a serious share of production code now, and the gap between “we should build that” and “it’s live” is closing faster than ever.

Our CEO Yazan Sehwail put the math bluntly: instead of releasing one or two features per quarter, teams are now releasing seven, eight, nine. Every one of those features is something a user has to discover, learn, and decide to keep using. User attention has not 7×’d to match.

The second factor is on the user side, and it’s the one most product strategy docs aren’t addressing yet. A huge share of your future users won’t be humans clicking through your UI. They’ll be agents calling your API on a human’s behalf, and they don’t onboard, they don’t read tooltips, and they don’t generate the click-stream events your analytics tool was built around.

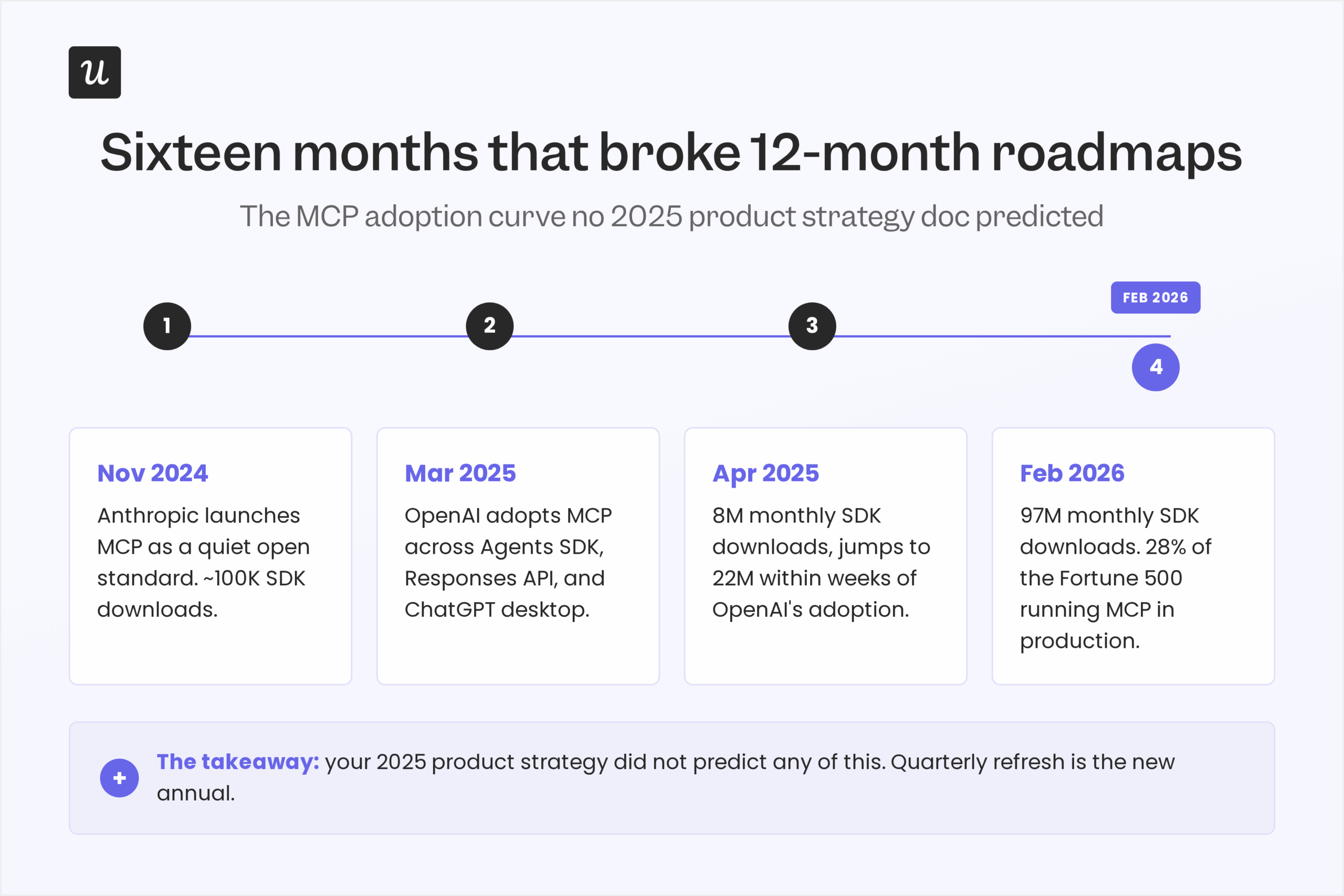

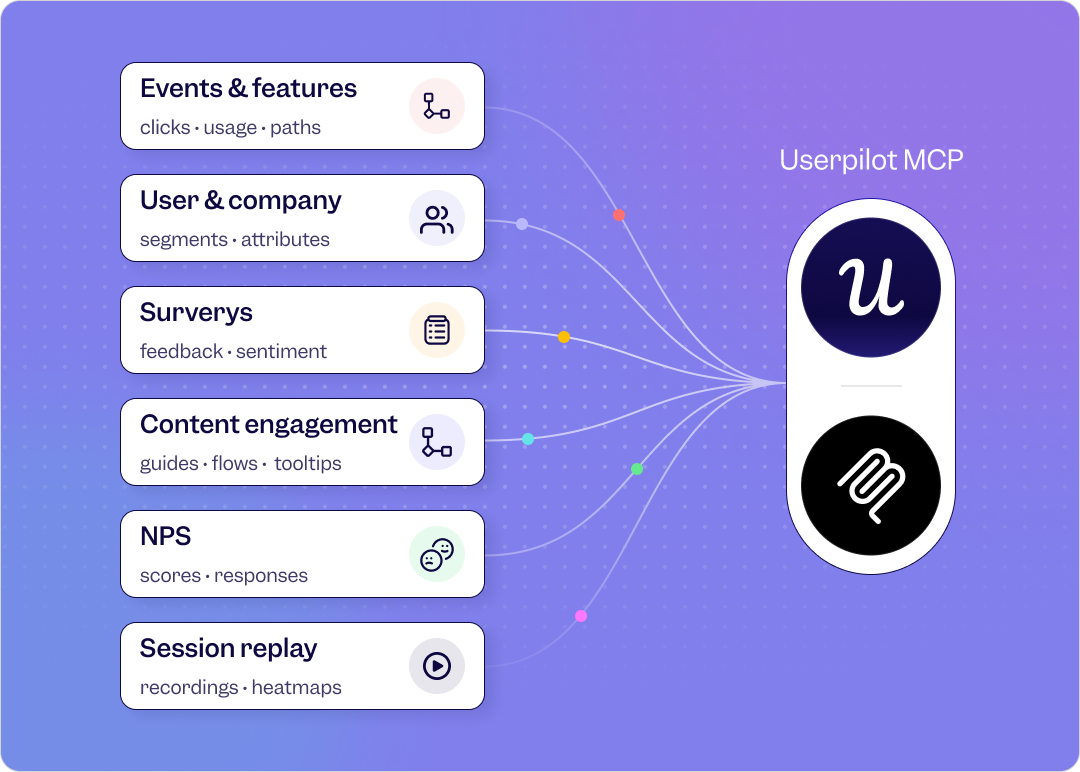

The Model Context Protocol (MCP) went from an Anthropic launch in November 2024 to 97 million monthly SDK downloads sixteen months later. About 28% of the Fortune 500 had MCP in production by Q1 2026.

Both factors bring us to the same conclusion: the product strategy you wrote in January 2026 has, at best, six months of shelf life, and your next one has to plan for two completely different user classes in parallel.

This is something we desperately need to address in 2026, and it’s what most articles written even six months ago are missing:

- We’ll reframe the fundamentals (strategy vs roadmap vs backlog) around what AI changed about each

- We’ll dissect exactly why the 12-month strategy doc is dead, and offer a quarterly-refresh model instead

- We’ll discuss the alternative, the two-stream model: build for humans today and agents tomorrow, in parallel, not in sequence

- We’ll dive into concrete tips on AI feature prioritization, drawing on the worst patterns showing up in product manager Slack channels right now

Product strategy in 2026, summary:

For those of you who don’t have the time to read (or whose agents do the reading for you), here’s a quick summary of this post.

The new definition of product strategy

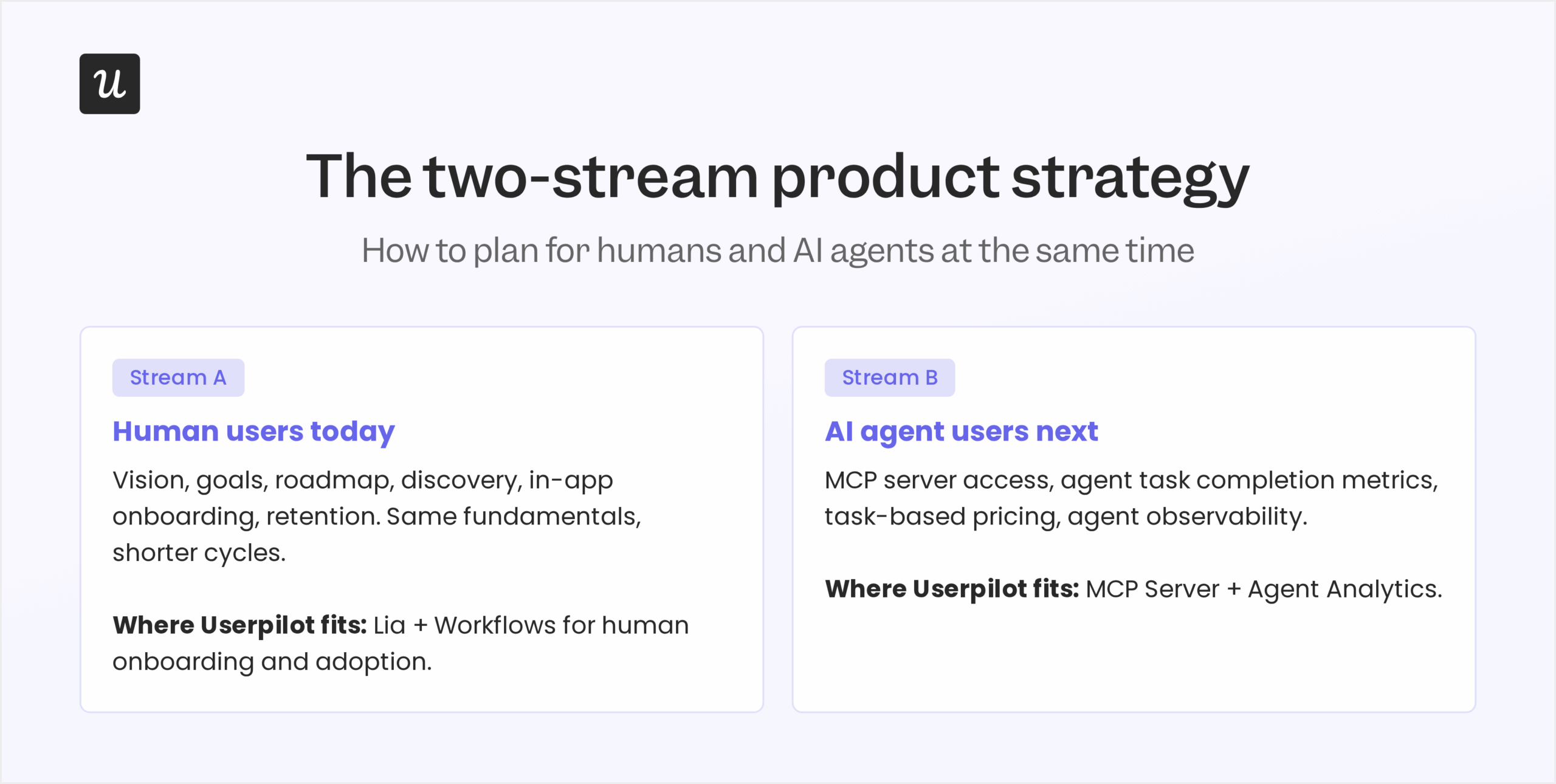

- Product strategy is now a two-stream plan: one stream for human users today, one for AI agent users next, refreshed quarterly instead of annually.

- Strategy, roadmap, and backlog all got shorter and more flexible. The 12-month plan is dead.

- Engineering velocity has 7×’d, but user adoption bandwidth has not, so discovery matters more, not less.

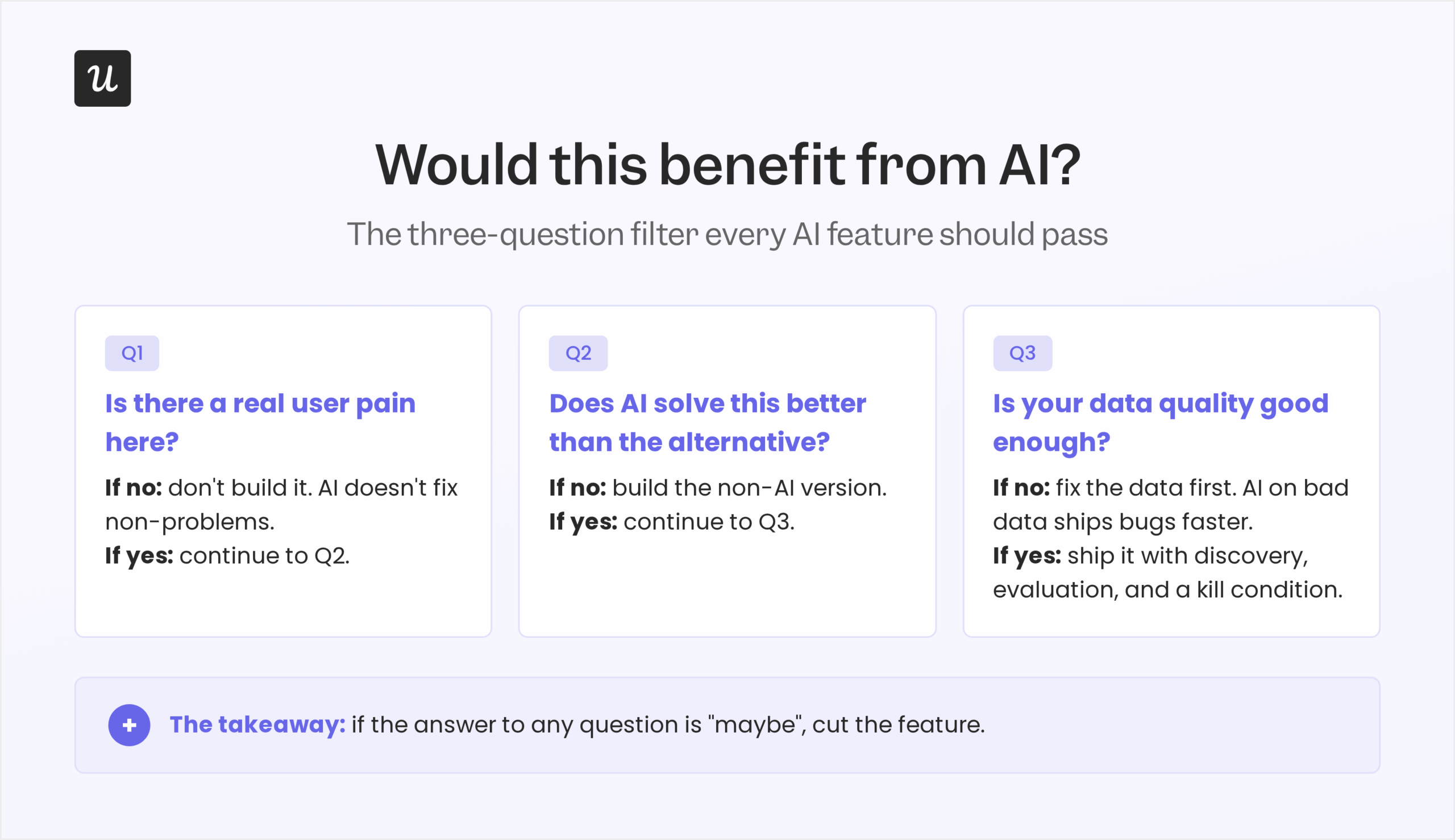

- Marty Cagan’s Value / Viability / Usability / Feasibility lens still applies. “Would this benefit from AI?” is the entry question. Slapping AI on top of an existing feature fails the filter.

Your product strategy for each stream

Stream A: human users today

- Same fundamentals (vision, goals, roadmap, prioritization), shorter cycles.

- Discovery survives the AI hype filter, then ships.

- Onboarding and adoption infrastructure matters more, not less, because shipping velocity outruns user attention.

Stream B: AI agent users next

- Build an MCP server. It’s the access protocol for agent users.

- Measure agent task completion, not click-stream events.

- Pricing and packaging assume task-based, not seat-based, consumption.

Readiness checklist: is your product strategy ready for 2026?

- How long is your current product strategy doc? If it covers more than two quarters, it’s already stale.

- Does it acknowledge agent users? If “agents” doesn’t appear in the doc at all, you’re behind.

- Have you stress-tested every AI feature against the “would this benefit from AI?” filter? If the answer to any feature is “maybe”, cut it.

- Can your roadmap absorb a major MCP-level shift in three months without a full rewrite? If not, the structure is too rigid.

- Does the doc name the key metrics you’ll use to measure success this quarter? If you can’t list three measurable, time-bound outcomes, the strategy is a wishlist, not a strategy.

Product strategy in 2026: what’s changed and what hasn’t

The textbook definition of product strategy still works. A product strategy is the high-level plan that defines product goals, ties them to broader business goals, and lays out how to support them across the product lifecycle. It’s informed by product vision, and it specifies who the target customers are, what unique value proposition the product offers them, and how it will serve them over time. The product leader owns it, in collaboration with senior leadership and cross-functional teams (marketing, sales, customer success) who provide bottom-up input, secure stakeholder buy-in, and execute against it.

What’s actually changed is everything around that definition: the scope, the time horizon, the user set, and the speed of every feedback loop the strategy depends on.

The scope doubled. Two years ago, a product strategy assumed one user class: humans. In 2026, a meaningful share of accounts use your product partly through humans and partly through AI agents, often the same account on the same day. Treating them as one user class with one set of needs produces a strategy that serves neither well.

The time horizon collapsed. The classic 12-month product strategy was built on the assumption that the underlying technology was stable enough to plan a year out. Sixteen months of MCP adoption proved that assumption wrong. Quarterly refresh is the new annual.

The feedback loops accelerated. Engineering velocity went up. Discovery, in-app messaging, and outcome measurement all got faster too. The bottleneck shifted from “can we build it?” to “can we figure out what’s worth building, fast enough to keep up with the build pipeline?”

And one thing that explicitly hasn’t changed: discovery. Marty Cagan, founder of the Silicon Valley Product Group, has been arguing for a decade that good product teams solve hard problems in ways customers love and the business can sustain. That’s still the job. AI didn’t repeal that rule. AI made the rule more expensive to break, because shipping the wrong thing now takes a fraction of the time it used to.

Strategy vs roadmap vs backlog: what AI changed about each

Inside any well-defined product strategy, the classic distinction between strategy, product roadmap, and product backlog is genuinely useful, and AI didn’t break it. What AI did was shorten all three time horizons.

Product strategy is the overarching plan. It outlines product goals and the bet on where the product is going, but stays light on how to deliver them. What changed: in 2026, the strategy now has to name two user classes, not one, and the planning horizon dropped from twelve months to one or two quarters.

Product roadmap details how the strategy gets implemented. It covers high-level milestones, themes, and major features. What changed: roadmaps now include explicit “we don’t know yet” placeholders for upcoming AI and MCP-level shifts, instead of pretending the technology is stable enough to commit to a Q4 release in Q1. Public roadmaps got vaguer for a reason.

Product backlog is the prioritized list of work the team is actively executing on, typically managed via a feature prioritization matrix. What changed: the backlog turns over faster, partly because engineering ships faster, and partly because every new MCP server, model release, or agent capability creates new “should we add this?” entries that cut the line.

If you take only one thing from this section, take this: the further out you commit to specifics, the more likely you are to be wrong. Hold strategy loose at the quarter level. Commit hard at the sprint level. Resist the urge to add a Q4 deadline to a feature that depends on a model release that hasn’t happened yet.

The 12-month strategy is dead. Quarterly is the new annual.

Ask anyone who wrote a 2025 roadmap last January how much of it survived contact with the year. The honest answer, for most product teams, is “less than half.”

The proof point everyone’s quoting now is the MCP timeline. Anthropic launched the protocol as a quiet open standard in November 2024 with around 100,000 SDK downloads. By April 2025, that number had crossed 8 million. After OpenAI announced support across the Agents SDK and ChatGPT desktop in March 2025, monthly downloads jumped from roughly 8 million to 22 million inside weeks. By February 2026, MCP was sitting at around 97 million monthly SDK downloads, with about 28% of the Fortune 500 running MCP servers in production. Gartner now expects 40% of enterprise applications to ship task-specific AI agents by the end of 2026, up from less than 5% twelve months earlier.

No 12-month strategy doc written in early 2025 anticipated that MCP would go from “experimental Anthropic standard” to “the integration layer 28% of the Fortune 500 deploys” inside one calendar year. Most strategy docs from 2025 don’t mention MCP at all.

This is what the new cadence looks like in practice:

- Quarterly strategy refresh. Every twelve weeks, sit with the team and ask: what’s changed in the underlying tech, what new user classes have appeared (agent users, embedded model providers, new API consumers), and what assumptions in our last strategy doc are now wrong?

- Themed roadmap, not dated roadmap. Talk in quarters, not months. Themes hold up. Specific dates evaporate.

- Backlog that absorbs hot drops. Build a “wedge” slot into every sprint for the new MCP, new model, or new integration that wasn’t on anyone’s roadmap last week.

- Documented assumptions. Every strategy doc names the three or four bets and strategic initiatives it’s anchoring on, with the underlying tech assumptions made explicit. When one of those assumptions breaks, the strategy gets reopened, not patched.

If your team can’t refresh a strategy doc in a single working day every quarter, the doc is too long.

Linear, the project tooling company, has been a public proof point for this cadence. Their team has been running on six-week shipping cycles for years and treats the roadmap as a live document, not an annual artifact. Linear isn’t doing anything magical. They’re refusing to plan further out than they can see, and that discipline has been rewarded by every model release and MCP-level shift since.

Stream A: building for human users today

The Stream A part of product strategy looks a lot like 2024 product strategy. Vision, goals, prioritization, customer research, in-app onboarding, retention, monetization. The fundamentals didn’t change. The pace did, and that has second-order effects on every other discipline.

Three things are louder now in the human-stream conversation than they were two years ago.

1. Discovery matters more, not less

This is the single clearest piece of feedback from product manager forums right now, and it’s the part most “AI strategy” decks miss. When a product leader at a small startup asked their leadership team for an AI strategy on r/ProductManagement recently, the most upvoted reply read: “Hope is not a strategy.” A teaching PM in the same thread, with twenty years in the role, was sharper: “Many of these organizations didn’t think about their data strategy even though data is the heart and soul of AI. Many didn’t think about their monetization. Many didn’t think about their product positioning.”

The pattern showing up in those threads, repeatedly: leadership decides AI is the answer before anyone has named the question, and then product managers are asked to back-fill a strategy that justifies the decision.

The good ones push back.

The single best filter we’ve seen, popularized by a senior PM in the same Reddit discussion and consistent with Marty Cagan’s Value / Viability / Usability / Feasibility lens, is the question: “Would this benefit from AI?” If the honest answer is “maybe” or “we should because the CEO wants it”, the answer for the strategy is no.

Lenny Rachitsky has been making the same point from a different angle: a company’s AI strategy needs to be a strategy through and through, not a piece of technology bolted onto an existing product so the company can claim it’s also riding the wave.

This is also where the older discovery techniques earn their seat back at the table: market research, competitive analysis, the 5C framework (Company, Customers, Competitors, Collaborators, Context), usability testing, prototype testing, in-app surveys, and structured feedback collection. They got pushed aside in 2024 in favor of “let’s just ship it and see.” In 2026, the cost of shipping the wrong thing has compressed exactly as fast as the cost of shipping the right thing, and discovery is what tells you which is which. Building a successful product strategy in this environment is an iterative process, not a one-shot artifact, and it moves between research and decision-making every week.

2. Engineering velocity went up. User adoption bandwidth didn’t.

This is the hidden cost of shipping seven, eight, nine features per quarter instead of one or two. A user only has so much capacity to discover, learn, and integrate new behavior. Most product strategies treat shipping velocity as the win condition. In 2026, the win condition is the ratio between what you ship and what users actually adopt.

Yazan, our CEO, made the same point when we sat down: “Now it becomes even harder for product teams to manually have to track each one and understand usage for each one and come up with hypothesis and insights on each one. So you definitely need to automate a lot of this.”

Translated for the Stream A strategy: every new feature launch needs an answer to “how will we know in two weeks if anyone is using this, and what will we do if no one is?” If that answer is “we’ll check the dashboard later”, the feature isn’t ready.
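To make the “ratio between what you ship and what users actually adopt” concrete, here is a minimal sketch. The `FeatureLaunch` record, the 20% adoption threshold, and every number in it are assumptions for illustration, not Userpilot definitions or real data.

```python
from dataclasses import dataclass


@dataclass
class FeatureLaunch:
    name: str
    eligible_users: int  # users who could have used the feature in the two-week window
    adopting_users: int  # users who used it at least once in that window


def adoption_rate(launch: FeatureLaunch) -> float:
    """Share of eligible users who tried the feature (0.0 when nobody was eligible)."""
    if launch.eligible_users == 0:
        return 0.0
    return launch.adopting_users / launch.eligible_users


def adopted_share(launches, threshold=0.2):
    """Share of shipped features whose adoption rate meets the (hypothetical) threshold."""
    if not launches:
        return 0.0
    adopted = [f for f in launches if adoption_rate(f) >= threshold]
    return len(adopted) / len(launches)


# Hypothetical quarter: four launches, made-up numbers.
launches = [
    FeatureLaunch("bulk export", 1000, 320),
    FeatureLaunch("ai summary", 1000, 45),
    FeatureLaunch("email digest", 800, 200),
    FeatureLaunch("dark mode", 1000, 150),
]

print(f"Adopted share of shipped features: {adopted_share(launches):.0%}")
```

In this made-up quarter, four features shipped but only two cleared the bar. That shipped-versus-adopted gap is the number the two-week question is trying to surface.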

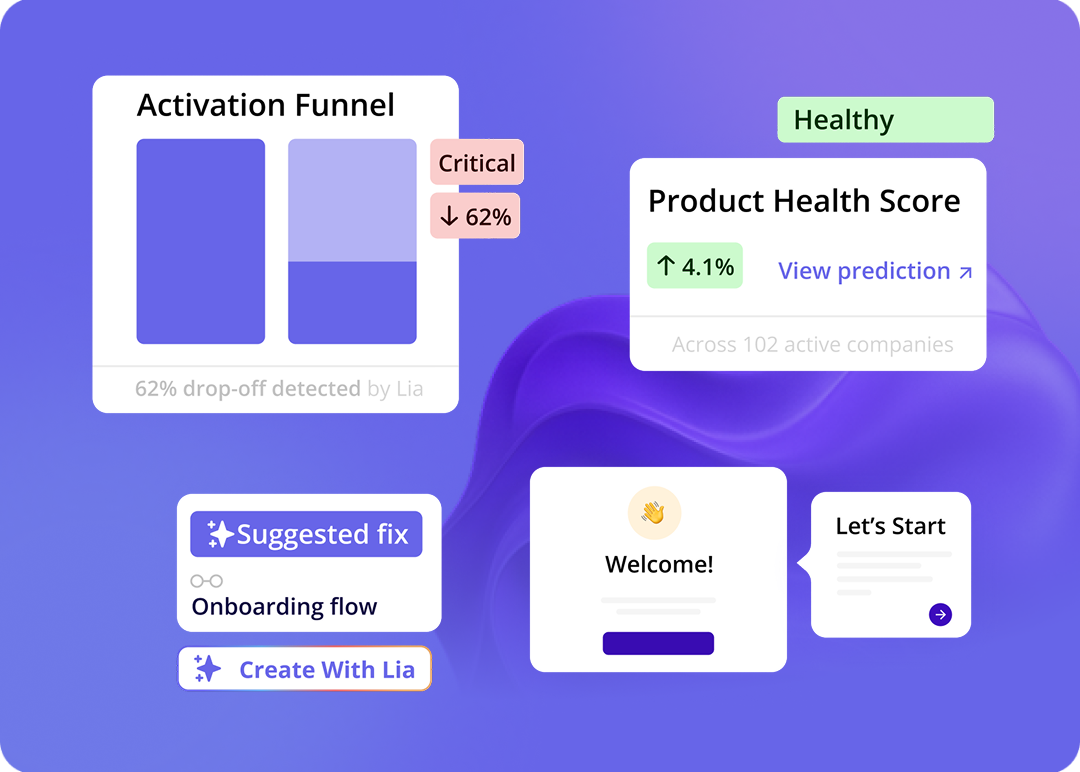

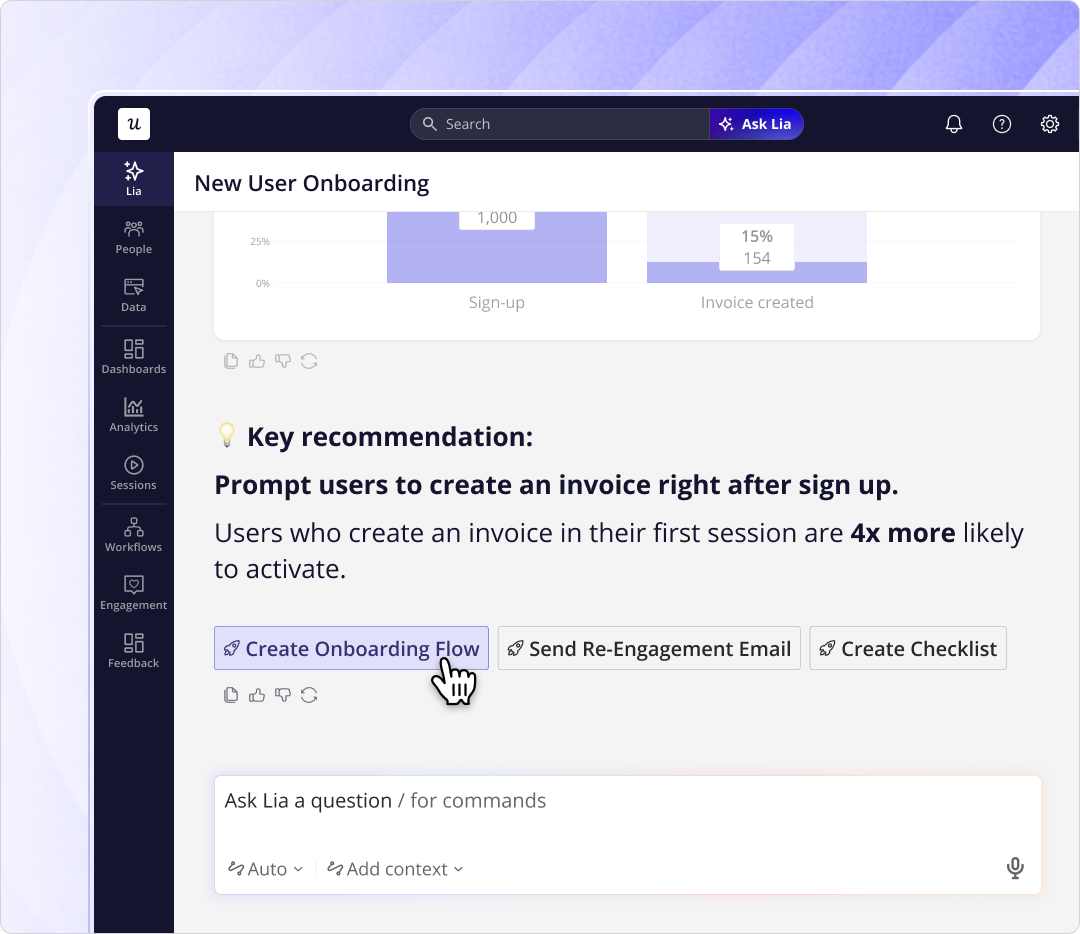

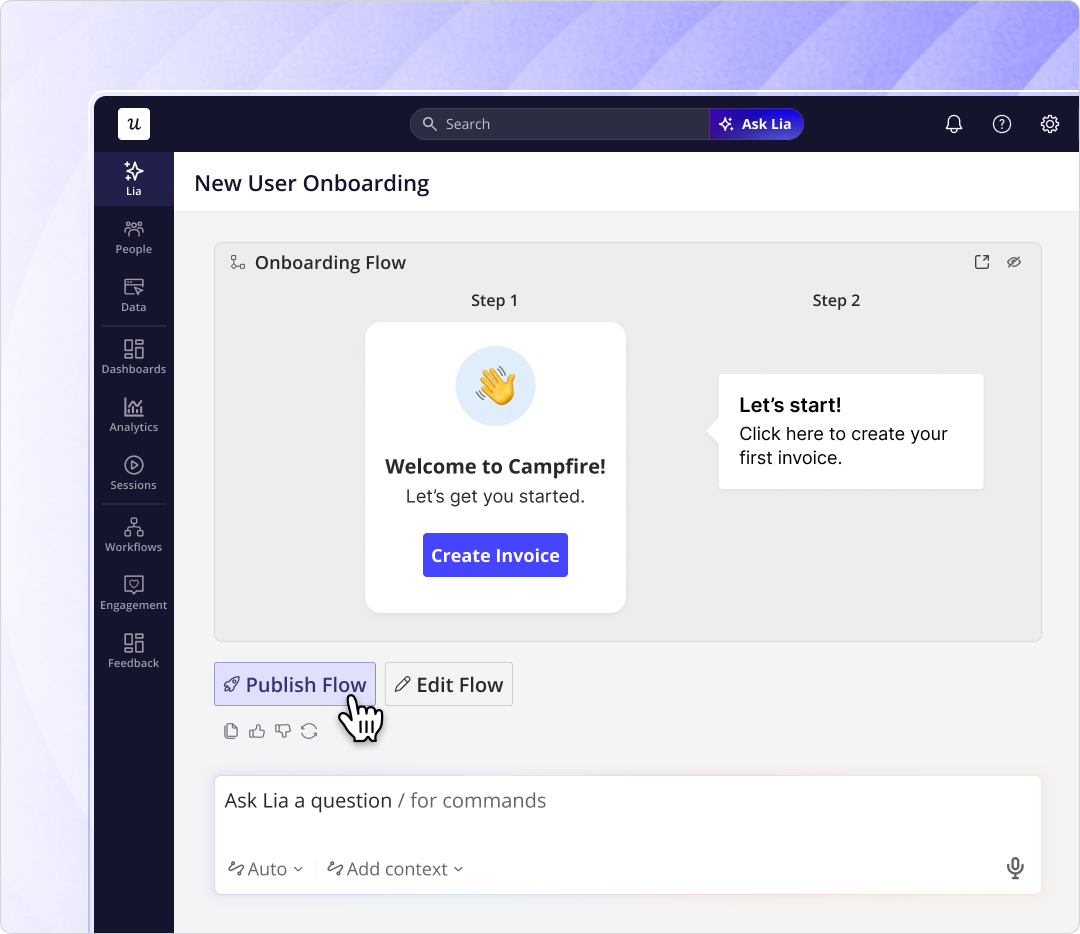

Userpilot’s own AI agent Lia exists to absorb that load. Lia monitors product health, predicts churn, surfaces unusual patterns, and suggests next actions automatically.

This doesn’t mean “Lia replaces the PM.”

Lia handles the operational measurement layer so the PM has time for strategic thinking and discovery.

In the AI era, the PM job moves from operating to monitoring.

3. Onboarding and in-app guidance matter more, not less

If you ship faster, every individual feature gets less ambient attention. The compensating mechanism is in-product guidance, which is exactly the surface area that scales with shipping velocity instead of fighting it. Onboarding stops being something you do once at signup. It becomes a continuous re-onboarding layer that introduces every new feature, in-context, the first time the user is in a position to need it.

Abrar Abutouq, one of our product managers, has been working through this in real time.

When the email feature shipped, the data showed a sharp drop-off at domain verification, the first step required to activate the feature. Engineering didn’t get a ticket. Abrar built a checklist directly inside Userpilot that walked users through verification in-product. The drop-off closed inside days.

Abrar described the framing he uses with the team for these calls: “Once we release a feature, we need to create a report and track meaningful events to see the usage and the feature health. From there, I look for where the drop-off is happening, in which step users are getting stuck. Sometimes it’s not engineering. Sometimes it’s just the in-app messaging.”

The strategic point: when shipping velocity is high, the difference between a feature that lands and a feature that ships into silence is often a single in-app nudge built in hours, not the next sprint’s engineering ticket.
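The “where is the drop-off happening” check Abrar describes can be sketched as a small funnel calculation. The step names and user counts below are hypothetical stand-ins, not the real data from the email feature launch.

```python
def largest_drop_off(funnel):
    """Find the steepest step-to-step drop in an ordered funnel.

    funnel: list of (step_name, users_reaching_step), in order.
    Returns (from_step, to_step, drop_rate), or None if no drop can be computed.
    """
    worst = None
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        if prev_count == 0:
            continue  # no users reached the previous step, nothing to compare
        drop = 1 - count / prev_count
        if worst is None or drop > worst[2]:
            worst = (prev_name, name, drop)
    return worst


# Hypothetical counts for an activation funnel.
funnel = [
    ("opened email feature", 500),
    ("started domain verification", 400),
    ("completed domain verification", 120),
    ("sent first email", 100),
]

from_step, to_step, drop = largest_drop_off(funnel)
print(f"Biggest drop: {from_step} -> {to_step} ({drop:.0%} lost)")
```

A report like this only tells you where users get stuck. Whether the fix is an engineering ticket or an in-app checklist is still a judgment call.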

Luckily, AI agents like Lia can build onboarding flows for you automatically.

Stream B: building for AI agent users next

This is the part of product strategy that most 2025 docs skipped, and the part that will define which SaaS companies still matter in 2027.

The setup: in addition to the human users you’ve always served, a growing share of your accounts now have AI agents acting on the user’s behalf. By the end of 2026, Gartner expects 40% of enterprise applications to include task-specific AI agents. Major model providers have all standardized around MCP as the integration layer.

An agent behaves nothing like a human user, even when the marketing copy calls them “smart users.”

Agents don’t onboard.

They don’t read tooltips. They don’t generate the click-stream events your product KPIs were built around.

They execute tasks and they move on. Your existing UI and your existing measurement system are largely invisible to them, and the metrics built around human behavior will increasingly misread agent-heavy accounts.

Yazan put the strategic implication plainly when we talked about our MCP server: “We see Userpilot as becoming the infrastructure that powers your product usage data for that sort of system. As teams start deploying their own AI agents, those agents are gonna tap on our existing infrastructure that will be powering all of the usage and all the product data, and that’s extremely powerful.”

Whether or not Userpilot specifically becomes that infrastructure for your stack, the strategic question for every product team in 2026 is the same: what is the access protocol your agent users will use to interact with your product, and how will you measure them once they do?

The answer breaks into three sub-decisions every Stream B strategy now has to make.

1. Where MCP wins, and where vertical agents still win

Yazan made a useful distinction here that we’ve been internalizing across the company: “As a whole, companies are gonna be dependent on foundational models because they’re able to connect all of their tools with it. So the rise of MCP and agent-to-agent communication, that’s a game changer. However, specific teams will continue to use those vertical agents as MCP is getting better.”

Translated into plain English: MCP wins for read-heavy queries that cross many tools.

Vertical agents win for write-heavy workflows that live deep inside one tool.

The product strategy choice (the platform strategy choice, in older language) is whether you compete primarily as a destination workflow vendor (vertical) or as a data layer that powers agent workflows in other tools (MCP).

Most B2B SaaS companies will end up doing both, with different weights on each at different times.

Stream B is also the most under-priced market expansion opportunity in B2B SaaS right now, because most product teams are still building only for human users. Whoever ships agent-native infrastructure first wins.

Notion’s recent repositioning around agent-native workspaces is the public version of this bet.

They’re shipping an MCP surface and a vertical agent stack in the same quarter, on the assumption that the answer for them is “both, with the vertical experience deeper for native Notion workflows and the MCP layer wider for everything else.”

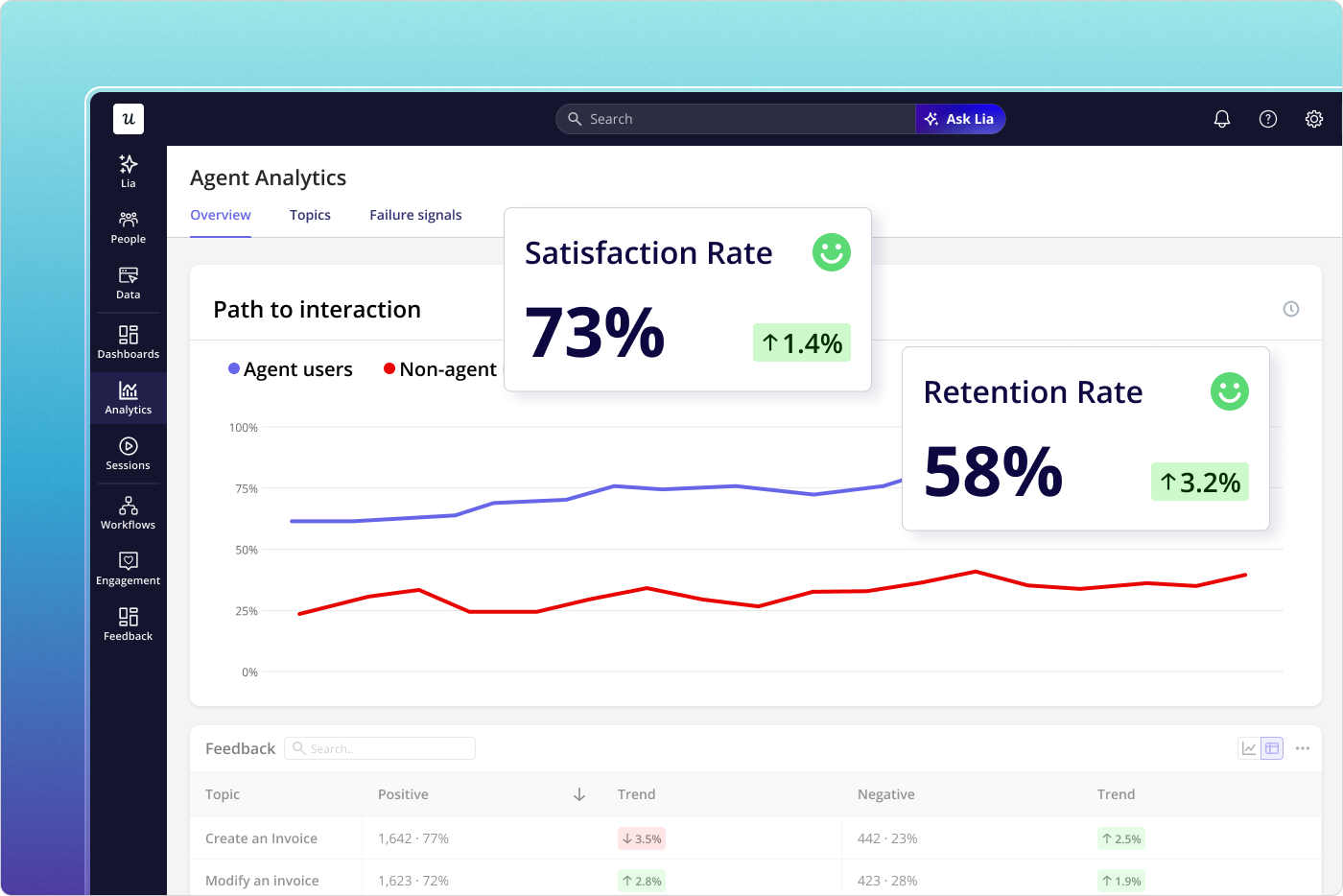

2. The metrics built for human behavior will need to change for agent users

If you’re shipping for agents, the daily-active-users and session-length metrics that have shaped product analytics for fifteen years also need to change.

Agents don’t have sessions. They have tasks. The metrics that matter for the agent stream are different:

- Task completion rate. Of the agent calls into your product, what share completed the intended task?

- Time-to-completion. How quickly did the agent complete the task, end to end?

- Failure mode breakdown. When the agent failed, was it a missing capability, a permissions issue, an authentication issue, or a data-shape mismatch?

- Outcome quality. Of the tasks marked complete, what share actually delivered the human-intended outcome?

This is what Userpilot’s Agent Analytics measures by default:

- agent task usage

- satisfaction rate

- conversation logs

- failure signals

- and outcome quality

All in the same dashboard layer your team already uses for human conversion path analysis and feature usage tracking. If you’re not measuring the four metrics above (task completion rate, time-to-completion, failure mode breakdown, and outcome quality) on your own product yet, that’s the gap to close in this quarter’s strategy.
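If you want to prototype the four agent-stream metrics before committing to a tool, a rough sketch could look like the one below. The `AgentTask` record and its field names are our own assumptions for illustration. They are not Userpilot’s Agent Analytics schema or any MCP data format.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class AgentTask:
    completed: bool
    duration_s: float
    # Hypothetical labels: "missing_capability", "permissions", "auth", "data_shape"
    failure_mode: Optional[str] = None
    # Whether the completed task delivered what the human wanted (None = not reviewed)
    outcome_ok: Optional[bool] = None


def agent_metrics(tasks):
    """Compute the four agent-stream metrics from a list of AgentTask records."""
    completed = [t for t in tasks if t.completed]
    failed = [t for t in tasks if not t.completed]
    reviewed = [t for t in completed if t.outcome_ok is not None]
    return {
        "task_completion_rate": len(completed) / len(tasks) if tasks else 0.0,
        "median_time_to_completion_s": (
            median(t.duration_s for t in completed) if completed else None
        ),
        "failure_modes": dict(Counter(t.failure_mode or "unknown" for t in failed)),
        "outcome_quality": (
            sum(t.outcome_ok for t in reviewed) / len(reviewed) if reviewed else None
        ),
    }


# Hypothetical sample of five agent calls.
tasks = [
    AgentTask(True, 2.0, outcome_ok=True),
    AgentTask(True, 4.0, outcome_ok=False),
    AgentTask(True, 6.0, outcome_ok=True),
    AgentTask(False, 1.0, failure_mode="permissions"),
    AgentTask(False, 0.5, failure_mode="auth"),
]

print(agent_metrics(tasks))
```

Note that outcome quality is only computed over completed tasks that someone actually reviewed. Treating unreviewed tasks as successes would inflate the number.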

💡 Read related blog posts: Product analytics in 2026: human signals and agent signals, in two streams

3. Pricing and packaging – stop selling seats!!

The agent-era pricing question is the one most product strategy docs duck.

Seat-based pricing is built on the assumption that the number of users is tied to the value created.

But what happens when a single agent runs hundreds of tasks per day on behalf of a single human seat?

Agentic-era pricing is increasingly based on tasks completed, tokens consumed, or outcomes delivered, with the seat as a residual unit rather than the primary one.

Pricing for agent-heavy accounts is already a 2026 problem. Companies like Netlify report that around 80% of new signups are agents. Anchoring your strategy on seat economics in that environment is…just dumb. It’s pricing for yesterday.
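A quick back-of-the-envelope comparison shows why the seat stops being the right unit. All prices and volumes below are hypothetical, chosen only to illustrate the arithmetic. Amounts are in cents to keep the math exact.

```python
def seat_revenue_cents(seats, price_per_seat_cents):
    """Monthly revenue under pure seat-based pricing."""
    return seats * price_per_seat_cents


def task_revenue_cents(tasks_per_day, days, price_per_task_cents):
    """Monthly revenue under pure task-based pricing."""
    return tasks_per_day * days * price_per_task_cents


def hybrid_revenue_cents(seats, base_seat_cents, tasks, price_per_task_cents):
    """Seat as a residual unit: a small base fee per seat plus metered tasks."""
    return seats * base_seat_cents + tasks * price_per_task_cents


# Hypothetical account: one human seat whose agent runs 300 tasks a day for 30 days.
seat_only = seat_revenue_cents(seats=1, price_per_seat_cents=5000)
task_only = task_revenue_cents(tasks_per_day=300, days=30, price_per_task_cents=2)
hybrid = hybrid_revenue_cents(
    seats=1, base_seat_cents=1000, tasks=300 * 30, price_per_task_cents=2
)

print(f"Seat-based: ${seat_only / 100:.2f}")
print(f"Task-based: ${task_only / 100:.2f}")
print(f"Hybrid:     ${hybrid / 100:.2f}")
```

Under these assumed numbers, the same account is worth $50 a month on seats and $180 on tasks. The exact figures will differ for every product, but the direction of the gap is the point.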

How to do this without breaking your team

Two streams, quarterly refreshes, and an AI feature filter add up to a lot of new work for a team that already feels stretched.

Sounds like a recipe for burnout!

Here are three principles that have helped us inside Userpilot, and that have shown up in the PM forum threads where people are figuring this out in public.

1. Don’t try to run both streams with the same people every day

The Stream A discipline (human users, in-app guidance, onboarding, traditional product analytics) and the Stream B discipline (agent users, MCP, task completion metrics, agent observability) are different jobs that share a strategic backbone. Most product teams that try to make every PM equally good at both end up with PMs who do neither well.

The pattern that’s working: keep most of the team focused on Stream A, where most of the revenue still lives, and stand up a small group (often one PM, one engineer, sometimes one designer) focused on Stream B. The two groups share the same strategy doc and meet weekly to swap signal, which keeps stakeholder alignment tight without either stream getting starved of context. The Stream B group gets explicit air cover to ship things that don’t immediately convert, because that’s the bet.

2. Automate the monitoring layer, keep the judgment human

This is the lesson Yazan landed on while building Lia. The first version of Lia was an in-product assistant: you ask it to build a report, it builds the report. The second version, after a December rebuild, was different.

Yazan described the pivot bluntly: “You go in, you create a project, you tell it what you want, and it should do the rest. You’re no longer operating. The AI is operating. You’re just basically evaluating and monitoring the agent workflow.”

Lia builds in-product flows autonomously, freeing the PM to focus on strategy and discovery instead of operating dashboards.

The same principle applies to your product strategy. Automate the operational measurement layer so the team doesn’t burn its judgment on dashboard maintenance. Reserve the human judgment for the parts that actually need it: which feature deserves a sprint, which experiment counts as a positive result, which trade-off is worth making.

3. Kill features faster than you ship them

Engineering velocity creates clutter as fast as it creates value.

The product strategies we’ve seen survive 2026 are the ones that include a deliberate sunset cadence. Every quarter, the team identifies the bottom-quartile features by usage, and either fixes them, sunsets them, or absorbs them into a more-used flow.

Without discipline, your product will turn into a fossil of half-adopted features that confuse new users and slow down every future release.

As Abrar put it: “It’s about checking the data, especially the reports and dashboards related to that feature. We have a process: once we release a feature, we need to create a report and track meaningful events to see the usage and the feature health. From there, I look for where the drop-off is happening, in which step users are getting stuck.”

That’s the daily form of the same principle. The strategic form: every feature on the roadmap should have a documented kill condition before it ships.
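The quarterly sunset review and the documented kill condition can both be written down in a few lines. This is a sketch under our own assumptions: the `KillCondition` fields, the feature names, and the usage numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KillCondition:
    min_active_users: int   # below this, the feature is a sunset candidate
    review_after_days: int  # don't judge the feature before it has been live this long


def bottom_quartile(usage):
    """Return the least-used quarter of features (at least one, if any exist).

    usage: dict mapping feature name -> active users this quarter.
    """
    ranked = sorted(usage, key=usage.get)
    cutoff = max(1, len(ranked) // 4)
    return ranked[:cutoff]


def should_sunset(active_users, days_live, condition):
    """True once the review window has passed and usage is still under the bar."""
    return (
        days_live >= condition.review_after_days
        and active_users < condition.min_active_users
    )


# Hypothetical usage numbers for eight features.
usage = {
    "dashboards": 900,
    "segments": 700,
    "checklists": 650,
    "surveys": 400,
    "email": 300,
    "pdf export": 80,
    "ai summary": 45,
    "legacy widget": 12,
}

print(bottom_quartile(usage))
print(should_sunset(45, days_live=90, condition=KillCondition(100, 60)))
```

The bottom-quartile list is only the input to the conversation. Each candidate still goes through the fix, sunset, or absorb decision described above.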

Where product strategy is heading

The two factors that opened this post (engineering velocity outrunning user attention, and the rise of agent users) are not making our lives easier. They’re making them harder.

The shipping side is going to keep accelerating. The cost per engineering-hour-equivalent of building a feature has been falling for two years and shows no sign of stabilizing. Every product team should expect that the gap between “we should build that” and “it’s live” continues to compress.

The user side is going to keep diverging. The agent share of API calls into mainstream SaaS products will grow, the variety of agent types will multiply (proactive agents, scheduled agents, multi-agent workflows), and the metrics that distinguish a good agent integration from a broken one will mature into their own discipline. Customer success teams will start building agent-specific health scores alongside their existing human ones.

Here are some strategic implications for the product team:

- The product strategy doc gets shorter and lives longer. A two-page doc, refreshed quarterly, beats a forty-page doc revised annually. The forty-page doc is a fossil before the year is out.

- Discovery becomes the core competitive advantage. Anyone can ship. The teams that win in 2027 are the ones that ship the right thing, faster than the alternative.

- Agent observability becomes table stakes. If you don’t know how agent users are using your product, you don’t know what your product is. Add agent task completion to your event taxonomy this quarter.

- The PM job moves from operating to monitoring. The PMs who win are the ones who let AI handle the operational layer, and reserve their judgment for the parts only humans can call.

If you’re rewriting your product strategy this quarter (and you should be), the first question to put on the whiteboard is the one this post opened with: who are your users now, and how many of them are humans?

💡 Read related blog posts: Product-led growth in 2026 and Product marketing in 2026 both extend the two-stream model into PLG and PMM strategy.