Product Usage in 2026: How It’s Shifting in the AI Agents Era [Ultimate Guide]

Product usage is changing rapidly in 2026, and we can’t keep having the “same old, same old” conversation about it. Right now, somewhere in your product usage data, an AI agent is completing a task your “regular” product usage dashboard will never record.

Agents don’t click. They don’t open sessions. They don’t trigger the events your analytics tool was built around.

And they’re not an outlier: at Netlify, 80% of new signups are now agents, not humans.

For most of SaaS history, “user” meant a human moving through a UI. DAU, session length, feature adoption rate, retention curves: every metric we built assumed that SaaS products are used by human users. But now a new user has entered the picture: the AI agent.

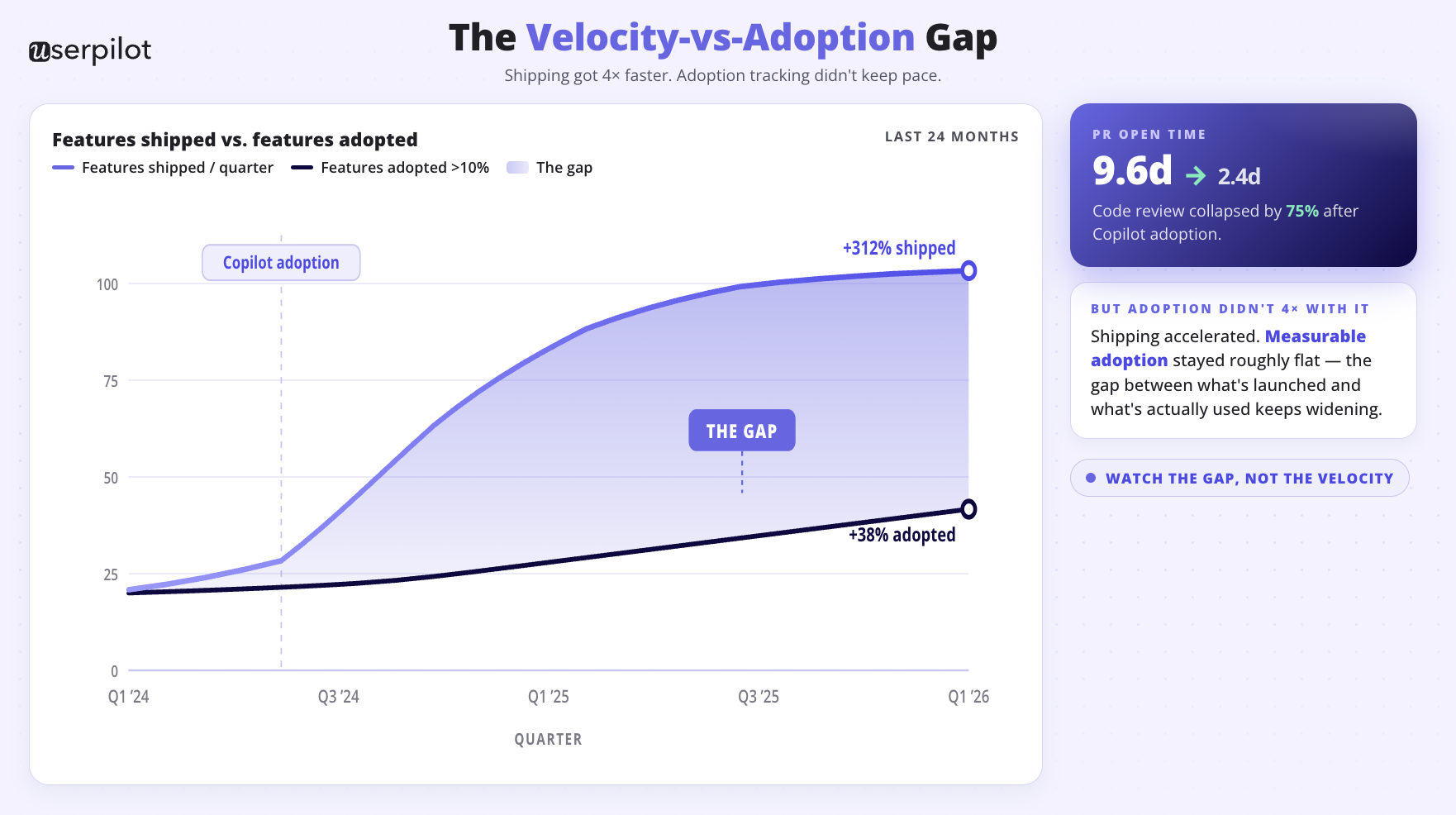

Human product usage has changed too. GitHub Copilot has cut PR open time from 9.6 days to 2.4 days. Features ship four times faster than two years ago, and product analytics hasn’t kept up. Most teams are sitting on a backlog of shipped features no one has properly measured, on top of an entire user class (agents) no one is measuring at all.

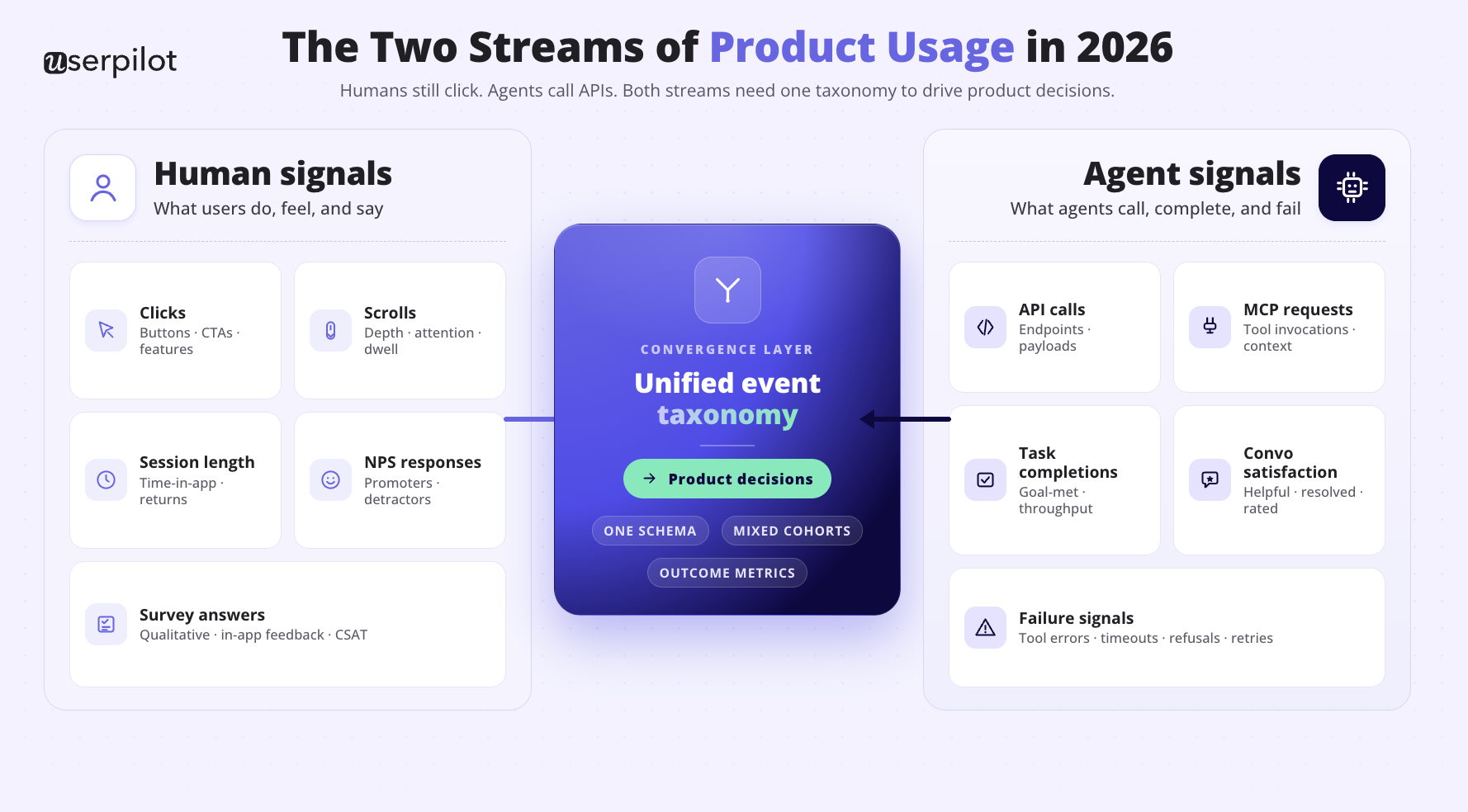

So there are now two streams of product usage: human signals and agent signals. Most teams are tracking only the first one, and usually half-heartedly. The teams fixing this fastest will read their own product more accurately than anyone still running a 2023 analytics setup.

So I wanted to write something more useful than another generic product usage metrics post.

This guide does three things:

- It breaks down what to track on each stream, calls out the metrics that are becoming irrelevant, and shows which metrics to track instead (for both agentic and human users).

- It gives you actionable advice on how to track both human and agentic product usage.

- It shows which tools you now need to monitor product usage for these two types of users: which features to look for in Product Analytics tools 2.0, plus what we’re shipping at Userpilot for Agentic Analytics this year.

Product usage in 2026: it’s not one stream anymore, it’s two

Product usage data has always been about one thing: how customers actually behave inside your product, as opposed to what they tell you they do. That core hasn’t changed. What has changed is who counts as a customer.

For most of SaaS history, “user” meant a human moving through a UI. Every metric we built (DAU, session length, feature adoption rate, time on page) assumed that user. Every analytics product on the market is still optimised for it.

That assumption has been quietly breaking since MCP went mainstream. As our CEO Yazan Sehwail puts it:

“Using session replay, NPS data, survey data, and product usage data, you’re able to get your answer without having to go to Userpilot, without having to pull data and upload it to someone. This is why MCP is a game changer.”

The implication for product usage: the next user reading your data, querying your features, or completing tasks inside your product is going to be an AI agent acting on behalf of a human.

And that agent leaves a completely different footprint than the human did.

So when we talk about product usage now, we’re talking about two parallel streams of behavioural data, not one. The teams that get this right will instrument both. The teams that don’t will keep optimising for a user that’s quietly becoming the minority. (If you want to read more about the full lifecycle frame and diagnostic triage framework this article builds on, read Product analytics in 2026.)

The human product usage stream: what’s still worth tracking, and what to stop

If you already know how to measure human product usage, skip to the next section. The fundamentals haven’t changed; the weighting has.

Here’s what still earns its keep:

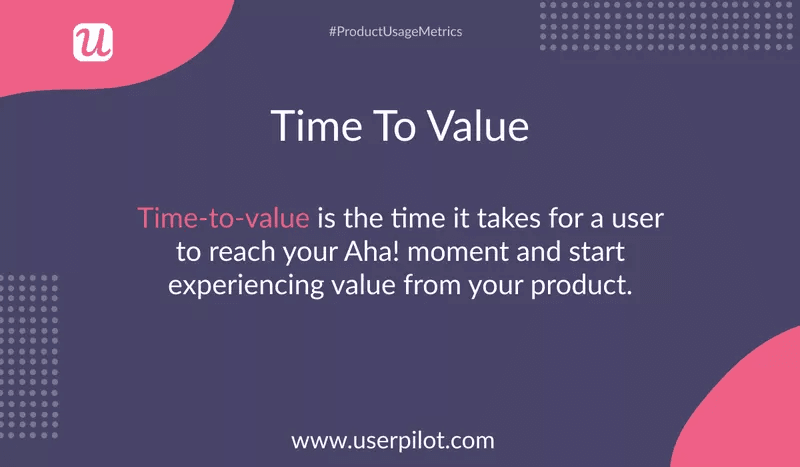

Time to value

This is the gap between signup and the moment a user reaches their first meaningful outcome. It is the single highest-leverage metric in any product-led motion because it predicts everything downstream: activation, retention, expansion. Time-to-value benchmarks have collapsed: under ten minutes used to be acceptable for B2B, but leading PLG products now deliver value on first touch, before account creation.
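As a rough illustration, time to value can be computed from two timestamps per user: signup and first meaningful outcome. The sketch below assumes you can export both from your analytics tool. The function name, user IDs, and timestamps are hypothetical, not any specific product’s API.

```python
from datetime import datetime
from statistics import median


def time_to_value_minutes(signups, first_outcomes):
    """Minutes from signup to first meaningful outcome, per user.

    Users who have not reached an outcome yet are left out.
    """
    result = {}
    for user_id, signed_up_at in signups.items():
        reached_at = first_outcomes.get(user_id)
        # Skip users with no outcome, or with an outcome logged before signup.
        if reached_at is not None and reached_at >= signed_up_at:
            result[user_id] = (reached_at - signed_up_at).total_seconds() / 60
    return result


# Hypothetical export: signup time and first-outcome time per user.
signups = {
    "u1": datetime(2026, 1, 5, 9, 0),
    "u2": datetime(2026, 1, 5, 10, 0),
    "u3": datetime(2026, 1, 6, 8, 30),
}
first_outcomes = {
    "u1": datetime(2026, 1, 5, 9, 4),
    "u2": datetime(2026, 1, 5, 10, 30),
}

ttv = time_to_value_minutes(signups, first_outcomes)
median_ttv = median(ttv.values())
print(median_ttv)  # 17.0
```

The median is used here rather than the mean because a handful of very slow users can otherwise dominate the number. Users like `u3`, who never reached an outcome, are worth counting separately.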

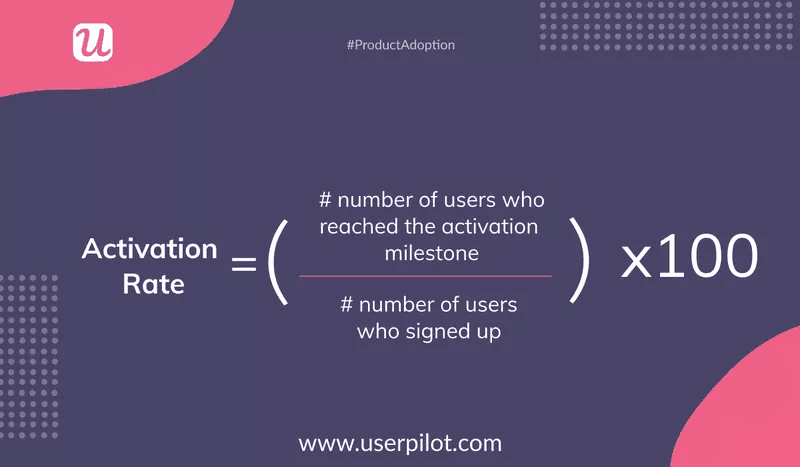

Product activation rate

The percentage of signups who hit your activation milestone. This is where most teams discover that their onboarding works for the people who would have figured it out anyway, and fails the rest. Top-quartile PLG companies hit 40 to 60% activation. Best-in-class hit 70%+. The painful part: activation is tracked by only 34% of PLG companies. If you’re not measuring it, you’re not improving it.
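If you want to sanity-check the number your dashboard reports, the calculation itself is small. This is a minimal sketch, assuming you have a list of signup IDs for a cohort and a list of users who hit your activation milestone. All names and IDs are made up for illustration.

```python
def activation_rate(signup_ids, activated_ids):
    """Share of a signup cohort that reached the activation milestone."""
    signups = set(signup_ids)
    if not signups:
        return 0.0
    # Only count activations that belong to this signup cohort.
    return len(signups & set(activated_ids)) / len(signups)


cohort = ["u1", "u2", "u3", "u4", "u5"]
activated = ["u2", "u5", "u9"]  # u9 signed up in a different cohort

rate = activation_rate(cohort, activated)
print(f"{rate:.0%}")  # 40%
```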

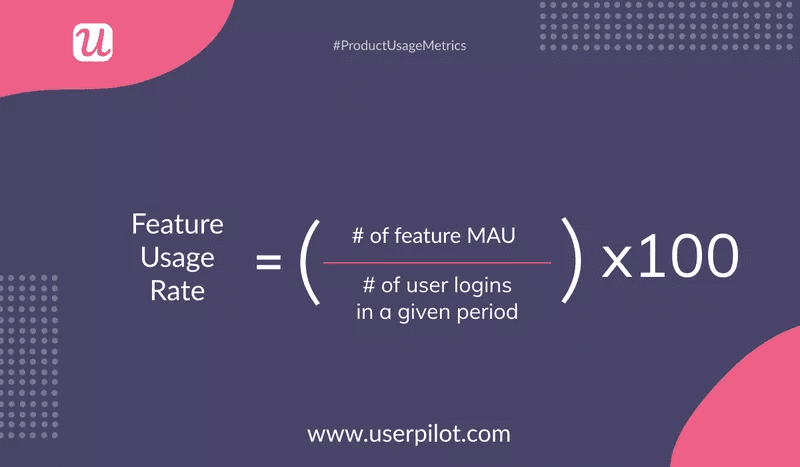

Feature adoption rate

The percentage of active users who actually use a specific feature. Useful for one specific question: did this feature land, or did it ship into silence? Track it for every feature release; cut the ones that never crack 10% adoption six months in.
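Here is one way the 10% check could look in practice. It is a sketch under simple assumptions: you have a list of active users and a stream of `(user_id, feature)` usage events. The feature names and the data are hypothetical.

```python
def feature_adoption_rates(active_users, usage_events, features):
    """Adoption rate per feature: distinct active users who used it / active users."""
    active = set(active_users)
    adopters = {feature: set() for feature in features}
    for user_id, feature in usage_events:
        # Ignore usage from non-active users and from untracked features.
        if user_id in active and feature in adopters:
            adopters[feature].add(user_id)
    if not active:
        return {feature: 0.0 for feature in features}
    return {feature: len(users) / len(active) for feature, users in adopters.items()}


active_users = [f"u{i}" for i in range(20)]
usage_events = [
    ("u0", "export"),
    ("u1", "export"),
    ("u1", "export"),   # repeat use by the same user counts once
    ("u2", "export"),
    ("u3", "webhooks"),
    ("guest", "export"),  # not an active user, ignored
]

rates = feature_adoption_rates(active_users, usage_events, ["export", "webhooks", "themes"])
below_threshold = sorted(f for f, r in rates.items() if r < 0.10)
print(below_threshold)  # ['themes', 'webhooks']
```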

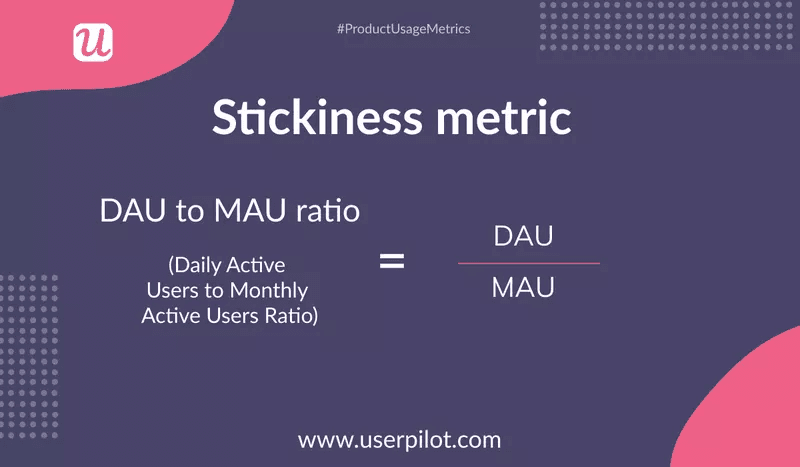

Product stickiness

How often users return inside a given period. Stickiness is one of the cleaner predictors of renewal because habit formation tends to precede expansion.
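One common way to put a number on stickiness is the DAU/MAU ratio: average daily active users divided by monthly active users. The sketch below assumes activity is available as `(user_id, date)` pairs. The function name and sample data are hypothetical, and the ratio is only as meaningful as your definition of "active".

```python
import calendar
from collections import defaultdict
from datetime import date


def dau_mau_ratio(activity, year, month):
    """Average daily active users divided by monthly active users."""
    daily = defaultdict(set)
    monthly = set()
    for user_id, day in activity:
        if day.year == year and day.month == month:
            daily[day].add(user_id)
            monthly.add(user_id)
    if not monthly:
        return 0.0
    days_in_month = calendar.monthrange(year, month)[1]
    # Days with no activity count as zero active users.
    avg_dau = sum(len(users) for users in daily.values()) / days_in_month
    return avg_dau / len(monthly)


# User "a" is active every day in April 2026; user "b" on six days.
activity = [("a", date(2026, 4, d)) for d in range(1, 31)]
activity += [("b", date(2026, 4, d)) for d in range(1, 7)]
activity.append(("c", date(2026, 5, 1)))  # outside the month, ignored

ratio = dau_mau_ratio(activity, 2026, 4)
print(round(ratio, 2))  # 0.6
```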

What I’d stop paying so much attention to in 2026

Two metrics get more attention than they deserve in the human stream, and both fall apart even faster once agent traffic enters the mix.

DAU/MAU ratio. A B2B user who logs in once a week to run a high-value report is worth more than one who logs in daily to check a dashboard they ignore. The DAU/MAU stickiness benchmark is around 20% for most B2B SaaS, and chasing it will pull you toward feature decisions that maximise login frequency rather than outcomes.

Session length. A long session can mean deep engagement or deep confusion. Without context, it’s noise. The number you actually want is “time to outcome,” not “time in product.”

The agent product usage stream: the second user most teams aren’t tracking yet

This is where things get interesting, because almost no one is tracking this stream well.

An “agent user” is any AI system, whether it’s a third-party agent like Claude or ChatGPT querying your product through an MCP server, an internal agent your customer built on top of your APIs, or an autonomous workflow chained through Cursor or Cowork. From your product’s point of view, these agents look like API calls, MCP queries, and task completions. They don’t generate clicks. They don’t open sessions in any normal sense. They don’t fill out surveys.

And they’re already a meaningful slice of traffic. Wes Bush, the founder of Product-Led, has been tracking early indicators: at Netlify, 80% of new signups are AI agents, not humans. PLG 3.0, in his framing, is exactly this shift: products built for AI agents first, humans second.

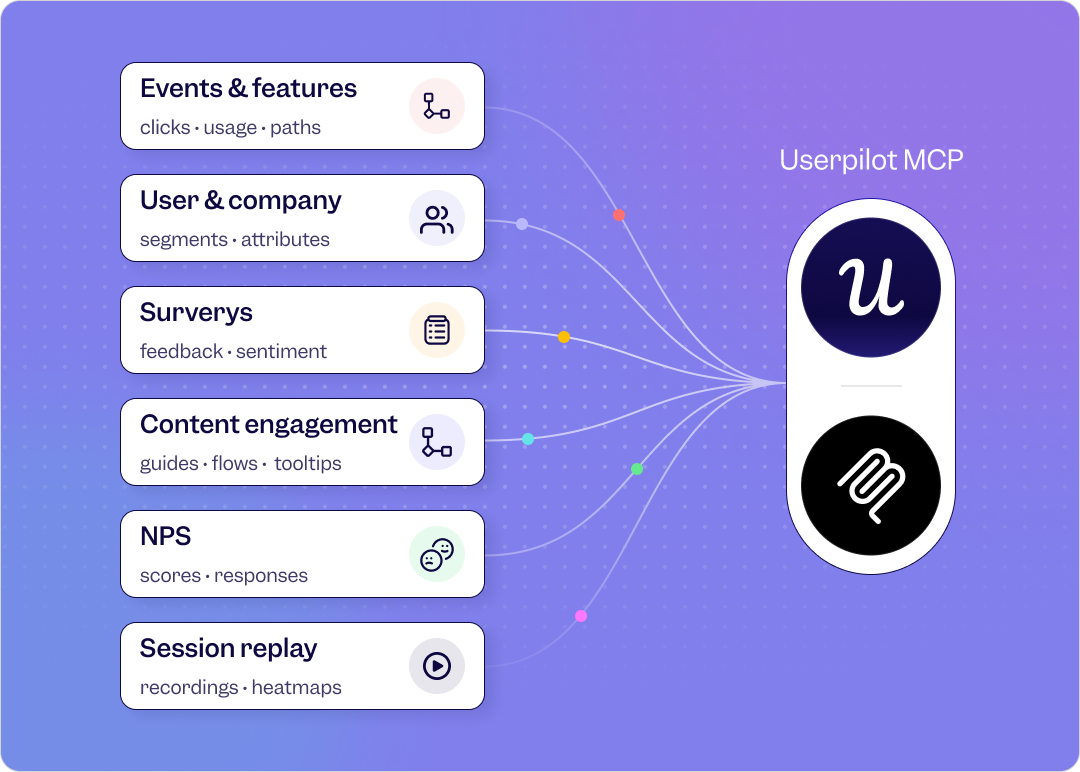

Userpilot’s CEO Yazan Sehwail put it more directly when we talked about why Userpilot is investing so heavily in the MCP layer:

“Today, the first thing you fire up when you start your day is probably Slack. You’re probably opening your Slack before you open your email, and the reason is Slack became the centre of work. The same is going to happen with a platform like Claude Code and Claude Cowork. If this is happening, then it is very, very important that such a platform has context around the key areas of your business: your CRM, your email, your communication, your storage, your documentation. For us, we see Userpilot as becoming the infrastructure that powers your product usage data for that sort of system.”

Read: foundational AI tools are becoming the surface where work happens, and your product usage data needs to be queryable from inside those tools, not trapped in your dashboard.

What agent product usage actually looks like

The agentic-observability community has spent the last year building out the metric set that actually matters when your user is an AI. The most useful frameworks I’ve found are Galileo’s agent evaluation framework and Arthur AI’s 2026 playbook. Both converge on a similar set of measurements:

- Task completion rate. The percentage of agent runs that finish successfully without human intervention. This is the agent-side equivalent of activation.

- Resolution rate. The percentage of agent conversations or queries that end in a successful outcome (issue resolved, question answered, action completed).

- Satisfaction score. Either explicit (the human approves the agent output) or implicit (no re-prompt, no escalation).

- Failure rate. The percentage of runs that fail outright or produce a hallucination, broken by failure type (instruction following, tool selection, retrieval accuracy).

- Trajectory metrics. The complete reasoning and execution path the agent takes, including intermediate steps, tool selections, and decision sequences. Useful for debugging.

- Latency and cost per run. Token consumption and end-to-end response time. Both are real budget considerations once agent volume scales.
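To make the list above concrete, here is a minimal sketch of how several of these metrics could be rolled up from raw run records. The `AgentRun` fields and the sample data are assumptions for illustration; neither Galileo nor Arthur AI prescribes this schema.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRun:
    run_id: str
    succeeded: bool
    needed_human: bool            # True if a human had to step in
    failure_type: Optional[str]   # e.g. "tool_selection"; None on success
    latency_ms: int
    tokens: int


def summarize_runs(runs):
    """Aggregate completion, failure, latency, and token metrics."""
    total = len(runs)
    if total == 0:
        return {}
    # A run counts as completed only if it succeeded without human help.
    completed = sum(1 for r in runs if r.succeeded and not r.needed_human)
    failures = Counter(r.failure_type or "unknown" for r in runs if not r.succeeded)
    return {
        "task_completion_rate": completed / total,
        "failure_rate": sum(failures.values()) / total,
        "failures_by_type": dict(failures),
        "avg_latency_ms": sum(r.latency_ms for r in runs) / total,
        "avg_tokens": sum(r.tokens for r in runs) / total,
    }


runs = [
    AgentRun("r1", True, False, None, 1000, 500),
    AgentRun("r2", True, True, None, 2000, 1500),
    AgentRun("r3", False, False, "tool_selection", 3000, 2000),
    AgentRun("r4", False, False, "retrieval_accuracy", 2000, 1000),
]

summary = summarize_runs(runs)
print(summary["task_completion_rate"], summary["failure_rate"])  # 0.25 0.5
```

Note that `r2` succeeded but needed a human, so it counts toward neither completion nor failure. Whether that middle bucket deserves its own metric is a judgment call for your team.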

The reliability picture from this data is sobering:

- Enterprise AI deployments show 60% success on single runs, dropping to 25% across eight runs.

- 89% of organisations have implemented agent observability.

- Quality issues are the #1 production barrier at 32%.

- Gartner has projected that over 40% of agentic AI projects will be cancelled by the end of 2027, mostly because teams can’t measure them well enough to justify the spend.

What instrumentation this actually requires

If you only track click events, you can’t see any of this. Agent activity is backend traffic: API calls, MCP requests, task-completion events, conversation logs.

The minimum viable agent-stream instrumentation:

- Tag every API endpoint and MCP tool call as a tracked event with the calling agent identified (Claude vs. Cursor vs. internal agent vs. third-party).

- Add a “task completion” event class to your taxonomy, distinct from “feature interaction.” Task completion is the agent-side equivalent of an aha moment.

- Capture conversation entry points if you have an in-product agent. Where users start asking the agent for help is a high-signal indicator of unmet needs in the UI.

- Track resolution rate and failure rate per topic area. The topics where agents consistently fail are exactly the topics your product or onboarding hasn’t solved.
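The first two items on that list can be sketched in a few lines. This assumes each incoming call carries some client name you can read (for example, a self-reported client identifier or a User-Agent string). The marker strings, the `TrackedEvent` shape, and the endpoint names are all hypothetical, and real client identifiers will vary.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical substrings to match in a caller's self-reported client name.
KNOWN_AGENTS = ("claude", "cursor", "chatgpt")


def classify_caller(client_name: str, is_internal: bool = False) -> str:
    """Map a client name to a coarse agent label for event tagging."""
    if is_internal:
        return "internal_agent"
    lowered = client_name.lower()
    for agent in KNOWN_AGENTS:
        if agent in lowered:
            return agent
    return "third_party"


@dataclass
class TrackedEvent:
    event_class: str                  # "task_completion" or "feature_interaction"
    name: str                         # endpoint or MCP tool name
    account_id: str
    caller: str                       # label from classify_caller
    succeeded: Optional[bool] = None  # only set for task completions


def track_call(name, account_id, client_name, completes_task,
               succeeded=None, is_internal=False):
    """Build a tagged event; task completions carry an explicit outcome."""
    caller = classify_caller(client_name, is_internal)
    if completes_task:
        return TrackedEvent("task_completion", name, account_id, caller, succeeded)
    return TrackedEvent("feature_interaction", name, account_id, caller)


event = track_call("create_report", "acct_42", "claude-ai",
                   completes_task=True, succeeded=True)
print(event.event_class, event.caller)  # task_completion claude
```

Keeping `task_completion` as its own event class means you can later filter on outcomes without guessing which API calls represented a finished task.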

The product usage metrics evolution map: same questions, two different answers

The simplest way to translate your existing product usage frame to a 2026-ready one is to ask the same question for both streams.

| Question you’re trying to answer | Human-stream metric | Agent-stream metric |
|---|---|---|
| Who’s actively getting value? | Active users (with a meaningful action filter, not raw logins) | Active agent runs per account, weighted by task type |
| How fast can someone reach value? | Time to first meaningful outcome | Time to first successful agent task completion |
| Are users actually using this feature? | Feature adoption rate among active users | Agent invocation rate against the feature endpoint |
| Are they coming back? | Retention by cohort, week 4 / week 8 | Repeat agent task completions per account, week 4 / week 8 |
| Are they satisfied? | NPS, CSAT, in-app feedback | Resolution rate, conversation satisfaction, failure rate |
| Where are they getting stuck? | Funnel drop-off + session replay | Failure rate by topic + trajectory replay |

The questions are the same. The instrumentation is different. Most teams have built half of this and called it a day.

Why monitoring product usage across both streams is no longer optional

The temptation, especially for early-stage teams, is to treat agent-side measurement as something to figure out in 2027. That’s a mistake, and it’s a mistake for two reasons that compound each other.

Reason one: shipping has outpaced adoption

Yazan put this directly:

“As AI is also writing code, it’s helping engineers and product teams build features a lot faster. Instead of every quarter you’re releasing one or two features, now you’re releasing seven, eight, nine. What happens is now it becomes even harder for product teams to manually have to track each one and understand usage for each one and come up with hypotheses and insights on each one. So you definitely need to automate a lot of this. It becomes extremely necessary.”

This is the velocity-vs-adoption gap, and it’s wider than most teams realise. The PR-time data from Copilot deployments shows engineering moving four times faster. There is no equivalent productivity gain in product analytics. The result is a backlog of features shipped without a clear read on whether anyone is using them. If you’re not automating the analysis layer, you’re not closing this gap.

Reason two: agent traffic is already in your data, you’re just not seeing it

If your product has a public API, an MCP integration, or any backend access path, agents are already using it. Whether you’ve labelled their traffic or not, they’re firing events. They’re hitting endpoints. They’re consuming compute. And because most analytics setups treat backend events as “system noise” or filter them out as bot traffic, the result is: a meaningful and growing share of your real product usage is invisible to your dashboards.

Until you label agent traffic explicitly and start measuring its outcomes, you’re flying blind on the user type that’s growing fastest.

What to do about your product usage tracking this quarter?

Here’s what I’d prioritise, split by stream. None of this requires a roadmap rewrite. Most of it is instrumentation work.

Human stream: tighten what you already have

- Audit your activation milestone. Is it tied to a meaningful outcome (publish a first report, invite a first teammate) or to a setup task (complete profile)? Setup tasks predict nothing. Outcome milestones predict retention.

- Replace DAU/MAU with “active users with meaningful action.” Pick the one or two events that correlate with retention and report on the count of users who hit at least one of them per period.

- Run the funnel → session replay → survey diagnostic on every feature with low adoption. Funnel tells you where people drop off, replay tells you what they’re actually doing at that step, survey tells you why. This is the standard Userpilot triage and it works.

- Tag features by use case, not by functionality. Segment users who came in for “reporting” differently from users who came in for “collaboration.” Their adoption paths and retention curves are different products.
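The “active users with meaningful action” swap is simple to prototype. The sketch below assumes events arrive as `(user_id, event_name, date)` tuples. The event names are hypothetical stand-ins for whichever one or two events correlate with retention in your product.

```python
from datetime import date

# Hypothetical events that correlate with retention in this example product.
MEANINGFUL_EVENTS = {"report_published", "teammate_invited"}


def meaningful_active_users(events, start, end, meaningful=MEANINGFUL_EVENTS):
    """Users who fired at least one meaningful event within [start, end]."""
    return {
        user_id
        for user_id, name, day in events
        if name in meaningful and start <= day <= end
    }


events = [
    ("u1", "login", date(2026, 3, 2)),
    ("u1", "report_published", date(2026, 3, 3)),
    ("u2", "login", date(2026, 3, 3)),
    ("u2", "login", date(2026, 3, 4)),           # logs in daily, does nothing else
    ("u3", "teammate_invited", date(2026, 3, 5)),
    ("u4", "report_published", date(2026, 2, 20)),  # outside the period
]

active = meaningful_active_users(events, date(2026, 3, 1), date(2026, 3, 7))
print(sorted(active))  # ['u1', 'u3']
```

A raw-login count for the same week would include `u2`, who never did anything that predicts retention.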

Agent stream: start instrumenting now, even at low volume

- Identify your agent surfaces. Which endpoints can an external agent already reach (public API, MCP integration, third-party connectors)? Which internal agents do you ship inside your product? Map these first.

- Add a “task completion” event class. For every agent-accessible endpoint, define what “success” looks like and emit that as a tracked event. Without this, you can count requests but not outcomes.

- Track topics and intent. If you have an in-product agent, group the conversations into topic categories. The high-volume topics tell you what users actually want from your product. The high-failure topics tell you what your product hasn’t solved yet.

- Watch failure signals like you’d watch churn. Frustration patterns (re-prompts, rage clicks, abandoned conversations) are the agent-stream equivalent of a low NPS score. Catch them before they turn into a support ticket or a cancellation.

- Connect the streams. Cross-reference low NPS scores from the human side with high agent-failure rates on the same accounts. The overlap tends to be tight, and acting on it cuts churn faster than chasing either signal alone.
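Connecting the streams can start as a simple join on account ID. This is a sketch under stated assumptions: one recent NPS response score (0 to 10) per account, and a per-account agent failure rate computed elsewhere. The 0.3 failure threshold is an arbitrary placeholder, and the account names are made up.

```python
def accounts_at_risk(nps_by_account, agent_failure_rate,
                     max_nps=6, min_failure_rate=0.3):
    """Accounts with a detractor-range NPS score and a high agent failure rate."""
    return sorted(
        account
        for account, score in nps_by_account.items()
        # Accounts with no agent data are treated as having a 0.0 failure rate.
        if score <= max_nps
        and agent_failure_rate.get(account, 0.0) >= min_failure_rate
    )


nps_by_account = {"acme": 4, "globex": 9, "initech": 6, "umbrella": 3}
agent_failure_rate = {"acme": 0.45, "globex": 0.50, "initech": 0.10}

flagged = accounts_at_risk(nps_by_account, agent_failure_rate)
print(flagged)  # ['acme']
```

The default `max_nps=6` follows the standard NPS convention that scores of 0 to 6 are detractors. Tune both thresholds against your own churn data before acting on the output.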

How product usage analytics tools are evolving (and why this matters for your stack)

The product analytics market has spent the last year repositioning around exactly this two-stream problem. The clearest signal: in the last six months, the major web analytics platforms (Google Analytics among them) shipped MCP server access, letting teams query analytics in plain language from Claude, ChatGPT, or Cursor without ever touching a dashboard.

A wave of product analytics vendors followed, racing to add agent-side measurement on top of the human-side reporting they were built around. Specialised startups have appeared focused on the agent-side instrumentation problem alone. The pattern is unmistakable: product usage analytics is becoming a queryable layer that any AI tool can plug into, and the platforms that win the next two years will ship the agent-side measurement frameworks alongside the human-side ones they were built on.

The Userpilot AI Suite (coming May 2026)

I’m going to be direct about how Userpilot fits into this, because the timing is the point. The Userpilot AI Suite ships May 2026 in three connected pieces, and each piece maps to a specific part of the two-stream problem above.

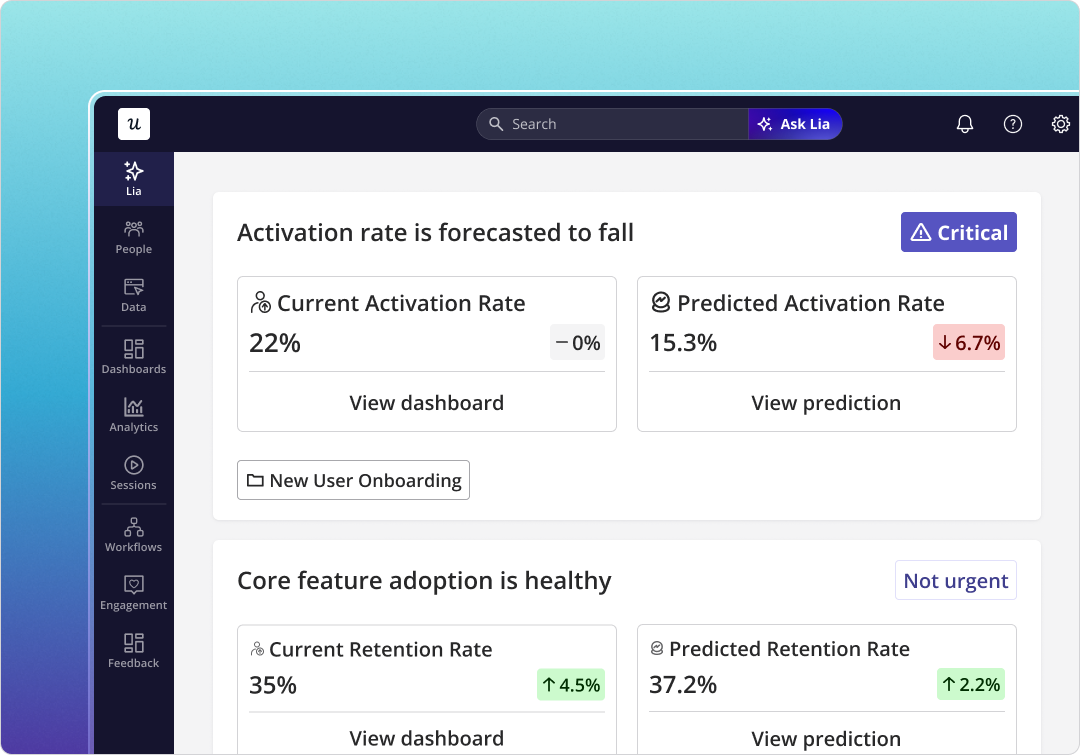

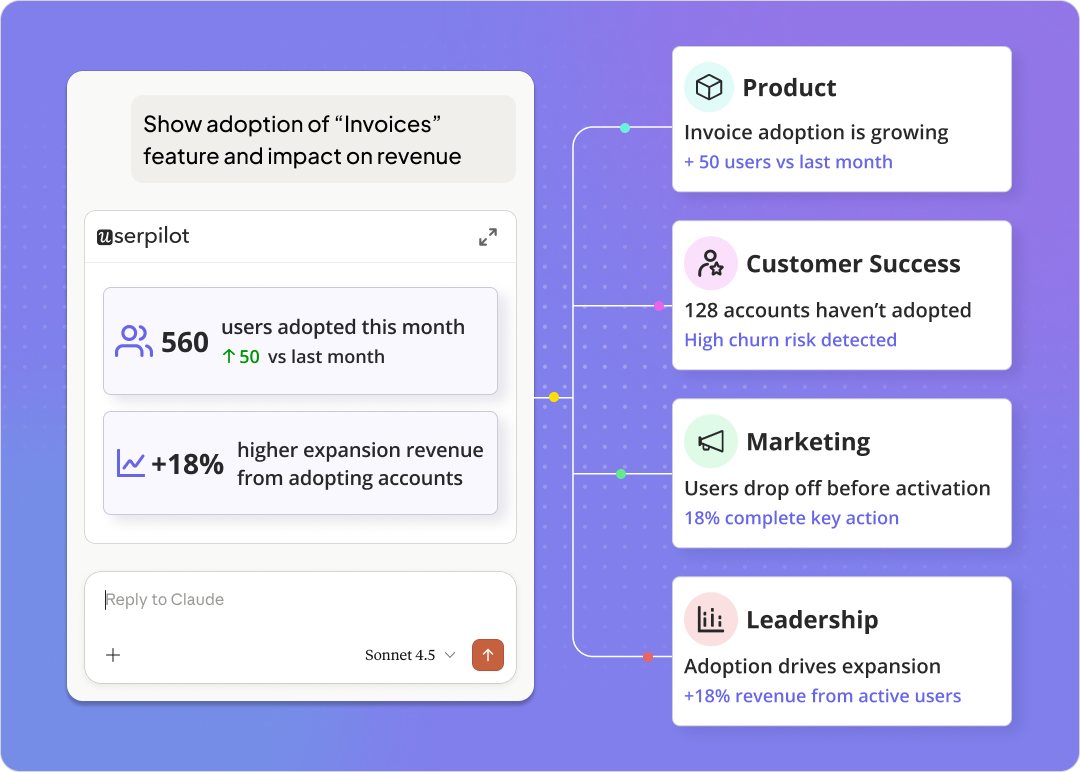

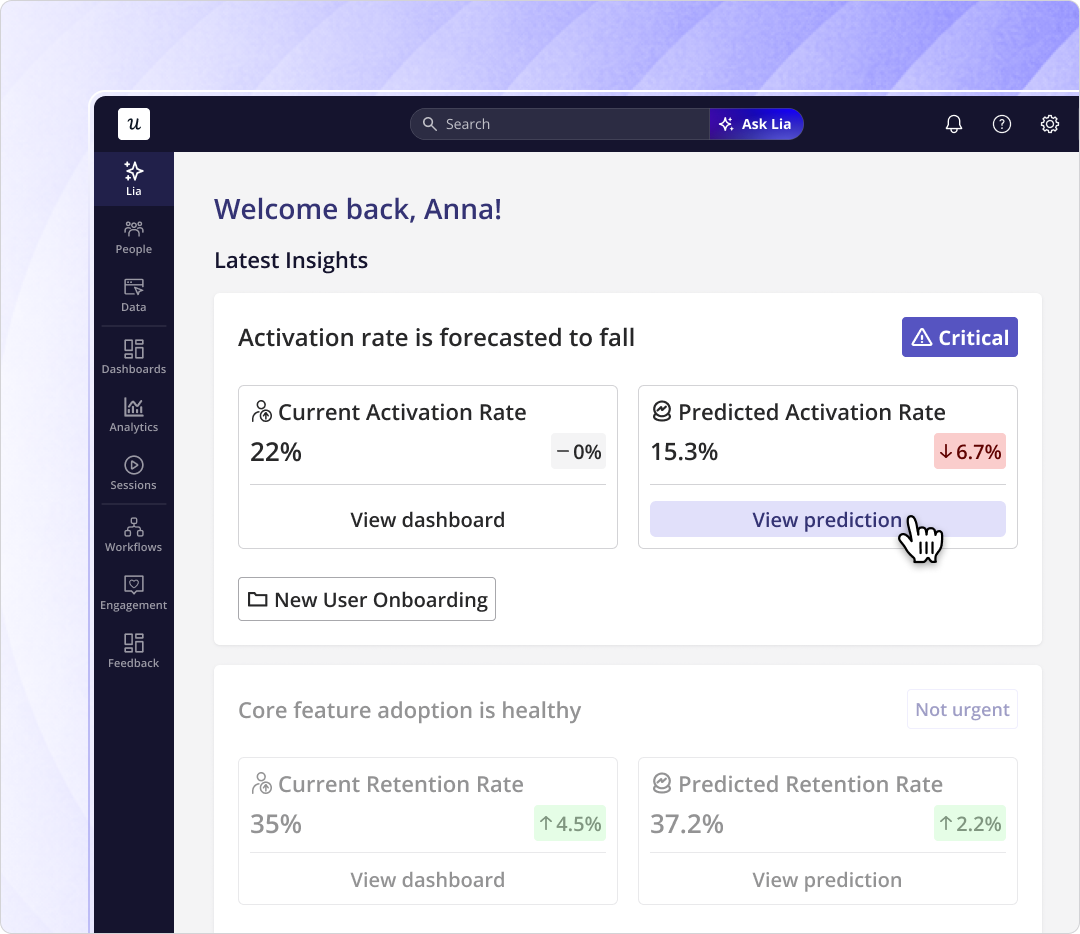

Lia (the always-on AI agent and chatbot). Lia is the answer to the velocity-vs-adoption gap. You set up a project around an outcome (retention, monetisation, activation, churn), and Lia monitors it 24/7, surfaces what’s pushing the metric up or putting it at risk, gives you reasoning behind every insight with recommended next steps, and can build the in-app fix and launch it with as much autonomy as you give her. Lia runs in two modes: an autonomous always-on agent that monitors your product without input, and a chatbot you can ask anything in plain language. Phase 1 (reactive analytics + chat) ships May. Phase 2 (proactive monitoring + autonomous in-app content creation) is fast-follow.

Our CEO described the design choice this way:

“You change from an operator to monitoring. You’re no longer operating. The AI is operating. You’re just basically evaluating and monitoring the agent workflow. You go to Lia and say, my goal is to improve trial-to-paid conversion. You start that as a project. Lia goes, gets all the data, builds the reports around the goal, builds the dashboards for you, and then comes up with actionable insights to tell you why people convert, why people don’t convert, and what you need to do.”

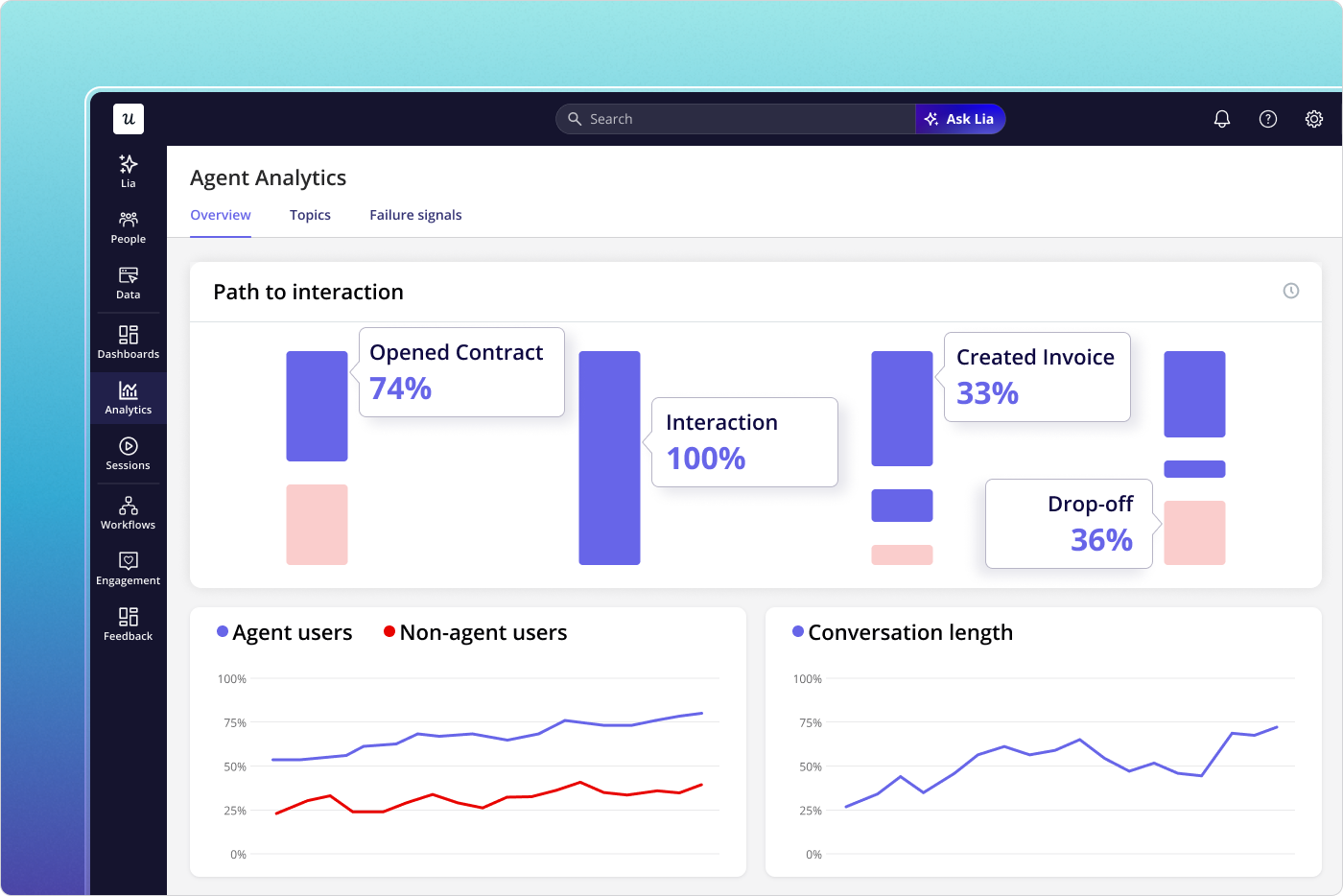

AI Agent Analytics. This is the agent-stream measurement layer applied to AI agents inside your product. Three analytical layers: Usage Overview (who’s using the agents and what’s the impact on retention and revenue), Topics & Intent (what users are actually asking, including high-frequency pain points and product gaps), and Failure Signals (where agents are frustrating users, including frustration detection and resolution failures by topic). Eight metrics tracked: conversation volume, queries per user, resolution rate, satisfaction score, conversation entry points, top asked questions, retention rate, and failure rate. AI Agent Analytics ships early next quarter.

MCP Server. This is the connectivity layer. The Userpilot MCP server connects your product usage data directly into Claude, ChatGPT, Cursor, Copilot, or any MCP-compatible tool. Marketing, sales, CS, and leadership get the same product context that used to live only with PMs, inside the tools they already use. Yazan sees this as a cross-team democratisation move: finance teams that have never logged into Userpilot will be able to ask a foundational model about product usage in plain language and get answers grounded in real data, not guesses. The MCP Server ships with Lia Phase 1 in May.

Together, the three pieces are designed to handle both streams of product usage from the same security foundation (SOC 2, GDPR, HIPAA aligned).

Where product usage measurement is heading

The two problems this article opened with (shipping velocity outpacing adoption, and agent traffic going unmeasured) are not getting easier. They’re getting harder. Here’s where I think this is going over the next 18 months.

Both streams collapse into a single decision layer

Right now, most teams have human-stream tooling and are starting to add agent-stream tooling on top. Within 18 months, the unified decision layer (which metrics matter, which features are working, which accounts are at risk) will need to read from both streams equally. The companies that get there first will run their product strategy on a complete view of usage. Everyone else will be trying to draw conclusions from half the data.

Predictive replaces descriptive

The traditional analytics workflow (something happens, you notice, you investigate, you act) takes days or weeks. Agentic-era analytics compresses it: anomaly detection flags issues before you notice them, pattern recognition surfaces actionable insights without manual queries, and predictive models identify churn risk before it’s behaviourally visible. Yazan frames the shift simply: instead of spending hours going through reports and session replays, a PM’s job moves toward strategic thinking and problem discovery. The monitoring becomes the AI’s job.

The competitive moat shifts to context

If every analytics tool eventually exposes its data through MCP, then the differentiator is no longer “what data can I query?” but “how well does the AI understand my product?” The teams whose analytics layer has been trained on their actual product structure, their event taxonomy, their customer history, and their lifecycle definitions are the ones whose AI agents will produce useful answers. Generic AI on top of unstructured data hallucinates. AI grounded in product context surfaces signal.

That’s the shift. Product usage data has been important for years. In the next 18 months, it becomes the competitive layer.

Bottom line

If you take one thing from this: stop treating product usage as a single stream of human behaviour. There are two streams now, and the gap between them is going to widen for at least the next two years.

The teams that instrument both, build the predictive analysis on top, and connect their product data to the AI tools their teams actually use are going to ship faster, retain better, and read their own product more accurately than anyone running last decade’s analytics setup.

If you’re at Userpilot already, the MCP Server and Lia ship in May. If you’re not, book a demo and we’ll walk you through how the AI Suite handles both streams from one platform. And whether you build it on Userpilot or on something else, start measuring agent usage this quarter. The data you don’t capture now is the diagnostic gap you’ll be paying for in 2027.

Read related blog posts:

- product analytics in 2026 (the full hub)

- product-led growth in the AI era

- in-app surveys done honestly

- growth hacking vs. growth marketing (how the broader growth motion shifts)