Product roadmaps in the AI era: from delivery plan to decision system

I’ve watched product managers defend a 12-month product roadmap like their life depended on it, then spend most of Q3 explaining why nothing from Q1’s plan survived contact with reality.

I’ve also sat in planning sessions where the roadmap document was 40 slides and nobody in the room agreed on what it was actually for.

In 2026, things have changed, and most product roadmap processes haven’t caught up.

AI (or rather “vibe coding”) has really narrowed the gap between “we should build that” and “it’s live”.

We’re basically shipping new features at an unprecedented rate, which means bad prioritization and weak discovery now compound at a speed the old annual planning cycle wasn’t designed to handle.

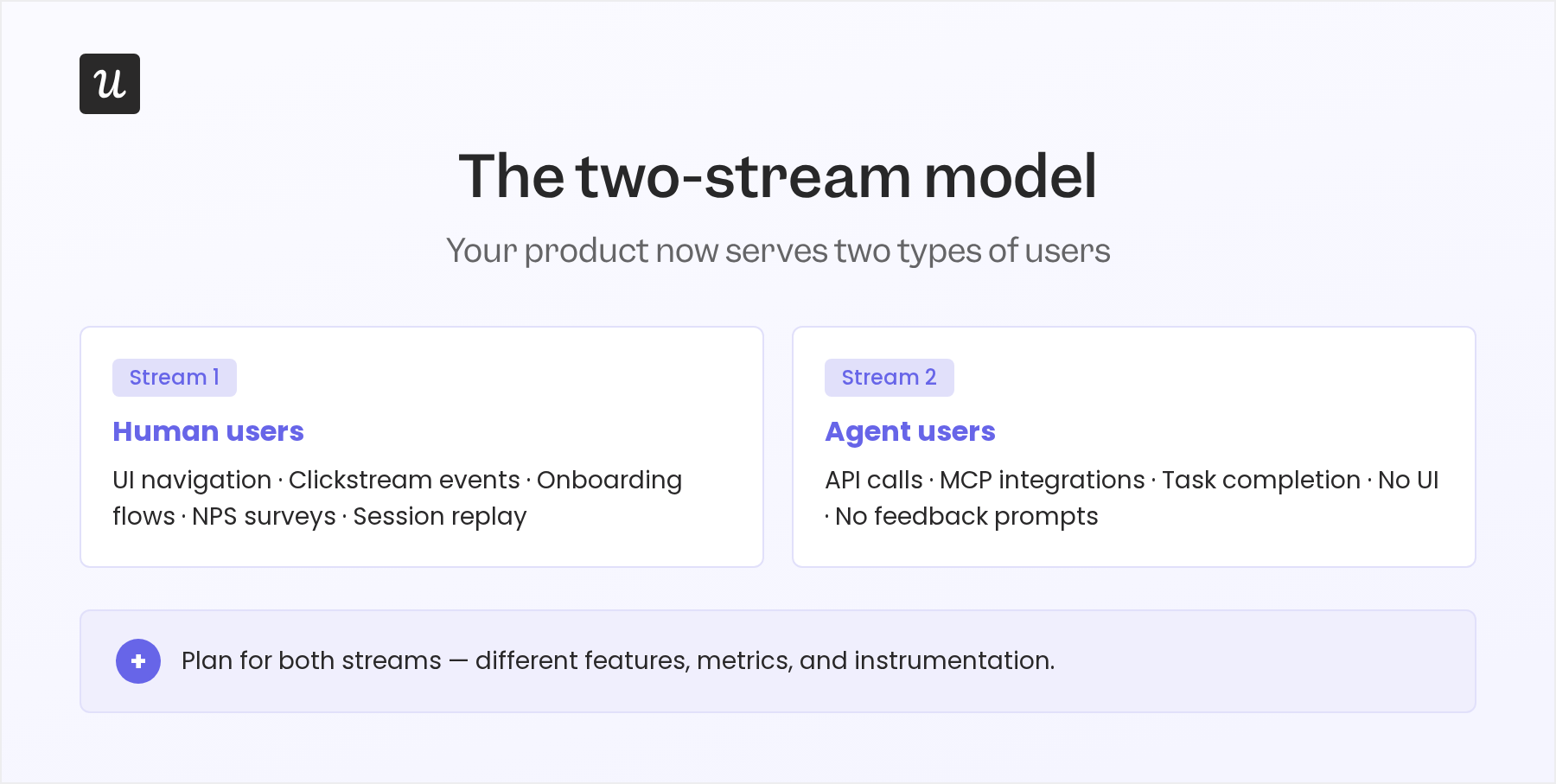

Then another thing changed: a new kind of user has entered the game. AI agents are beginning to use SaaS products directly, through APIs and protocols like MCP (Model Context Protocol), without ever opening a UI.

They don’t onboard through tooltips, trigger clickstream events, or send NPS responses. Most roadmaps I see still plan as if they don’t exist. That’s a problem that compounds.

So instead of yet another beginner’s “product roadmap guide,” I wanted to write about how these two shifts (vibe coding and agentic users) affect how we should approach product roadmapping:

- How to plan for both human users and AI agent users, aka “the two-stream user model”

- A quarterly bet structure that replaces false precision with explicit assumptions we know will need to be tested

- New metrics for agent-era products that traditional clickstream product analytics can’t capture

- The operating cadence that keeps a product roadmap realistic without thrashing it weekly

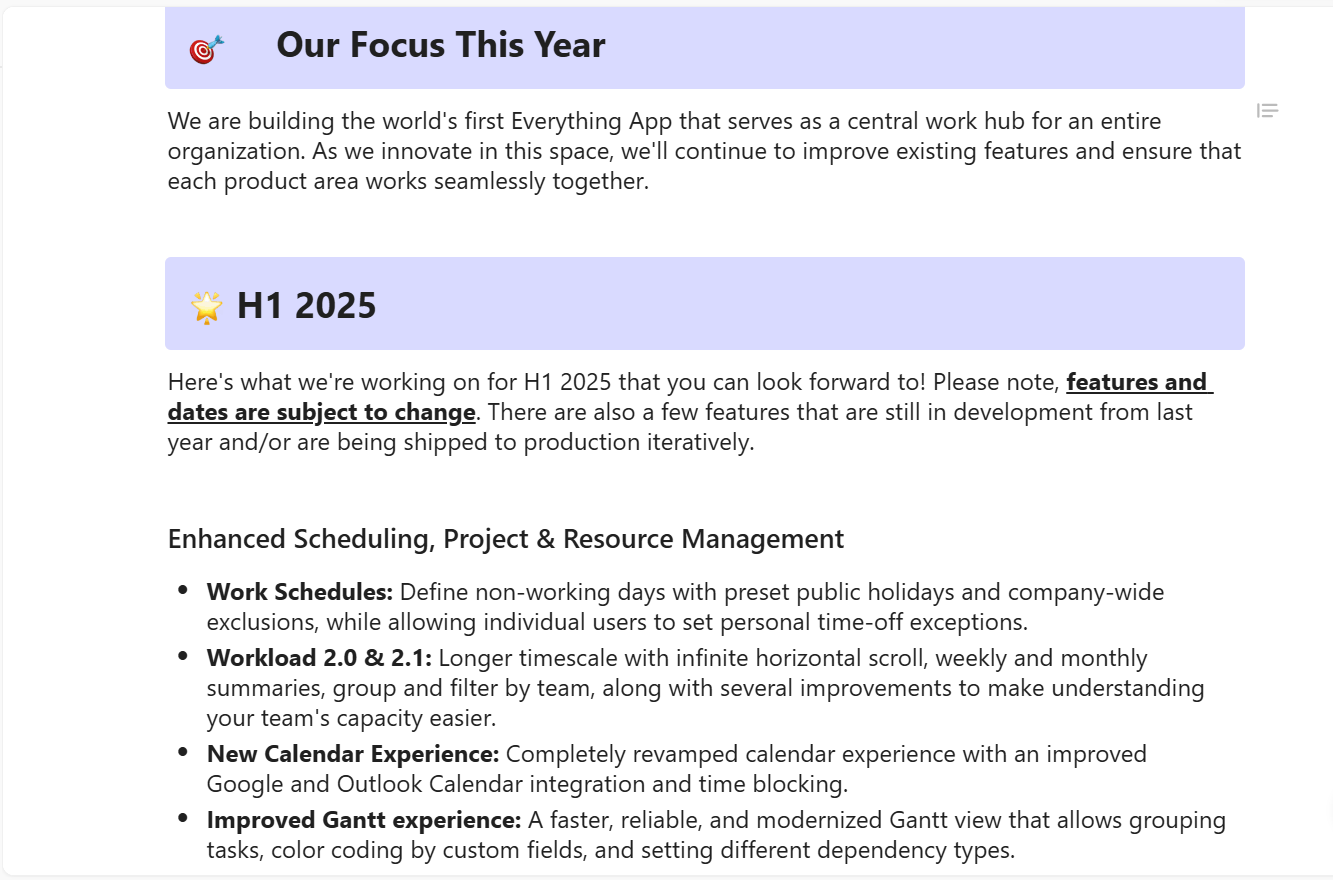

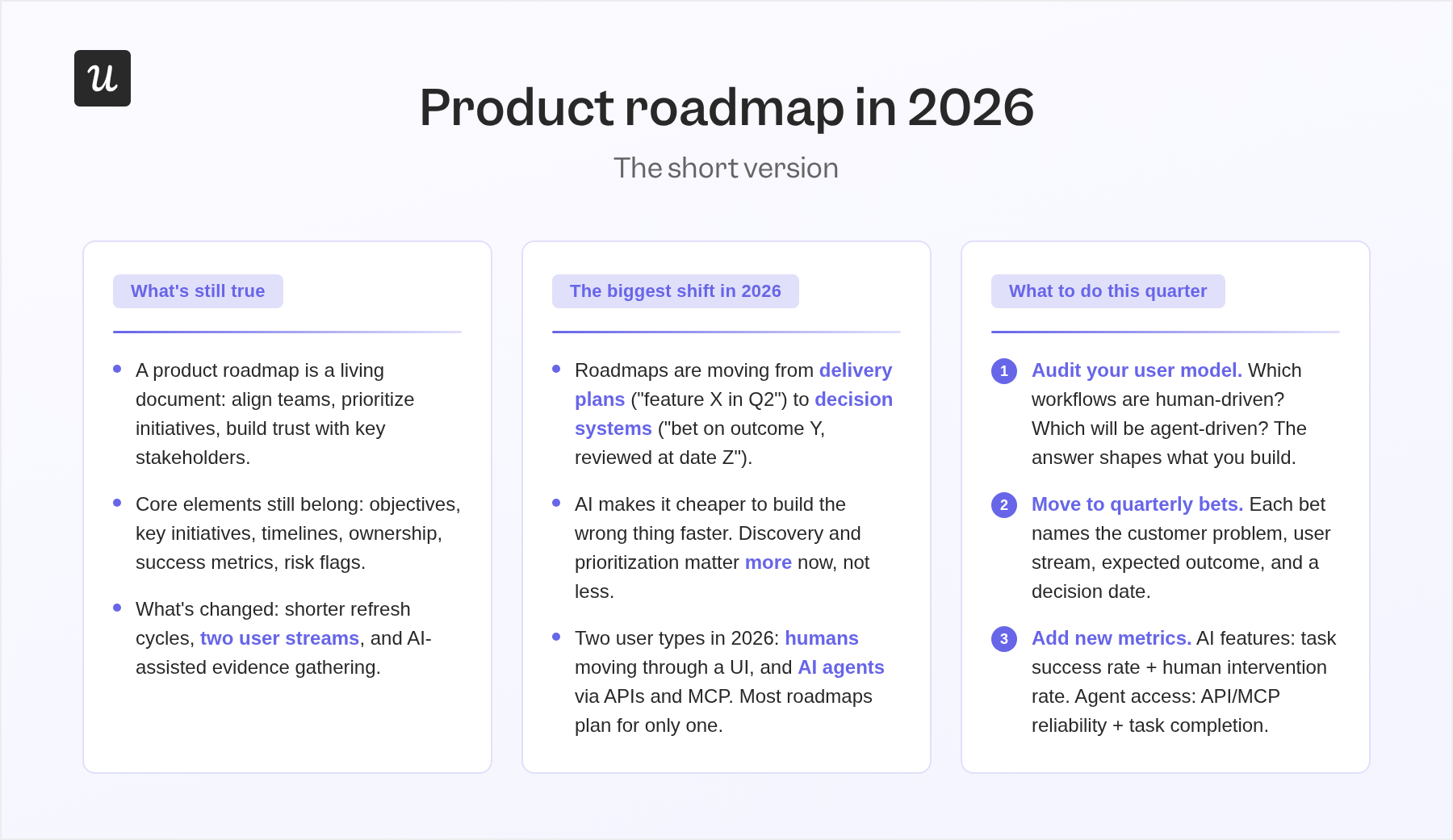

The short version, if you’re in a hurry:

What’s still true about product roadmaps

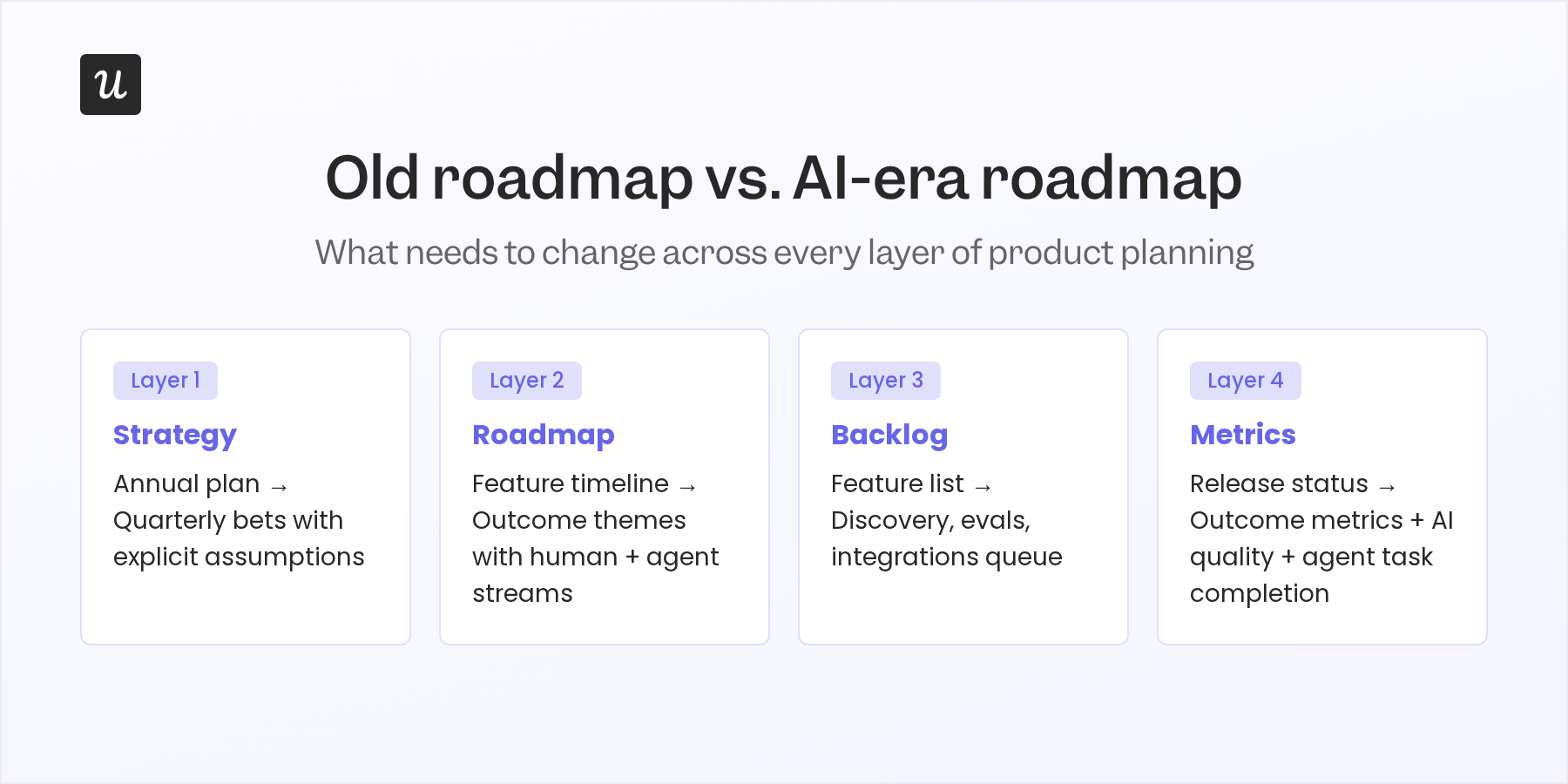

- A product roadmap is a living document that communicates your product’s vision, strategic direction, priorities, and progress. It serves to align teams, prioritize initiatives, and build trust with key stakeholders.

- The core elements (objectives, key initiatives, timelines, ownership, success metrics, risk flags) still belong on every roadmap.

- What’s changed is the operating model around it: shorter refresh cycles, two user streams, and AI-assisted evidence gathering.

The biggest shift in 2026

- Roadmaps are moving from delivery plans (“feature X in Q2”) to decision systems (“bet on outcome Y, with these assumptions, reviewed at date Z”).

- AI makes it cheaper to build the wrong thing faster. That’s why discovery and prioritization matter more now, not less.

- Your product has two user types in 2026: humans moving through a UI, and AI agents accessing your product through APIs and MCP integrations. Most roadmaps are built for only one.

What to do this quarter

- Audit your user model. Which workflows are human-driven? Which are, or will be, agent-driven? The answer shapes what you build and how you measure it.

- Move to quarterly bets with explicit assumptions. Each bet names the customer problem, the user stream, the expected outcome, and a decision date.

- Add two new metric categories. For AI features: task success rate and human intervention rate. For agent access: API/MCP reliability and task completion rate.

What a product roadmap actually is

A product roadmap is a shared source of truth that communicates your product vision, strategic direction, and priorities over time.

It’s not a sprint backlog or a project plan: those explain the what and how of specific development work, while a product roadmap covers the what and why behind product decisions.

Think of it as the country map showing where you’re going, while a project plan is the city blueprint.

5 types of product roadmaps

One reason roadmap conversations get messy is that “product roadmap” means different things to different audiences. Executives need strategic direction and business goals; engineers need specific features, milestones, and release context; customers need confidence about what’s coming without being held to delivery dates you haven’t committed to internally.

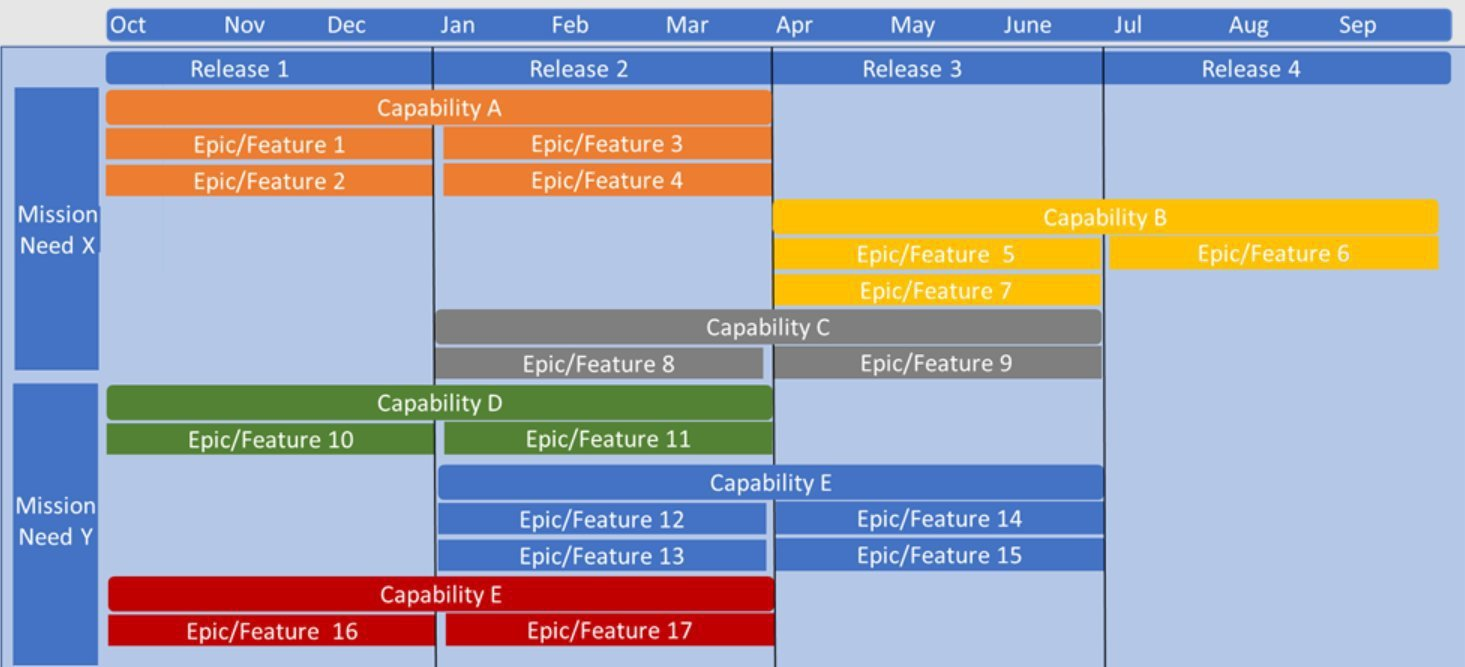

| Roadmap type | Good for | When to use | Example use case |
| --- | --- | --- | --- |
| 1. Timeline-based | Launch planning | Fixed dates and deadlines matter | Release plan for a new payment gateway |
| 2. Kanban-style | Agile teams | Priorities shift frequently | Visualizing backlog → in progress → done |
| 3. Goal / OKR-linked | Execs, quarterly planning | Tying features to business outcomes | Feature tied to “+10% retention” goal |
| 4. Feature-based | Customers, sales enablement | Communicating “what’s coming” | Showcasing integrations in development |
| 5. Now / Next / Later | PLG teams, simplicity | Flexible, low-maintenance view | Showing live features vs. future bets |

In fast-moving product areas, I’d push hard for flexible timeframes (Now/Next/Later or quarterly buckets) over hard deadlines. The more specific the date, the more you’ve turned a strategic document into a promise you’ll probably have to walk back.

OK, but if AI has increased shipping velocity this much, and uncertainty is this profound (as Gamma CEO Grant Lee wrote in his op-ed “Everything is uncertain”), do we even need roadmaps at all?

Well, I think we do.

Why product teams still need roadmaps

1. Alignment between your product, engineering, marketing, sales & CS teams

The most common thing I hear when roadmaps break down is “everyone was pulling in a different direction.”

That’s an alignment failure, and a product roadmap is the fix, especially in the AI era, when it’s easier to ship something than to think through what should be built in the first place!

When the entire team (product, engineering, customer success, marketing, sales) can see what’s being built and why, they stop duplicating effort and stop making conflicting promises to customers.

2. Prioritization needs to be a system – and a good product roadmap helps keep it on track

ProductPlan’s 2025 State of Product Management Report found that 54% of product managers primarily track features and releases rather than business outcomes; that number climbs to 70% under executive pressure.

That’s the feature factory pattern, and it’s what a focused roadmap is supposed to prevent.

Without one, every stakeholder thinks their request is the most important thing, and the PM becomes a tiebreaker instead of a strategist. A good product roadmap is supposed to prevent these ad hoc “throw-ins” and keep everyone on course (though execution still varies).

3. Stakeholders need to see the reasoning, not just the list of features

When priorities shift (and they always will), a product roadmap shows stakeholders why certain decisions were made.

It communicates that decisions were grounded in user research and discovery, not made arbitrarily.

I’ve seen teams lose a year of stakeholder trust because they changed direction without explanation.

The biggest 2026 shift: from delivery plan to decision system

Here’s the argument I’d make: the traditional product roadmap was built around one constraint, and it was the wrong one.

When engineering velocity was the bottleneck, it made sense to plan for the year ahead, commit to features, and then simply execute.

That model worked when customer feedback arrived in annual cycles and “shipping” was genuinely hard.

Neither condition holds anymore. McKinsey’s research on AI in software development confirms that top-performing companies see real productivity gains only when they rethink workflows and operating models, not when they just hand teams AI tools. The roadmap is part of that rethink.

The shift I’d push for is from a feature timeline to a portfolio of bets. Each bet names a customer problem, a target segment, a confidence level, explicit assumptions, and a decision date: a point when the team asks whether to kill, continue, or expand.

This matters because AI can make a team look productive while they’re building the wrong things. Shipping is no longer the scarce resource. Product judgment is.
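To make the bet structure concrete, here is a minimal Python sketch of a bet as a record, assuming a team keeps its bet portfolio somewhere structured (a tracker export, a spreadsheet, a small script). The field names, the `Stream` enum, and the `needs_review` rule are hypothetical illustrations, not a standard format; the rule just encodes “revisit at the decision date, or earlier if an assumption breaks.”

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Stream(Enum):
    """Which user stream a bet targets (the two-stream model)."""
    HUMAN = "human"
    AGENT = "agent"
    BOTH = "both"


@dataclass
class Bet:
    """A hypothetical quarterly bet record; field names are illustrative only."""
    problem: str              # the customer problem being addressed
    segment: str              # target segment
    stream: Stream            # human users, agent users, or both
    expected_outcome: str     # the outcome the bet should move
    confidence: float         # 0.0-1.0, the team's current confidence
    decision_date: date       # when to decide: kill, continue, or expand
    assumptions: list[str] = field(default_factory=list)
    broken_assumptions: set[str] = field(default_factory=set)

    def needs_review(self, today: date) -> bool:
        """Due for review at the decision date, or as soon as any assumption breaks."""
        return today >= self.decision_date or bool(self.broken_assumptions)


# Hypothetical example bet
bet = Bet(
    problem="New accounts stall during setup",
    segment="Self-serve SMB",
    stream=Stream.BOTH,
    expected_outcome="Activation rate +5 pts",
    confidence=0.6,
    decision_date=date(2026, 6, 30),
    assumptions=["Setup friction, not missing features, drives drop-off"],
)
print(bet.needs_review(date(2026, 5, 1)))  # False: before the decision date, nothing broken
bet.broken_assumptions.add(bet.assumptions[0])
print(bet.needs_review(date(2026, 5, 1)))  # True: an assumption broke early
```

The point of the design is that a broken assumption triggers the conversation on its own, instead of waiting for the next planning cycle to surface it.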

I’m not arguing that long-term thinking is dead. Companies with stable infrastructure, regulated commitments, or enterprise customer dependencies still need delivery precision at certain layers.

What should die is false precision: committing to feature-level plans 12 months out when neither user needs nor the AI tooling landscape will look the same by then.

What you can commit to over longer time horizons is user outcomes, which don’t change. But the features that deliver those outcomes can change at any time, depending on how the technology evolves.

OK, so we’ve discussed how vibe coding and AI-driven shipping velocity affect product roadmapping. But what about agentic users?

The two-stream model: planning for human and agent users

Most PMs I talk to haven’t registered this yet, and I think it’s the most important change in product planning right now:

Products in 2026 have two types of users: human users who log in, click through, onboard, and give feedback, and agent users that call APIs, retrieve data, execute tasks, and may never interact with a single screen your design team built. Kind of sad, I know 🥲

This isn’t science fiction anymore: Anthropic introduced MCP as an open standard for secure connections between data sources and AI-powered tools, and OpenAI added remote MCP server support to its API in 2025. Agent-to-tool access is mainstream now.

Our CEO, Yazan Sehwail, told me what this actually means for product teams in a recent conversation:

“If you as a user, say as a marketer wanted to see e.g. NPS data, user survey data, or any specific product usage data – you’re able to get your answer without having to go to Userpilot, without having to pull data and upload it to someone. So this is why MCP is gonna be a game changer.”

For product planning, this changes things quite dramatically.

A product roadmap built only for human users will miss an entire class of usage, fail to instrument the right things, and produce metrics that look fine while the product fails for half its traffic.

The roadmap question is no longer just “what features, screens, and workflows should we build?” It also has to ask which capabilities to expose to agents, what permissions and audit controls are needed, and how to measure successful agent usage when there are no click events to track.

I’d argue “API strategy” and “agent strategy” are no longer developer platform work.

They belong in core product planning, sitting alongside every other initiative with their own success metrics and ownership.

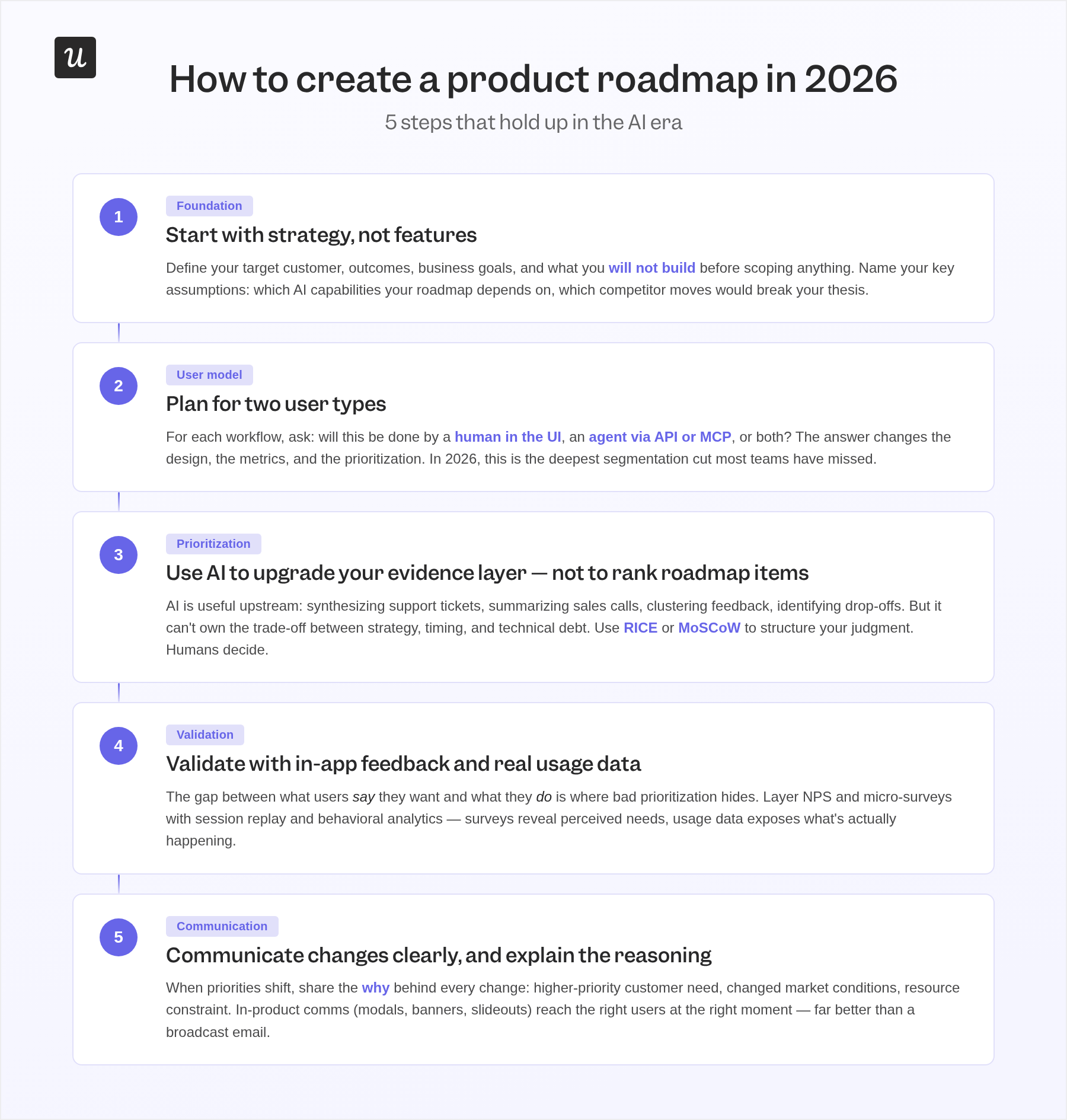

How to create a product roadmap in 2026

1. Start with strategy, not features

I see too many roadmaps that start with a list of features and work backward to a product strategy.

But your roadmap should be the output of a strategic decision, not the starting point. That means defining your target customer, the outcomes they need, the business goals you’re driving toward, and, critically, what you deliberately will not build before a single initiative gets scoped.

Take Slack.

Their roadmap decisions weren’t driven by feature requests for better chat.

They were driven by the strategic objective of removing friction from scattered workplace communication. That north star shaped what they built and, more importantly, what they declined to build. In the AI era, this step also means naming your key assumptions: which AI capabilities your roadmap depends on, which competitor moves would break your thesis, and which user behaviors you’re assuming will hold.

Write them down. A roadmap built on unstated assumptions surprises you every quarter.

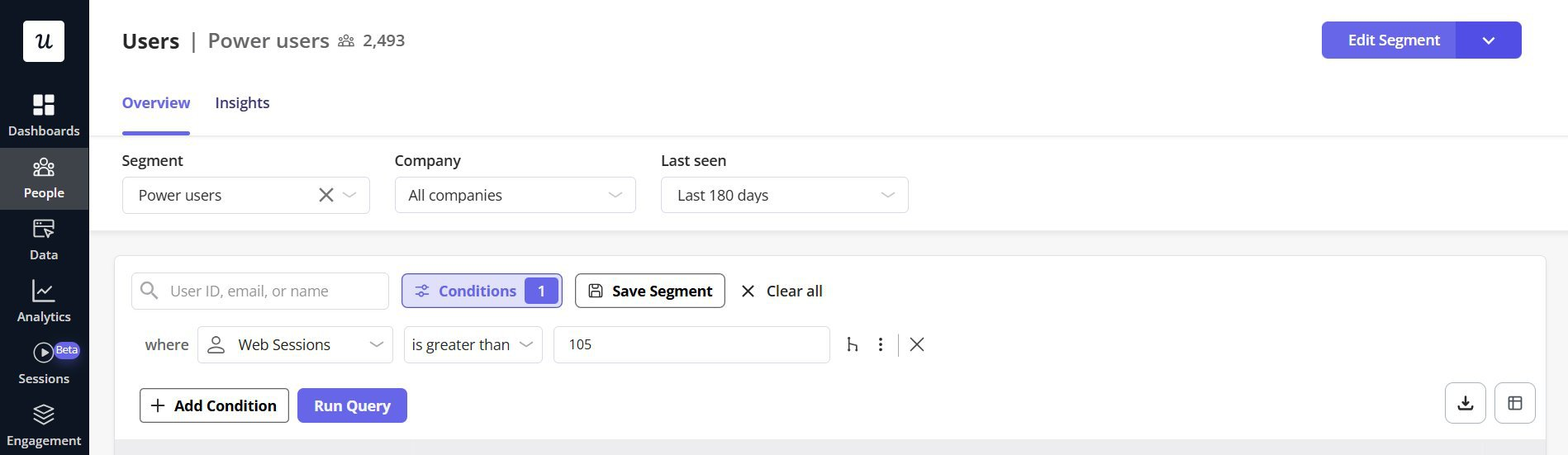

2. Plan for two user types

For each major workflow in your product, ask: will this be done by a human in the UI, by an agent through an API or MCP integration, or by both? The answer changes the design, the metrics, and the prioritization.

User segmentation has always shaped good roadmaps: new users need different things than power users, and admins need different things than end users. In 2026, the deepest segmentation cut is human user versus agent user, and most teams haven’t added it yet.

3. Use AI to upgrade your evidence layer, not to make decisions

The mistake I keep seeing is teams asking AI to rank roadmap items; that produces prioritization that is far too shallow.

Using AI to simply count and cluster feature requests ignores context that even the best context engineering won’t provide: strategy, timing, technical debt, competitive positioning, hours of conversations with users, tacit knowledge of the market, and “vibes.” And human empathy: understanding your users on a visceral level (ideally because you are one of them!).

AI is genuinely useful upstream (synthesizing support tickets, summarizing sales calls, clustering feedback themes, identifying usage drop-offs, mapping competitive gaps), but it can’t really weigh those signals against your business context.

Sachin Rekhi, a well-known PM practitioner, argues that great roadmaps are “as much art as science,” and that simple request-counting misses differentiation and nuance.

So use AI to collate the signals and give you the best possible evidence base, but then make the call yourself.

Prioritization frameworks like RICE (Reach, Impact, Confidence, Effort) or MoSCoW (Must-Have, Should-Have, Could-Have, Won’t-Have) are useful for structuring that judgment, but they’re tools – not answers.
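For reference, the standard RICE score is (Reach × Impact × Confidence) / Effort. The sketch below computes it in Python; the `Initiative` type, the two backlog items, and their numbers are hypothetical, and the impact scale in the comment (3 / 2 / 1 / 0.5 / 0.25) is the commonly used convention rather than a requirement.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """A hypothetical backlog item scored with RICE."""
    name: str
    reach: float       # users (or events) affected per period, e.g. per quarter
    impact: float      # e.g. 3 = massive, 2 = high, 1 = medium, 0.5 = low, 0.25 = minimal
    confidence: float  # 0.0-1.0, e.g. 0.8 for 80%
    effort: float      # person-months


def rice_score(item: Initiative) -> float:
    """RICE = (Reach x Impact x Confidence) / Effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


# Hypothetical backlog, ranked highest score first
backlog = [
    Initiative("Fix setup checklist", reach=2000, impact=1, confidence=0.8, effort=0.5),
    Initiative("New chart types", reach=500, impact=2, confidence=0.5, effort=3),
]
for item in sorted(backlog, key=rice_score, reverse=True):
    print(f"{item.name}: {rice_score(item):.0f}")
# Fix setup checklist: 3200
# New chart types: 167
```

The score only ranks the inputs you feed it; deciding whether those reach and impact estimates are right is still the judgment call.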

One specific use of AI that genuinely improves roadmapping: continuous signal monitoring.

Instead of waiting for quarterly planning to surface problems, AI can flag usage anomalies, support spikes, or post-launch drop-offs before they show up in NPS. That shifts planning from reactive to informed, which is a meaningful operational upgrade.
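As a rough illustration of the “flag a support spike” part of signal monitoring, here is a minimal Python sketch. It swaps in a plain statistical rule (a trailing-window z-score) rather than an AI model, and the ticket counts, `window`, and `threshold` values are hypothetical defaults to tune against your own data.

```python
from statistics import mean, stdev


def flag_spikes(daily_counts: list[int], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indices of days whose count exceeds the mean of the previous
    `window` days by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]  # the previous `window` days only
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any increase as a spike
            if daily_counts[i] > mu:
                flagged.append(i)
        elif (daily_counts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily support-ticket counts; day 8 jumps sharply
tickets = [40, 42, 38, 41, 39, 43, 40, 41, 95, 44]
print(flag_spikes(tickets))  # [8]
```

A rule this simple will be noisy on seasonal data, which is where richer anomaly detection earns its keep; the operational idea (check continuously, not once a quarter) is the same either way.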

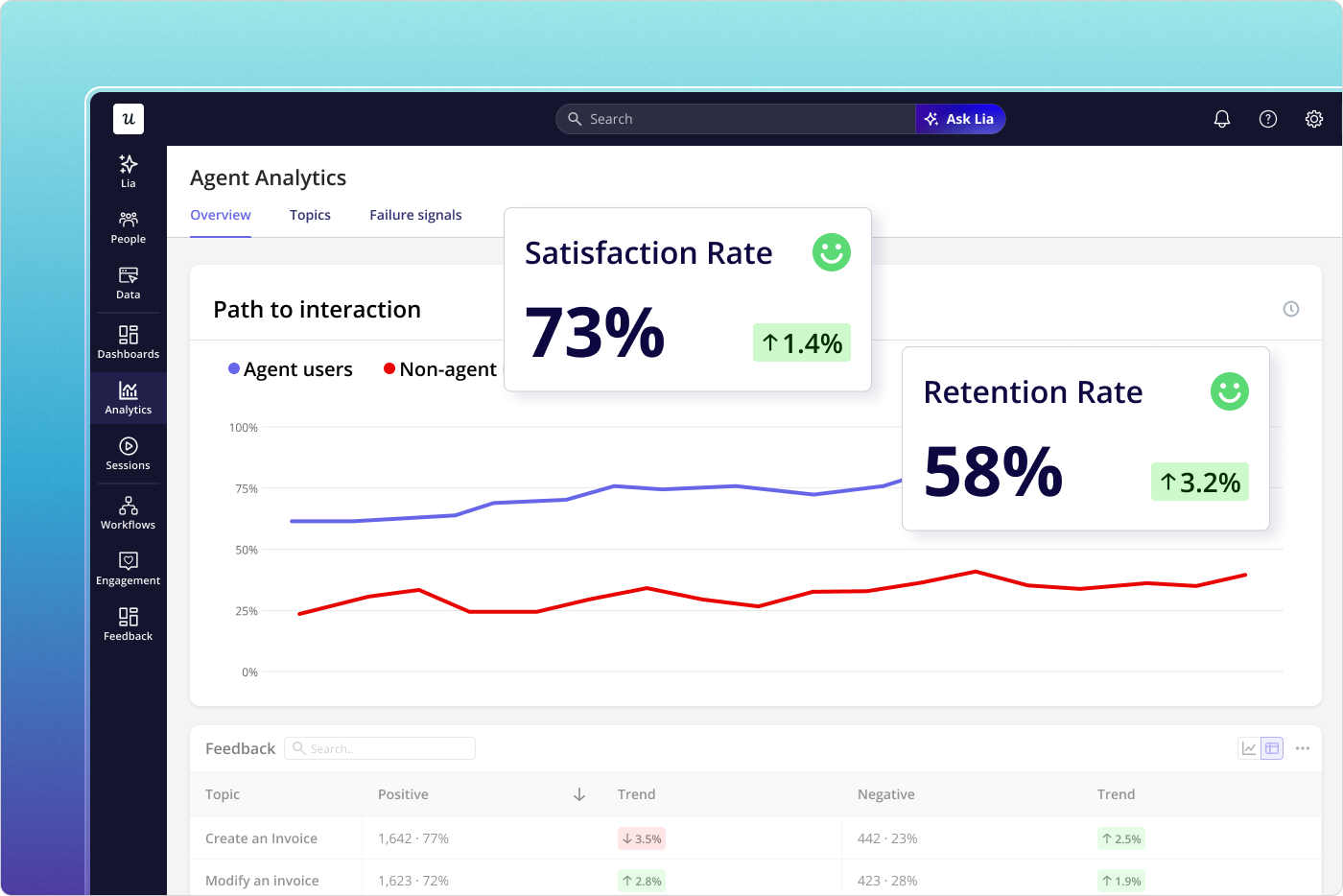

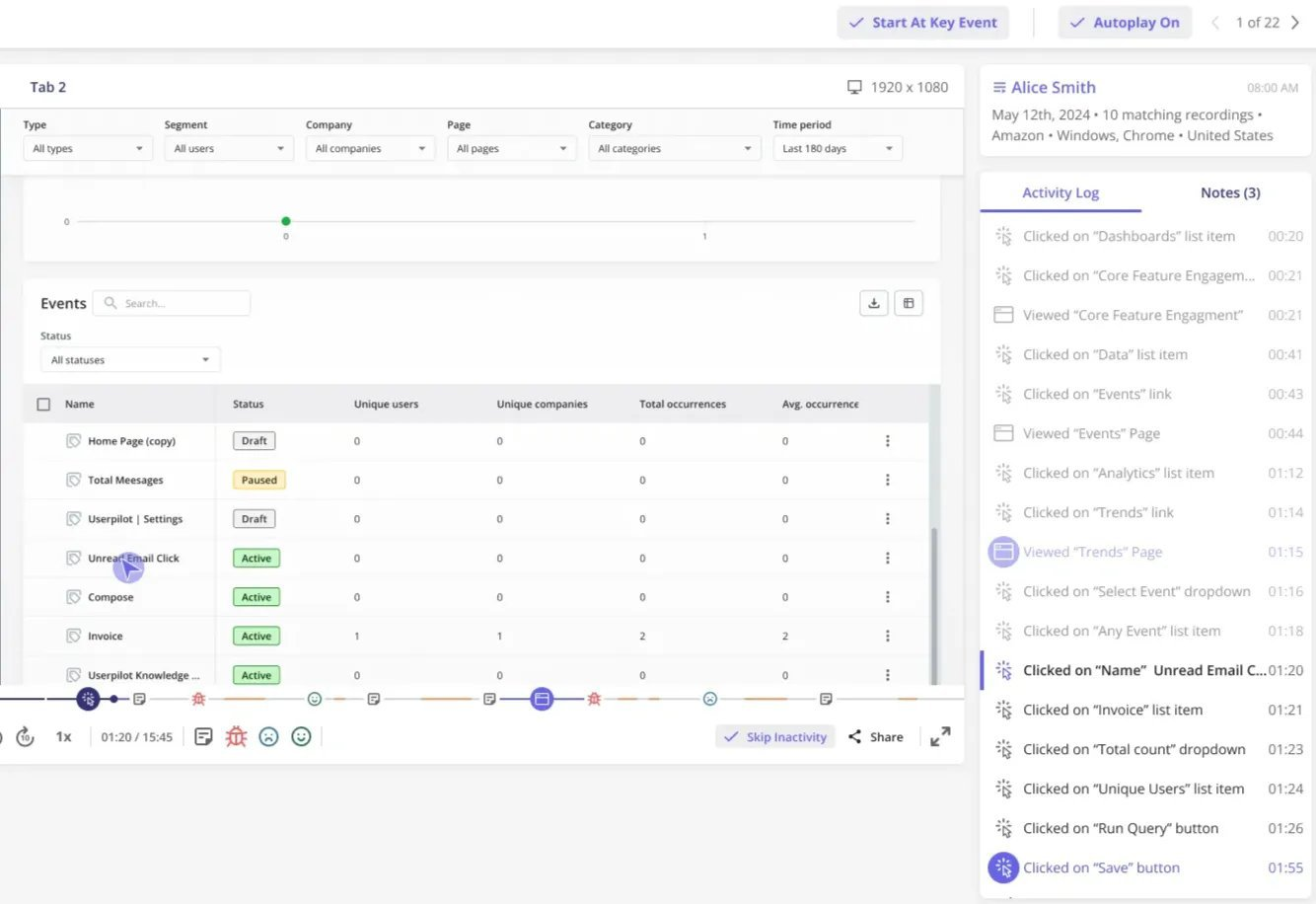

4. Validate with in-app feedback and real usage data

Even solid user interviews and sales call synthesis aren’t enough on their own. The gap between what users say they want and what they actually do in the product is where bad prioritization hides. When we launched Userpilot’s email onboarding feature, the funnel showed a sharp drop-off at domain verification.

Users were requesting more visualization options, but session data showed a setup problem, not a feature gap. Within a few hours of spotting this through session replay, I built a checklist and tooltip directly in Userpilot to surface the correct steps. Drop-off closed within days, no engineering ticket required.

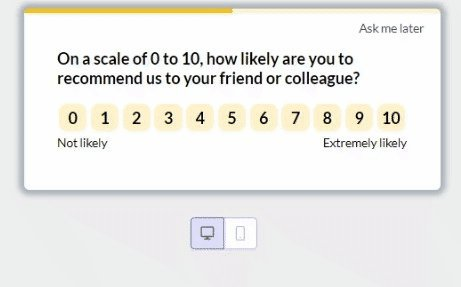

Layer NPS and micro-surveys with behavioral data so you have both signals. Surveys reveal perceived needs; usage data exposes what’s actually happening. Together, they make prioritization conversations concrete: you’re not debating opinions, you’re debating what the evidence shows.

5. Communicate changes clearly, and explain the reasoning

When priorities shift, features get cut, and timelines change, the question isn’t whether to communicate; it’s how to do it without eroding trust. The teams I’ve seen handle this well share the “why” behind every change, not just the change itself: higher-priority customer need, changed market conditions, resource constraint.

When stakeholders understand the reasoning, they can stay on the same page even when the plan shifts.

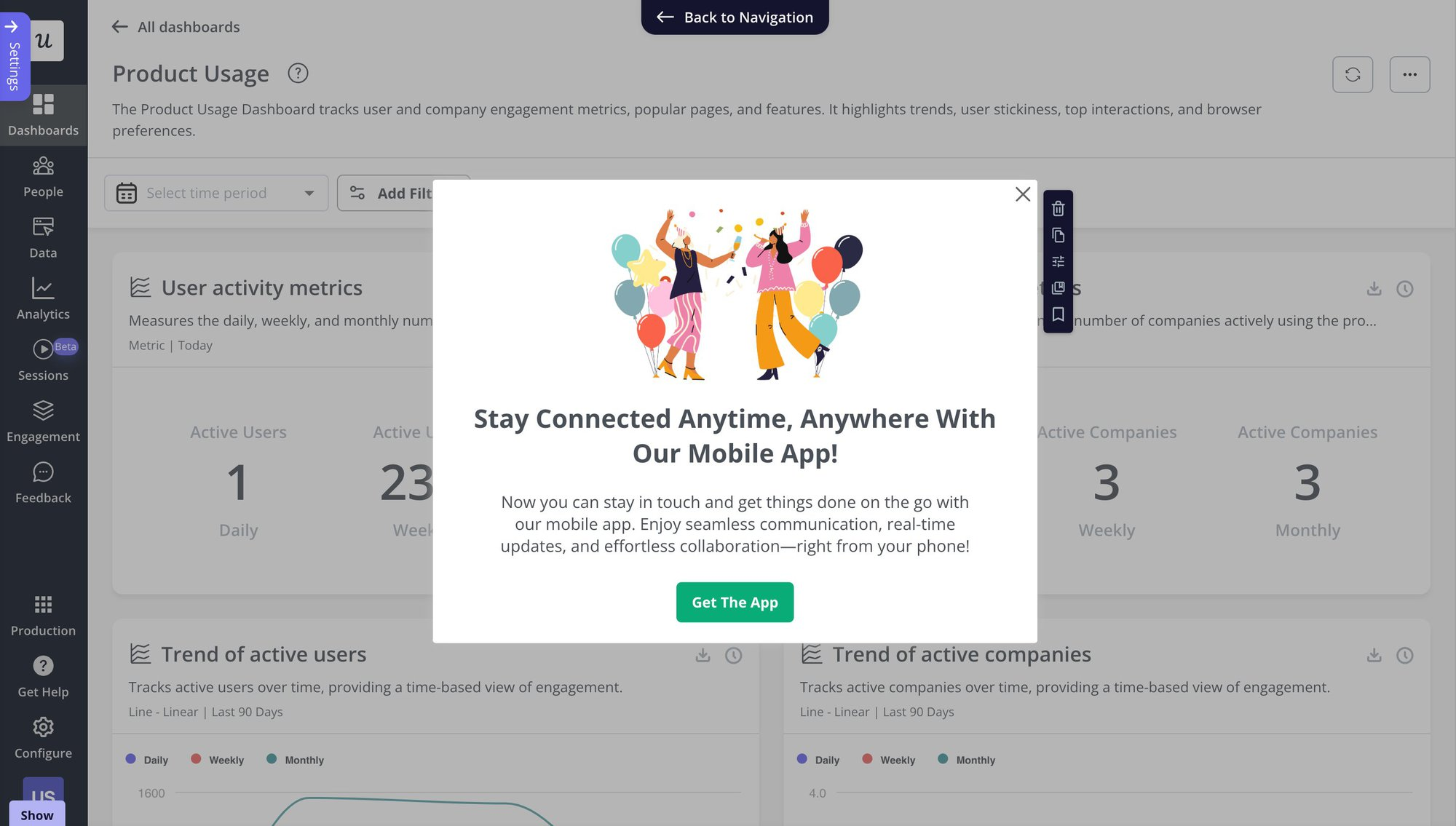

In-product communication works better than email for updates that affect users directly. Modals, banners, and slideouts can reach specific user segments at exactly the right moment: notifying only users who log in frequently on mobile when you launch a mobile feature, rather than broadcasting to everyone and creating noise.

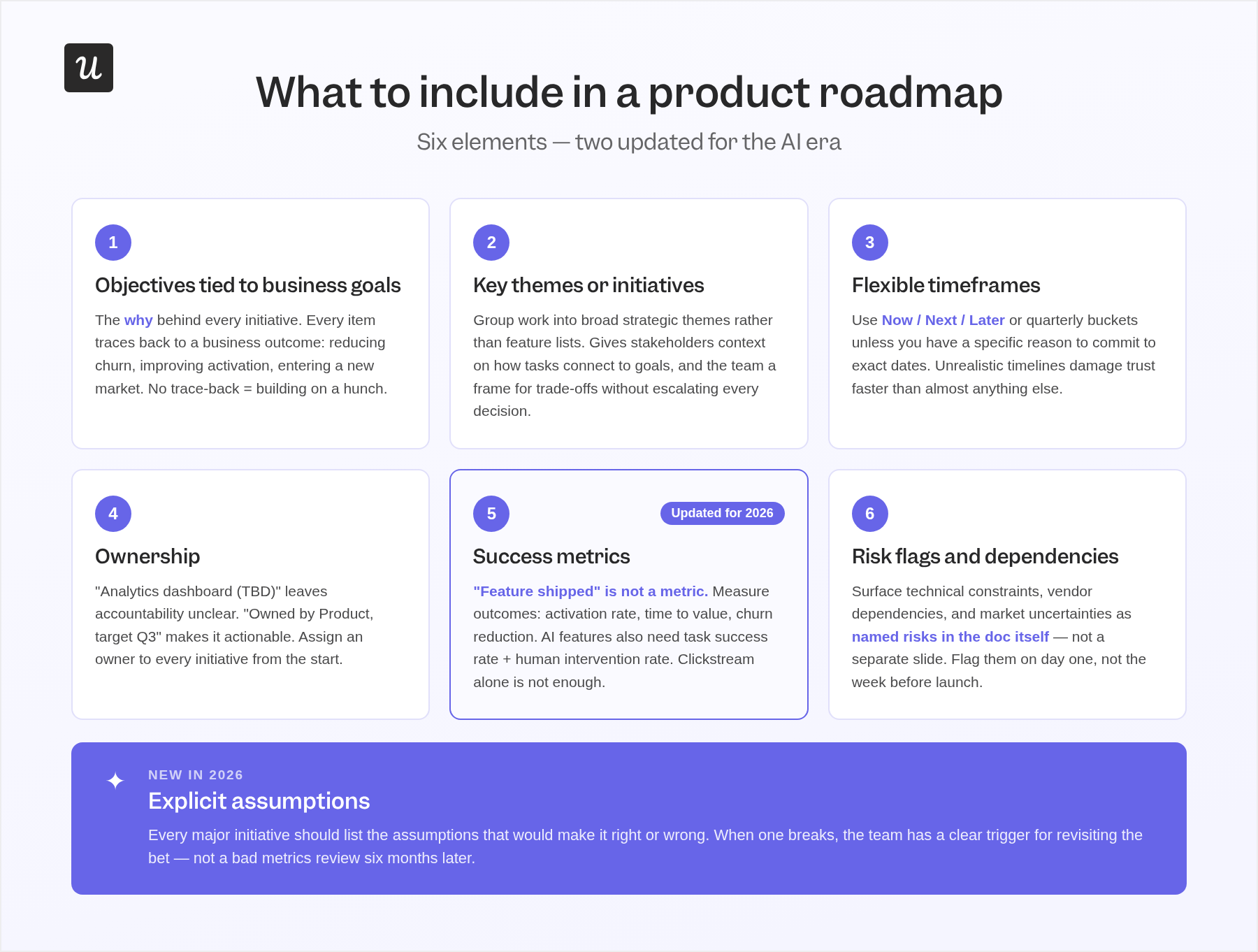

What to include in a product roadmap

Six elements belong on every roadmap. In 2026, two of them need updating to account for the AI context and agentic users:

1. Objectives tied to business goals

Objectives are the “why” behind every initiative: the business outcomes you’re pursuing, like reducing churn, improving activation, or entering a new market. Every item on the roadmap should trace back to one. If it doesn’t, that’s a signal you’re building on a hunch, not a strategy.

2. Key themes or initiatives

Group work into broad strategic themes (“strengthen activation,” “agent access layer”) rather than listing individual features in isolation. This gives key stakeholders the context they need to understand how specific tasks connect to company goals, and gives the team a frame for making trade-off decisions without escalating every conflict to leadership.

3. Flexible timeframes

Use broad categories (Now/Next/Later or quarterly buckets) unless you have high confidence and a specific reason to commit to exact dates. I’d rather under-promise on timing and over-deliver than build a roadmap that looks credible for two months and then collapses. Unrealistic timelines damage trust faster than almost anything else.

4. Ownership

“Analytics dashboard (TBD)” leaves accountability unclear; “Analytics dashboard, owned by Product, target Q3” makes it actionable. Assign an owner to every initiative from the start, because roles shift and clarity doesn’t survive ambiguity.

5. Success metrics (updated for 2026)

“Feature shipped” is not a success metric. The right metrics measure outcomes: activation rate, time to value, expansion revenue, churn reduction.

AI features need two additional standard metrics: task success rate and human intervention rate. Agent-accessible features also need API reliability and task completion rate. Clickstream alone won’t tell you whether an AI-era feature is working.

6. Risk flags and dependencies

Surface technical constraints, cross-team dependencies, external vendor timelines, and market uncertainties early, as named risks in the roadmap document itself, not in a separate “concerns” slide that nobody reads. A SaaS billing platform planning multi-currency support should flag its dependency on third-party payment providers as a known risk from day one, not an unwelcome surprise the week before launch.

What’s new in 2026: explicit assumptions. Every major initiative should list the assumptions that would make it right or wrong. When one breaks, the team has a clear trigger for revisiting the bet rather than discovering the problem through a bad metrics review six months later.

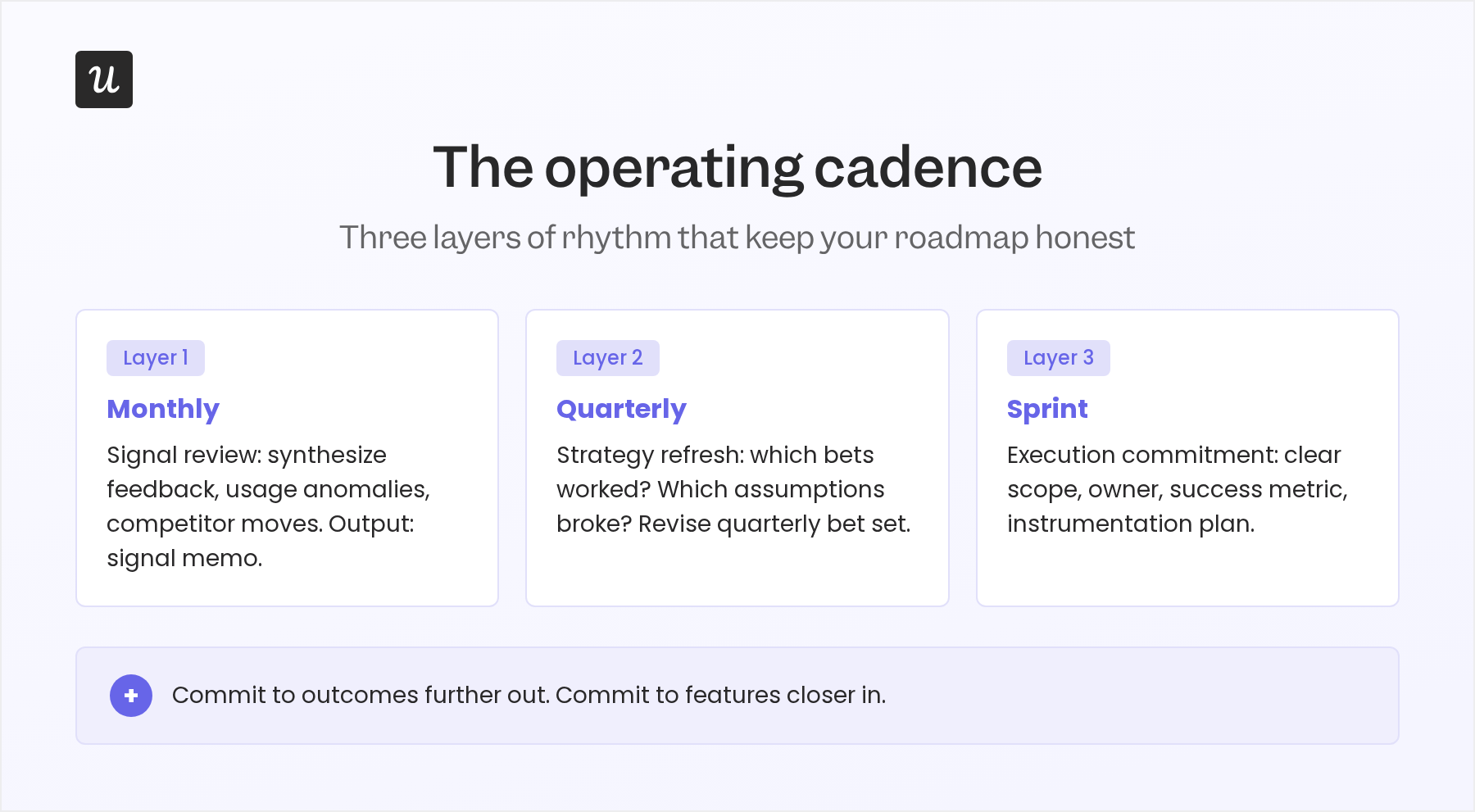

The operating cadence that keeps your roadmap *realistic*

A roadmap without a review cadence turns stale within weeks.

What I’ve found keeps it realistic is three layers of review, not one:

Monthly signal review:

Use product analytics, support summaries, sales call themes, and feedback clusters to produce a short signal memo: top opportunities, top risks, and any contradictions between what the data shows and what the roadmap assumes. AI is genuinely useful here for synthesis: summarizing patterns across hundreds of support tickets or customer calls is exactly the kind of work that benefits from automation.

Quarterly strategy refresh:

Review which bets worked, which failed, which assumptions held, and what changed across AI capabilities, competitor moves, and user behavior. This isn’t about thrashing the roadmap every few weeks; it’s a formal mechanism to adapt when the environment moves materially, producing a revised quarterly bet set.

Sprint-level execution commitment:

Teams commit to specific work only once the problem is validated, the scope is clear, and the success metric is defined. Anything further out stays as a bet or an assumption, not a fake delivery commitment.

New metrics for AI-era roadmaps

Traditional roadmap metrics still matter for human users: activation rate, feature adoption, retention, NPS, time to value.

But they’re incomplete once a meaningful share of your product usage comes from agents. Gartner named agentic AI its top trend for 2026, with 100% of surveyed enterprises planning to expand AI agent adoption, and noted that AI value requires clear value metrics and governance, not just a list of use cases.

| Metric type | What to track |
| --- | --- |
| Task success | % of agent tasks completed without human correction |
| Reliability | Error rate, failed tool calls, fallback rate |
| Human intervention | Escalation rate, correction rate, override rate |
| Value delivered | Time saved, tickets deflected, workflows completed |
| Agent discoverability | Successful API/MCP calls, tool invocation quality |

The implication I’d push hardest: instrumentation and evaluation belong on the roadmap alongside features. For AI product work, a release isn’t “done” when it ships; it’s done when the team can monitor output quality, understand failure modes, and improve the system. Roadmapping the observability layer is not optional.
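To show what “instrumentation on the roadmap” can mean in practice, here is a minimal Python sketch that derives three of the metrics from the table (task success, human intervention, and a tool-call error rate for reliability) from an agent task log. The `AgentTask` schema is hypothetical; your own logging will decide which fields actually exist, and the metric definitions follow the table rows rather than any standard.

```python
from dataclasses import dataclass


@dataclass
class AgentTask:
    """One agent task from a hypothetical event log; the schema is illustrative."""
    completed: bool         # did the agent finish the task?
    human_corrected: bool   # did a human edit, override, or redo the output?
    escalated: bool         # was the task handed off to a human?
    tool_calls: int         # API/MCP calls the agent made
    failed_tool_calls: int  # calls that errored or were rejected


def agent_metrics(tasks: list[AgentTask]) -> dict[str, float]:
    n = len(tasks)
    if n == 0:
        return {}
    total_calls = sum(t.tool_calls for t in tasks)
    return {
        # Task success: completed without any human correction
        "task_success_rate": sum(t.completed and not t.human_corrected for t in tasks) / n,
        # Human intervention: corrected or escalated
        "human_intervention_rate": sum(t.human_corrected or t.escalated for t in tasks) / n,
        # Reliability: share of tool calls that failed
        "tool_call_error_rate": (
            sum(t.failed_tool_calls for t in tasks) / total_calls if total_calls else 0.0
        ),
    }


# Hypothetical log of four agent tasks
log = [
    AgentTask(True, False, False, 4, 0),
    AgentTask(True, True, False, 6, 1),
    AgentTask(False, False, True, 3, 2),
    AgentTask(True, False, False, 5, 0),
]
print(agent_metrics(log))
```

None of these numbers can be computed unless the events are logged in the first place, which is exactly why the observability work needs its own line on the roadmap.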

Three product roadmap examples worth taking notes from

Buffer: public Kanban with community-driven validation

What I like about Buffer’s roadmap is that it turns a communication tool into a feedback loop. Eight columns (New, Blocked by API limitation, Exploring, On Hold, Planned, In Progress, Beta, Released) make it easy for anyone to see exactly where an initiative sits and why. The built-in upvoting and comment section means customers are actively validating priorities rather than waiting for a quarterly newsletter.

ClickUp: thematic organization and transparent progress tracking

ClickUp’s roadmap solves the “what does this feature have to do with the strategy?” problem by organizing everything into themes (admin capabilities, visibility and reporting) tied to yearly priorities. Users can drill into quarterly release notes to track progress without chasing a PM for an update. It’s a good model for teams that want external transparency without losing the strategic context.

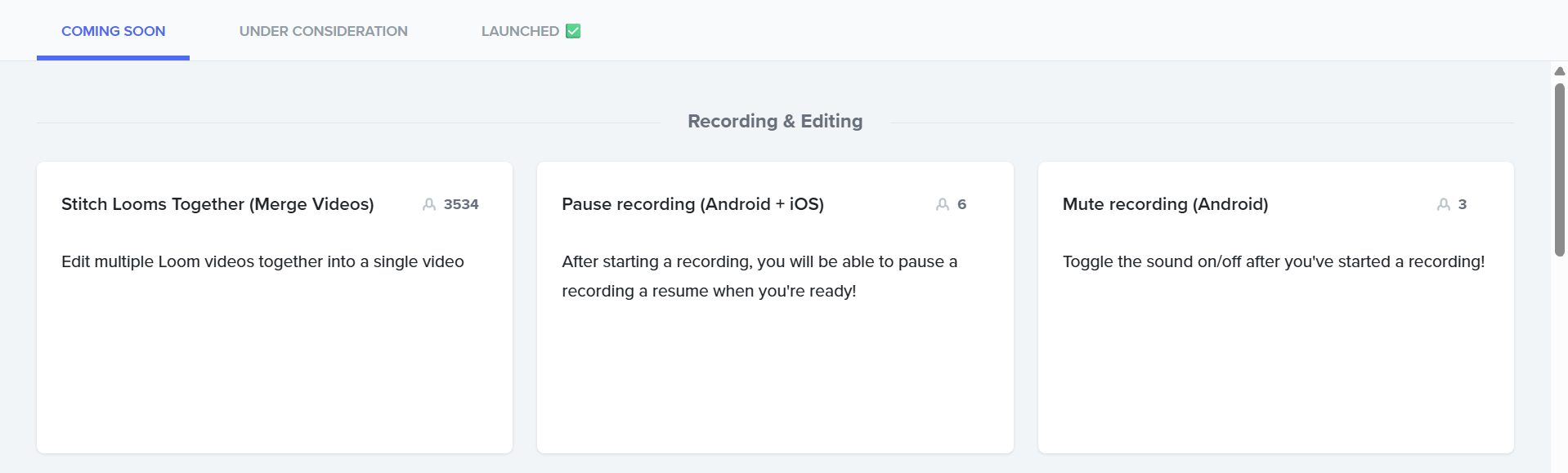

Loom: Now/Next/Later with three clean buckets

Loom’s roadmap uses three categories (Launched, Coming Soon, Under Consideration) with feature tags, upvotes, and thematic groupings. Three buckets sounds almost too simple, but it’s exactly what keeps a roadmap maintainable without becoming a full-time job. The built-in idea submission means customer insights feed back continuously rather than in a batch at planning time.

All three share the same underlying logic: transparency about priorities builds more stakeholder trust than secrecy, and public feedback loops produce better signal than internal guesswork. The format is secondary to the commitment to reasoning out loud.

Build a roadmap that guides decisions, not just plans

The best product roadmaps in 2026 aren’t the most detailed ones. They’re the ones that make trade-offs visible, surface assumptions before they become mistakes, and adapt when the environment moves. Whether you’re running a Kanban-style external roadmap like Buffer or a quarterly bet structure internally, the discipline is the same: clear business goals, defined ownership, outcome metrics rather than feature counts, and a regular cadence for asking what’s changed.

Userpilot gives product teams the data layer to make that discipline real: behavioral analytics to validate what users actually do, in-app surveys to capture what they say, and session replay to close the gap between the two. Book a demo to see how teams use Userpilot to keep their roadmap grounded in real usage, not assumptions.

FAQ

What would cause a product roadmap to fail?

A roadmap fails when it’s treated as a static document or feature wish list, built on assumptions instead of customer data, or lacks clear goals, ownership, and validation from real user data for continuous improvement.

What are the 5 stages of product management?

The five stages are: idea generation, product definition, development, launch, and post-launch monitoring. Each stage builds on the last, from ideas to actionable plans to measurable outcomes.

What is the first phase in a product roadmap?

The first phase of product roadmap planning is to define objectives, i.e., clear goals that anchor features to business outcomes and user needs.

How to create a roadmap in Excel?

You can create agile roadmaps in Excel using tables, timelines, or Gantt-style charts. However, Excel is limited when it comes to collaboration and tracking. Dedicated product roadmap software makes it easier to update, share, and align internal and external stakeholders.
