Product Management in 2026: Is AI Product Management a Lie?

There’s a joke going around PM communities right now: “Last year I was a PM with one product. This year I’m a PM with three. No, I don’t get paid more.” I’ve heard variations of it from at least five people this year, including one PM at a logistics company who was technically titled TPM but was running five squads across two portfolios and seven applications. That’s the scope a CPO had at most companies a few years ago.

I’m Abrar, a PM at Userpilot. Most of what I read on “what is product management?” in 2026 is still describing the 2019 version of the job, and it’s bothered me enough that I wanted to write something closer to what I see in my own week and across the PMs whose accounts I read closely.

Product management is still the practice of figuring out what to build, getting it shipped, and learning from what happens after. Martin Eriksson’s business × technology × user experience Venn is still the cleanest one-picture summary of the discipline, and I haven’t seen anyone improve on it.

Meanwhile, the practice has changed drastically as AI has gone mainstream. Three things shifted at once over the last 18 months. Scope, because PMs are owning more products with the same or smaller headcounts. Tooling, because AI compressed execution work by something in the range of 15 to 25%, which is meaningful but nothing like the 10x most leadership teams keep assuming. And structure, because what used to be one job is splitting into two or three tracks that the market is starting to price very differently.

This post is my attempt to put all three shifts in one place. It draws on a year of reading what working PMs say about their week, the public arguments Marty Cagan, Shreyas Doshi, Andrew Ng, and Lenny Rachitsky have been making about where this all heads, plus what I see day to day building product analytics features at Userpilot.

What is product management in 2026?

Product management is the practice of figuring out what to build, getting it shipped, and learning from what happens after. That definition has held since Martin Eriksson first drew his business × technology × user experience Venn over a decade ago. The core of the discipline still sits squarely in the middle of those three circles. Find problems worth solving. Decide what to build, for whom, and against which alternatives. Ship it. Measure what happens. Iterate.

The Eriksson model still works. What it doesn’t show is the shape of the role around the model, and that’s what’s changed in 2026.

A PM in 2018 typically owned one product, worked with one engineering team, and spent the bulk of their week writing requirements and chasing alignment. The PMs I see in 2026 are more often owning three to five product lines, working across two or three squads, prototyping their own first versions of features, and spending most of their week on judgment calls nobody has time to write down. The official job description hasn’t caught up with how much the texture of the role has changed.

Two forces are doing most of the reshaping.

- The first is AI compressing parts of the work. Customer discovery synthesis, competitor analysis, first drafts of product vision docs, and prototype building all happen in hours now instead of weeks. The efficiency gain is real, but smaller and messier than the hype suggests. GitHub’s research on Copilot found developers cut project-management time by about 25% and reinvested it into core coding, while METR’s 2025 randomized trial of 16 experienced open-source developers found AI tools slowed them down by 19%, even though those same developers believed they’d been sped up by 20%. The realized PM-level gain I see consistently lands in the 15 to 25% range on execution work. It is not the 10x that leadership’s panic emails keep assuming.

- The second is the role itself splitting apart. Marty Cagan has been writing about this in A Vision For Product Teams, arguing that the Product Owner role as it currently exists is being absorbed by AI plus engineers who run more of their own discovery. Andrew Ng put the other half of the same shift more plainly in a Lenny Rachitsky interview last summer: “Product management is becoming the new bottleneck. I don’t see PM work becoming faster at the same speed as engineering.”

Both of them are describing the same underlying movement from different sides. The role is expected to deliver more in less time, while the version of it that was mostly coordination quietly disappears from underneath everyone.

Product management vs. project management (and what both are losing to AI)

I think the textbook distinction still applies and is worth saying out loud, because most “what is product management” articles get it wrong:

- Product management owns the why and the what. Why this problem is worth solving. What we’re going to build, for whom, and what we expect to be true after we ship.

- Project management owns the when and the how. Sequencing, dependencies, status, keeping the train on the tracks.

In a healthy org, you have both. In 2026, you increasingly have one person doing both, and that person is the PM. The reason is structural. Teams are getting leaner. PMs are owning more product surface area. Project coordinators and scrum masters are getting cut or absorbed into product roles. The work has been reassigned.

Both disciplines are also losing their lowest-judgment work to AI. Backlog grooming, ticket writing, status updates, dependency tracking, and sprint reporting. All of that work is migrating into AI agents inside Jira and Linear, and into MCP integrations like Atlassian’s. What’s left after the migration is the part neither AI nor a project template can handle: deciding what matters, and getting humans to commit to it.

And that’s exactly the shape you see when you zoom out to the 2026 hiring data. The lowest-judgment work is being compressed inside existing teams and quietly disappearing from the openings companies are willing to fund in the first place.

What is the 2026 PM job market telling you?

When I pulled the 2026 numbers, the story of splitting stopped reading like a narrative I’d told myself and started reading like market consensus.

The May 2026 global job tracker, which Lenny Rachitsky’s State of the Product Job Market covers in detail, puts the worldwide PM job count at 24,895 open roles, up 1.8% month over month and 14% year over year. Most regions are growing. Canada is up 56% YoY, the UK around 22%.

The supply side, though, is brutal: roughly 880,000 people on LinkedIn who hold or have held a PM title are open to work, against those ~25,000 listed openings. That’s a 35:1 ratio, and it’s the math behind the ghosting, the take-home culture, and the desperation you see in PM communities right now.
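
As a quick sanity check on that ratio, here is the arithmetic in Python, using only the two approximate figures quoted above (the variable names are just labels for this sketch):

```python
# Approximate figures quoted above (May 2026 tracker and LinkedIn open-to-work count)
open_to_work_pms = 880_000  # people who hold or have held a PM title and are open to work
open_pm_roles = 24_895      # worldwide PM openings

candidates_per_opening = open_to_work_pms / open_pm_roles
print(f"{candidates_per_opening:.1f} candidates per opening")  # 35.3 candidates per opening
```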

The more interesting number is the level mix. Senior PM hiring is up 20% year over year. Leadership hiring is up 22%. Associate and mid-level roles rebounded modestly month over month, but remain the soft part of the market. Growth is concentrated at the top of the ladder, which is exactly where you’d expect it if companies were betting on fewer, more experienced PMs covering a larger surface area.

If you’re hunting for a PM role in 2026, here are three trends that are worth tracking.

- Remote is back. May 2026 saw remote listings jump 28% in a single month, the biggest gain in two years of tracking. The US is driving most of the rebound. One pattern worth knowing if you’re job-hunting in North America: US companies are increasingly posting remote PM roles in Toronto and elsewhere in Canada, paid in CAD through local entities at roughly a 30% effective discount. The “remote reset of 2024” is reversing fastest in senior roles, which fits a market where companies are competing for a thinner pool of experienced product builders.

- Take-home assessments are in crisis. The longest thread I’ve seen in PM communities all year is on exactly this. Candidates report spending 8 to 40 hours on take-home cases, getting ghosted, and occasionally watching the company ship their proposed feature months later. On the hiring side, a growing share of submissions are AI-generated. As a result, many hiring managers now prefer live use-case discussions and live prompting sessions that show how applicants think with the help of AI. Meanwhile, take-homes are increasingly seen as disrespectful for director-level candidates and above, and several CPOs refuse to do them at all.

- “AI Product Manager” titles are a minefield. The strongest career-warning thread I’ve read this year argued that 70 to 80% of jobs posted as “AI PM” are not really product roles. They’re implementation or solutions engineering roles dressed up with an AI label. You configure prompts for enterprise clients, design conversation flows, and manage deployments of whatever model the engineering team built. Two years into one of these roles, your résumé says AI PM, but your work history reads as customer success, and the infrastructure-AI roles you’d want to move into expect an ML background you don’t have. The diagnostic question that the thread offered, which I think is the best single question to ask in an AI PM interview:

“Ask what the last three things this team shipped were, and who decided to build them. If the answer is engineering or the client, you’re not in product.”

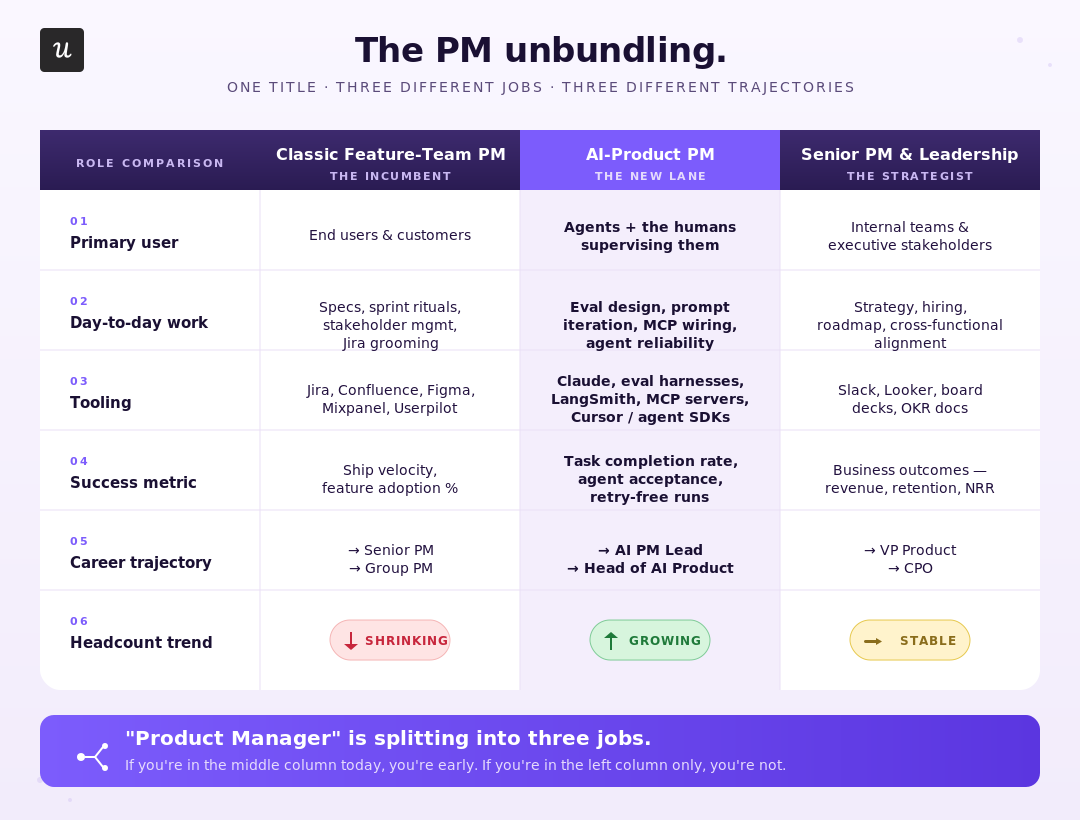

Why is the PM role unbundling? Three career tracks emerging in 2026

In 2026, a product manager is no longer simply a generalist sitting between business, engineering, and design. That model is breaking under the weight of three different jobs now operating under the same title.

These three tracks aren’t entirely new. But the differences among them, which used to be marginal, are now structural.

Track 1: The classic feature-team PM (shrinking)

The version of the job that most “what is product management” articles still describe. You own a slice of the product, work with one engineering team, write PRDs, run discovery, ship features, and measure adoption. Most PM bootcamps still train for this role. Most company hiring funnels are still built around it.

It’s also where the job market data looks the worst. Mid-level PM hiring is the soft spot in the market, and the parts of the role that live here are the ones AI is absorbing fastest: backlog grooming, ticket writing, sprint coordination, and requirement translation. Cagan has been writing the eulogy for the Product Owner role on exactly these grounds for two years, and the feature-team PM is next on the same axis. The work isn’t disappearing so much as it’s getting consolidated into fewer people who own more surface area.

If you’re a feature-team PM in 2026, the practical question I’d sit with is what you do in a day that an engineer with Claude Code in the next room can’t do faster.

Track 2: The AI-product PM (growing fast, mostly mislabeled)

The track everyone wants to be on, and the one where most job postings do not offer what they advertise.

The real version of this track owns an AI-native product or feature. You define what good output looks like for a probabilistic system. You write evals. You decide acceptable error rates and design fallbacks for when the model fails. You measure task completion instead of clicks. It’s a real specialty, demand for it is genuine, and the people doing it well are getting paid accordingly.
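
The eval-and-fallback loop described above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not any particular team’s or vendor’s tooling: `toy_model` stands in for a real model call, `EvalCase` and its `required_phrase` substring check are assumed stand-ins for a real definition of good output, and the 10% error budget is an arbitrary example.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical: a "model" is any function from prompt text to output text.
Model = Callable[[str], str]

@dataclass
class EvalCase:
    prompt: str
    required_phrase: str  # assumed stand-in for "what good output looks like"

def error_rate(model: Model, cases: list[EvalCase]) -> float:
    """Fraction of eval cases whose output is missing the required phrase."""
    failures = sum(
        1 for case in cases
        if case.required_phrase.lower() not in model(case.prompt).lower()
    )
    return failures / len(cases)

def release_gate(model: Model, cases: list[EvalCase], max_error_rate: float) -> bool:
    """True if the measured error rate is within the agreed error budget."""
    return error_rate(model, cases) <= max_error_rate

def answer_with_fallback(model: Model, prompt: str,
                         is_acceptable: Callable[[str], bool], fallback: str) -> str:
    """Serve the model's answer only if it passes a runtime check; otherwise fall back."""
    output = model(prompt)
    return output if is_acceptable(output) else fallback

# Toy stand-in model: no real LLM is called here.
def toy_model(prompt: str) -> str:
    return "Refunds take 5 business days." if "refund" in prompt else "I'm not sure."

cases = [
    EvalCase("How long do refunds take?", "business days"),
    EvalCase("What is your refund window?", "business days"),
    EvalCase("Do you ship to Canada?", "canada"),
    EvalCase("How do I cancel?", "cancel"),
]
print(error_rate(toy_model, cases))          # 0.5
print(release_gate(toy_model, cases, 0.10))  # False
print(answer_with_fallback(toy_model, "Do you ship to Canada?",
                           lambda out: "not sure" not in out.lower(),
                           "Let me connect you with a human."))
```

Real graders are often richer than a substring match (rubrics, human review, or model-based grading), but the decisions the PM owns stay the same: what counts as a pass, what error rate is acceptable, and what the user sees when the check fails.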

The trap is that most companies hiring for “AI PM” don’t have this work to offer. They have an OpenAI or Anthropic API wrapper they want shipped to enterprise clients, and they need someone to configure prompts and design rollout flows. That’s a valuable job, but it’s a customer success or solutions engineering job with a product label on top. The harshest read I’ve seen, which I broadly agree with: the only legitimate AI-product PM roles are at the four or five companies building foundation models, plus a small number of well-funded application-layer startups solving genuinely novel problems. Everyone else is API plumbing.

The career trap is worth taking seriously. Two years of “AI PM” experience that’s actually CSM work doesn’t transfer cleanly into either lane. The infrastructure-AI roles want an ML background. The traditional PM roles read your résumé as customer success. You can end up specialized into a corner.

The bigger pattern I keep coming back to is that we’ve been through this title cycle before. Mobile PM, Web3 PM, Crypto PM, Growth PM, Data PM, and even Gamification PM. Each one had its moment as a separate specialty, and each one quietly merged back into “just product management” once the underlying tooling became baseline. The AI PM title will probably go the same way within two years. The narrower question worth asking right now is whether the role you’re being offered is one of the genuinely AI-product roles, or whether it’s the temporary specialty title that’s about to dissolve.

Track 3: Senior PM and product leadership (stable, AI literacy as table stakes)

The senior end of the role is the most stable, and also the most demanding it’s been in a decade.

If you’re a senior PM, group PM, or director in 2026, you’re expected to own strategy and outcomes the way you always did, plus prove AI literacy on top of it. That means having a point of view on agentic workflows, knowing how to evaluate AI-native features your team ships, sometimes maintaining your own side projects to demonstrate fluency, and translating between executive-level AI panic and what your team can deliver in a quarter.

I brought this up with Yazan Sehwail, Userpilot’s CEO, a few weeks ago, and he shared the same thought:

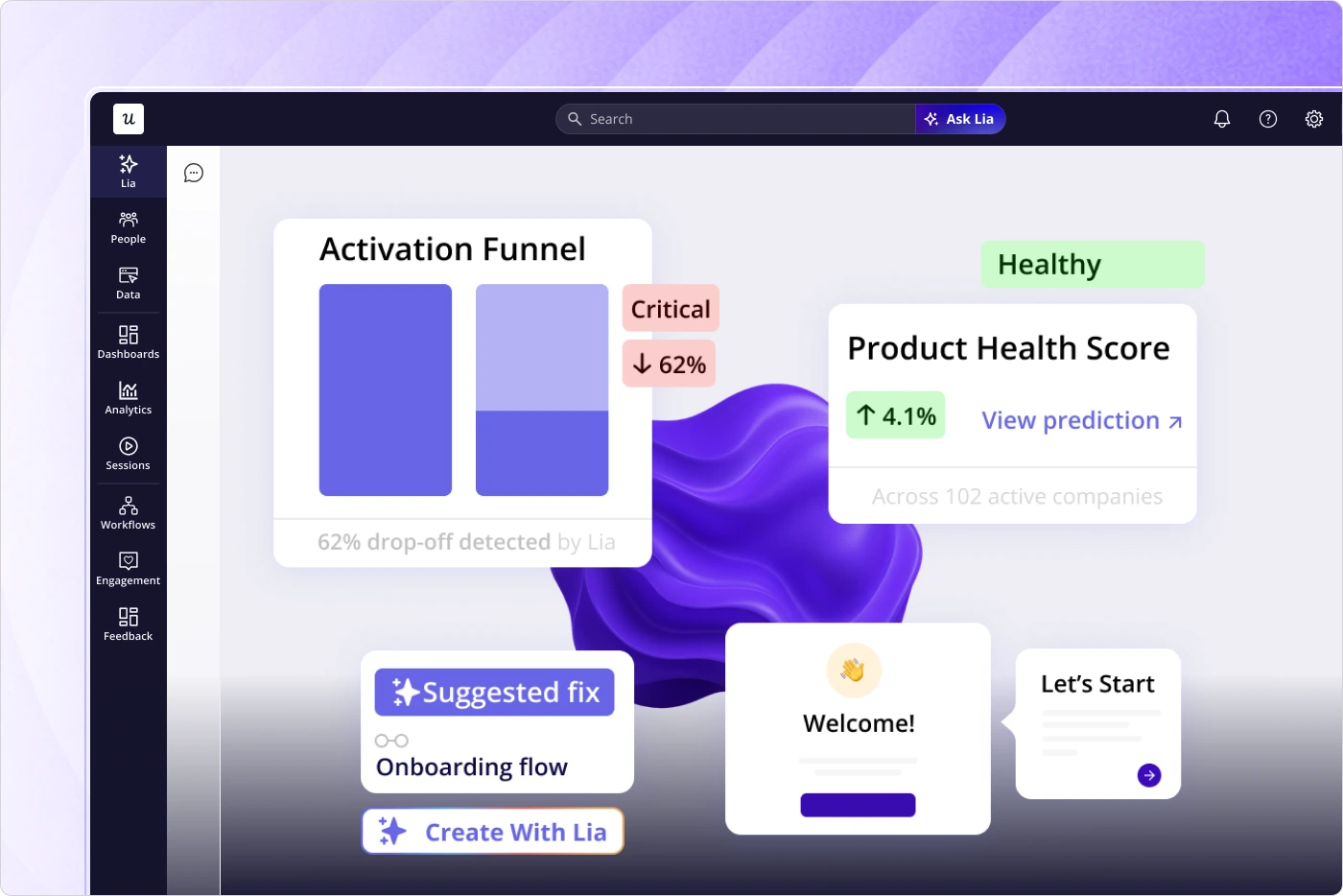

“Instead of every quarter, you’re releasing one or two features, now you’re releasing seven, eight, nine. It becomes even harder for product teams to manually track each one, understand usage for each one, and come up with hypotheses and insights on each one.”

This is also the part of the role our own product team at Userpilot has been rebuilding the platform around. The design principle Yazan keeps coming back to is: “you change from an operator to monitoring. The AI is operating. You’re just evaluating and monitoring the agent workflow.”

That’s our philosophy behind Lia, our AI product agent. You hand Lia a project goal — improve trial-to-paid conversion, reduce churn, increase adoption of a feature you just shipped — and it builds the funnel reports, runs the segment analysis, surfaces the drop-off, runs predictive models on who’s likely to churn or convert, and points to what’s worth acting on. The senior PM stops being the person who builds the dashboard and becomes the person who decides what to do about what the dashboard says.

Managing upward also remains one of the most durable PM skills in 2026. In my experience, the strategy as written in strategy docs is rarely the strategy as practiced. Day to day, most senior PM time goes into translating leadership recency bias, sales-driven HiPPO mandates, and the latest agentic-AI announcement into something the team can ship without burning out.

Senior PM and leadership hiring is up 20 to 22% YoY. That’s where the market is investing.

And the quiet fourth track: the Product Engineer

Worth an honorable mention because at smaller companies, especially in consumer and AI-native startups, the boundary between product and engineering is dissolving. One PM I read described their team displacing Figma with Replit, after which the design team tried to absorb product as a function. The “Product Engineer” title is starting to appear in JDs: someone who can reason about user problems, design the experience, and ship the code.

It’s not a clean replacement for the PM role. It is, increasingly, the structure that wins at the seed and Series A stages, which is why I think it’s worth watching over the next two years.

How has AI changed PM work?

If I had to summarize how AI shifted my own week in 2026, it’s about a 15 to 25% efficiency gain on execution, no change on judgment, and an entirely new failure mode for teams that confuse the two.

The product management workflows that have genuinely moved

These are the use cases I see consistently mentioned by PMs whose accounts I trust, and that I’ve validated in my own week:

- Customer research synthesis. Recording 20 to 30 customer research calls, getting transcripts, clustering themes across them, and drafting a first-pass synthesis. A week of work in an afternoon.

- PRD and strategy drafting. Used as scaffolding for the doc. The PMs getting value here treat AI output the way you’d treat a junior PM’s first draft: review hard, push back, rewrite the load-bearing claims yourself.

- Competitor and market analysis. Deep research across a target list, mining public RFPs, and summarizing competitor docs and changelogs. Two days of manual work have become two hours of review.

- Prototyping. The biggest single workflow shift of 2026. Claude Code, Cursor, Replit, Figma Make, Lovable, and the new image-gen tools have made the cost of a working prototype low enough that the question “should I prototype this or write a spec?” has flipped for most teams.

- Testing and edge-case exploration. A use case that crept up on me. Pointing Claude Code at a new flow as a guest user and watching it click through is now the fastest way to surface edge cases I’d never have thought to write down. Less polished than dedicated QA, but it catches a meaningful share of the misses.

- Internal tools. A growing share of PMs are vibe-coding their own custom dashboards, roadmap visualizers, PRD chatbots, and analytics endpoints. The advanced version is running a production-replica MCP against your source code, so the agent can answer business questions without you bothering an engineer. Ideally, this grows into a set of small daily agents: one that digests yesterday’s sales calls into a top-objections digest (replacing the manual call-auditing that used to take a day a week), one that turns a competitive-landscape research request from 3 days of work into 30 minutes, one that spins up landing-page variants for A/B tests, and one that pulls analysis from internal databases without bothering a data analyst. You don’t have to chase the latest model or the hottest new tool. Personally, as long as the output is consistent and the outcome is right, I’m not switching.

- The career maintenance layer. Self-evaluations, peer reviews, OKR drafts, stakeholder updates, and release notes. The unglamorous admin layer of the job has lost most of its time cost.

The pattern across all of these is the same. AI did what AI is good at: synthesis, summarization, and structured generation. The PMs reporting the biggest leverage are the ones using it on the specific tasks where the cost of being slightly wrong is low and the time savings are high.

One trap I’ve fallen into myself, and one that comes up in every PM thread on this topic: the PMs who vibe-code six different tools in their first AI-enthusiastic month end up as the human API between their own tools. The dashboards don’t talk to the PRD chatbot. The PRD chatbot doesn’t talk to the analytics endpoint. You spend the time you saved copying and pasting context between things you built yourself. The version of this that compounds, in my experience, is a smaller set of tools that share context, not a larger set that each works in isolation. Pick two or three that solve real bottlenecks, integrate them properly, and resist the urge to spin up a new one every time Claude Code makes it easy.

The failure modes: AI slop and the grounding problem

The deepest critique I’ve seen in PM communities this year goes by the name AI Slop: PMs writing PRDs with AI, leads using AI to summarize them, engineers using AI to build from them, and nobody thinking through the problem at any step in the chain. Put more pointedly:

“PMs forgot why they write PRDs in the first place. It was never about the document. It was about forcing yourself to think through the problem.”

The structural reason this is happening is simple. AI sped up the parts of the role that produce artifacts. It did not speed up the part that produces judgment. Leaders see the 5-minute PRD output, reset their expectations to the 5-minute number, and the upstream review work (the load-bearing assumption hunt, the political alignment, the deciding-what-not-to-build) doesn’t compress to fit. Engineering velocity ran ahead of decision velocity, which is exactly the framing Andrew Ng used when he called PM the new bottleneck.
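
The arithmetic behind that bottleneck is essentially Amdahl’s law: when only one part of a workflow speeds up, the part that doesn’t caps the overall gain. Here is a minimal sketch with hypothetical hours (assumed for illustration, not measured data from any team), where artifact production gets 10x faster and judgment work doesn’t compress at all:

```python
def cycle_hours(artifact_hours: float, judgment_hours: float, artifact_speedup: float) -> float:
    """End-to-end hours for one cycle when only artifact production speeds up."""
    return artifact_hours / artifact_speedup + judgment_hours

# Hypothetical split of one feature cycle: 10 hours producing artifacts
# (PRD drafts, summaries, mockups) and 30 hours of judgment work.
before = cycle_hours(artifact_hours=10, judgment_hours=30, artifact_speedup=1)
after = cycle_hours(artifact_hours=10, judgment_hours=30, artifact_speedup=10)

print(before, after)             # 40.0 31.0
print(round(before / after, 2))  # 1.29
```

Under these assumed numbers, a 10x speedup on artifacts yields only about a 1.3x faster cycle overall, which is why resetting expectations to the “5-minute number” breaks down.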

There’s a second failure mode worth naming that lives next to AI Slop: the grounding problem. Most of the “AI is underwhelming in our company” complaints I read have very little to do with the model itself. The problem is what the model is reading. Docs drift. Tickets are outdated. Slack answers contradict each other. Features change behind flags.

When companies plug a copilot into that mess, the copilot becomes a confident summarizer of stale information, and the output feels almost right but never quite right. A better model won’t fix this. The only fix that works is building an underlying source of truth (PRDs that match what shipped, runbooks that reflect the current architecture, etc.) before expecting any internal AI tool to produce something a PM can trust.

Shreyas Doshi has been making the same point from the opposite direction. Product sense, in his framing, is the only PM skill that will matter going forward, because the rest can be approximated by a model with the right context. I think he’s right, and the practical version of his argument comes down to one rule: use AI as a sparring partner, not as a substitute for your own thinking.

What has gotten harder for product management?

The parts of the job AI didn’t compress are the ones I think about most as a working PM. They turn out to be the parts that most determine whether a product succeeds, and they’ve gotten harder since 2023.

Go-to-market and distribution

Go-to-market and distribution are still the hardest parts of the role, and they’ve gotten harder. AI generates launch content easily. It does not build the customer relationships, partner motions, or category credibility that actually drive adoption. The bottleneck on growth at most B2B SaaS companies right now is distribution, not building, and anyone telling you otherwise hasn’t talked to a sales leader recently.

The expectation creep

The PM role was already absurd by 2022. The list of skills you needed to do it well looked like: part psychologist (to understand users), part strategist (to set direction), part salesperson (to pitch your ideas), part analyst (to make sense of data), part designer (to have a vision for the experience), part project manager (to keep things moving), and part diplomat (to navigate stakeholder politics). All while owning outcomes you don’t fully control, through people who don’t report to you.

In 2026, on top of that, sits a new expectations layer: side projects, GitHub activity, prompt libraries, vibe-coding fluency, an opinion on agents, and side bets on whether AI evals are a real specialty. Meanwhile, the bar for getting hired has gone up.

One credible prediction making the rounds is that this creep will push the strongest PMs out of corporate roles entirely. They now have most of what they need to build their own products, and AI has removed the last bottleneck, which was needing engineers to ship. The counter-argument, which I find equally credible: “indie hackers existed before AI too,” and GTM is the actual moat. Both can be true at once. Some senior PMs will leave. Most won’t, because building a business is much harder than building a prototype.

There’s also a particular leadership pattern where risk appetite is collapsing at the same time as appetite for AI features is exploding. Companies are pushing teams to “learn AI” on vague mandates while simultaneously demanding 99% certainty before any new experiment ships.

B2B and regulated reality

A worthwhile pushback I’ve seen against the consumer-AI-PM worldview comes from PMs in industrial software, hardware, fintech, and healthcare. AI compresses execution in those spaces, but it doesn’t dissolve the bottlenecks: domain depth, compliance, legacy integrations, 12 to 24-month enterprise sales cycles, certifications, and change management with risk-averse buyers. If you’re a PM in regulated B2B, the AI story is a smaller share of your job than the consumer-app threads make it sound. The moat in your world is context, relationships, and operational maturity, not vibe-coding speed.

Burnout is the modal experience

The PM community in 2026 is exhausted. More products per PM, more skills demanded, less protection from above, more leadership panic, more reorgs. If you’re a PM reading this and the day-to-day feels heavier than it used to, you’re not making it up. The role is genuinely tougher than it was two years ago, and the slack the org used to give you has mostly been reabsorbed.

What are the roles inside a product management team now?

Even with the role splitting, the way I think about a healthy product org still has a set of distinct functions. The titles matter less than the work, but the work itself is moving around.

- Product manager. Owns the why and the what for a product or a meaningful slice of it. In smaller teams, they touch the how and the when by default. They run customer research, set strategy, prioritize, and own outcomes.

- Product marketing manager (PMM). Owns positioning, launches, messaging, and the bridge from product to market. The PMM role is undergoing its own unbundling: lifecycle and onboarding work is shifting toward PMMs at a lot of companies, while the launch-machine version of the role compresses.

- Product owner. The role most exposed to AI absorption in 2026. In the strict Scrum sense (backlog owner, sprint coordinator, requirement translator), it’s being folded into the PM role at most healthy teams I see, or eliminated entirely.

- Product designer/UX designer. Owns the user experience, interaction design, and (often) some of the research. The line between designers and PMs has blurred faster than I expected. Designers are now using AI tools that let them build functional prototypes, and PMs are using design tools that let them mock up flows without involving design at all. That blurring is exactly why the Product Engineer role is gaining traction at smaller companies.

- Product/data analyst. Owns instrumentation, dashboards, and the quantitative side of decision-making. The most underrated role in 2026, in my view. As shipping accelerates and AI products get harder to measure, the team that owns analytics ends up owning the truth.

- Engineers and tech leads. Build the product. Increasingly run their own slices of discovery, especially in AI-native teams. The relationship between PMs and engineers is shifting from “PM writes the spec, engineer implements” toward a tighter loop where both sides bring more to the table.

The shape of the team in 2026 is leaner, more cross-functional, and less title-bound than the org charts of three years ago. The work didn’t go away. It got concentrated.

The bet I’d make on product management for the next two years

A point that doesn’t get said out loud often enough: AI didn’t make the PM role obsolete, but it did raise the cost of being the PM who can’t ship without a dev ticket.

That’s the part to sit with. Eighteen months ago, the decision loop most PMs lived inside (notice problem, write spec, wait for sprint, ship, measure) was the default, and nobody penalized you much for running it. Today, the same loop is two to four times slower than what’s now possible for the PM next to you who’s set up an analytics-plus-AI-plus-no-code stack to ship in an afternoon. The cost of being the slow PM has gone up, and the reward for being fast has gone up with it.

My bet is that the gap will be measurable by mid-2027: in promotion rates, in surface area owned per PM, and in time-to-shipped-experiment showing up as a real metric on review forms. The PMs who use the next twelve months to compress their decision loop are going to widen that gap noticeably against the ones still using AI mostly for self-evaluations and meeting summaries.

If you want a working view of what that compressed loop looks like in a real B2B SaaS product, book a Userpilot demo. You’ll see what activation, retention, and monetization measurement feel like when the PM doesn’t have to route every in-product experiment through engineering, and you’ll come out with a clearer picture of what the platform layer can take off your plate, possibly with the first version of the workflow this whole post is about.